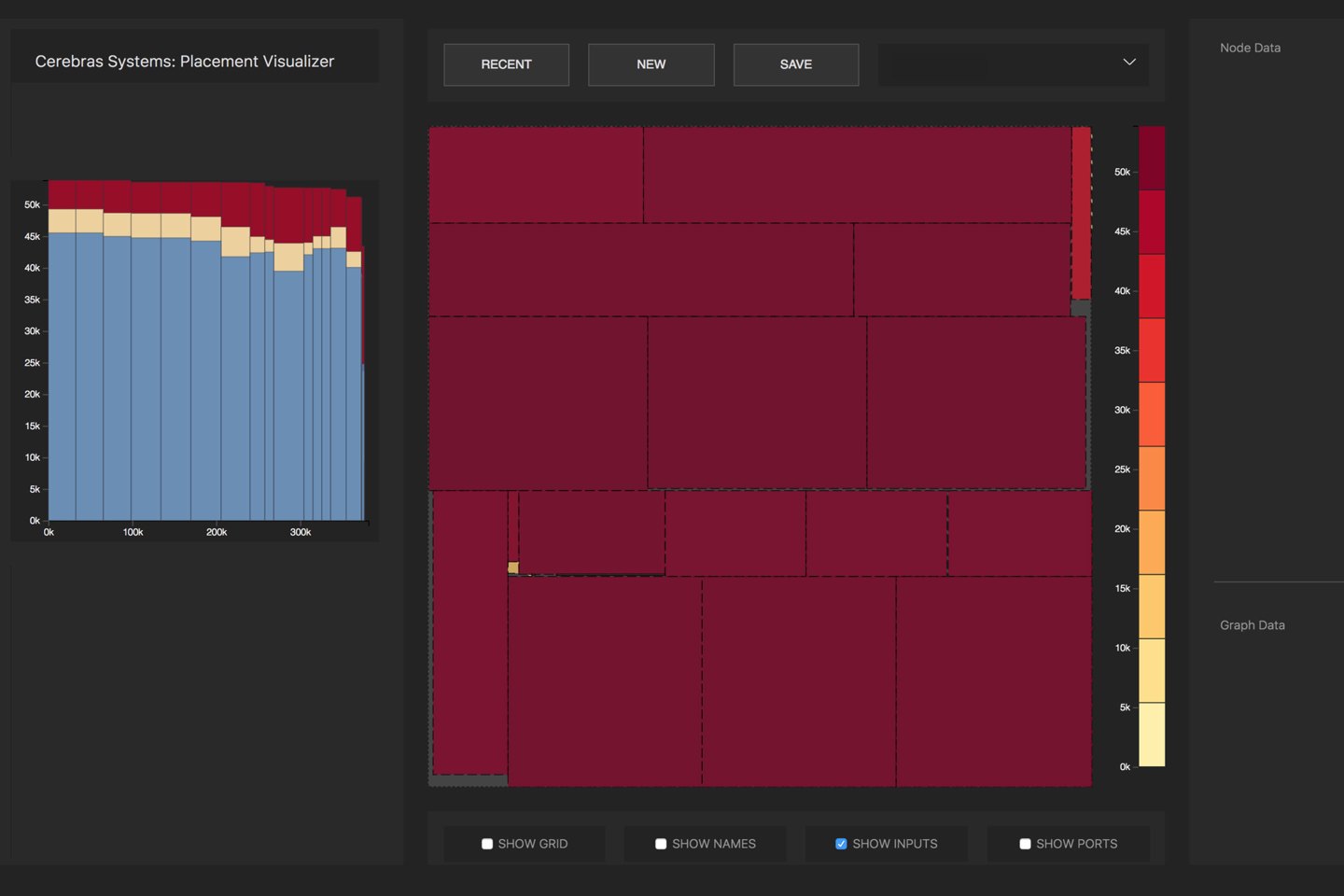

Half an hour’s drive southwest from downtown Chicago, at Argonne National Laboratory, a major U.S. supercomputing facility, a computer dashboard shows artificial intelligence at work. In rectangles of various sizes, fitted together like a monochromatic Mondrian painting in red, a digital heat map charts the activity of a neural network as it thinks about drugs. At the end of hours of work, the neural network will produce an assessment of the correlation between various drugs and tumor cells that could someday lead to new treatments for cancer.

Argonne has many very powerful computers, but something different is happening on this dashboard. The system running this AI is 100 times faster than Argonne’s fastest computer. The speed-up not only means that it takes less time to run neural networks; it may ultimately mean a qualitative leap in the kinds of work on cancer drugs the lab can do. What will be revealed, scientists hope, are connections that they haven’t anticipated yet.

“Imagine a computer model that we train with the data we have for all known drug molecules,” says Rick Stevens, Argonne’s associate laboratory director for computing, environment and life sciences. “We have the model explore the drug response landscape, and it generates drugs, it generates molecules with desirable properties.”

This is artificial intelligence entering an age of unknown unknowns. Most drug studies look for correlations between a short list of known drugs and a selection of cell lines, to see whether the drug will slow tumor growth. At Argonne, Stevens and his team can simulate all drug molecules for correlations to disease they might never have thought to check.

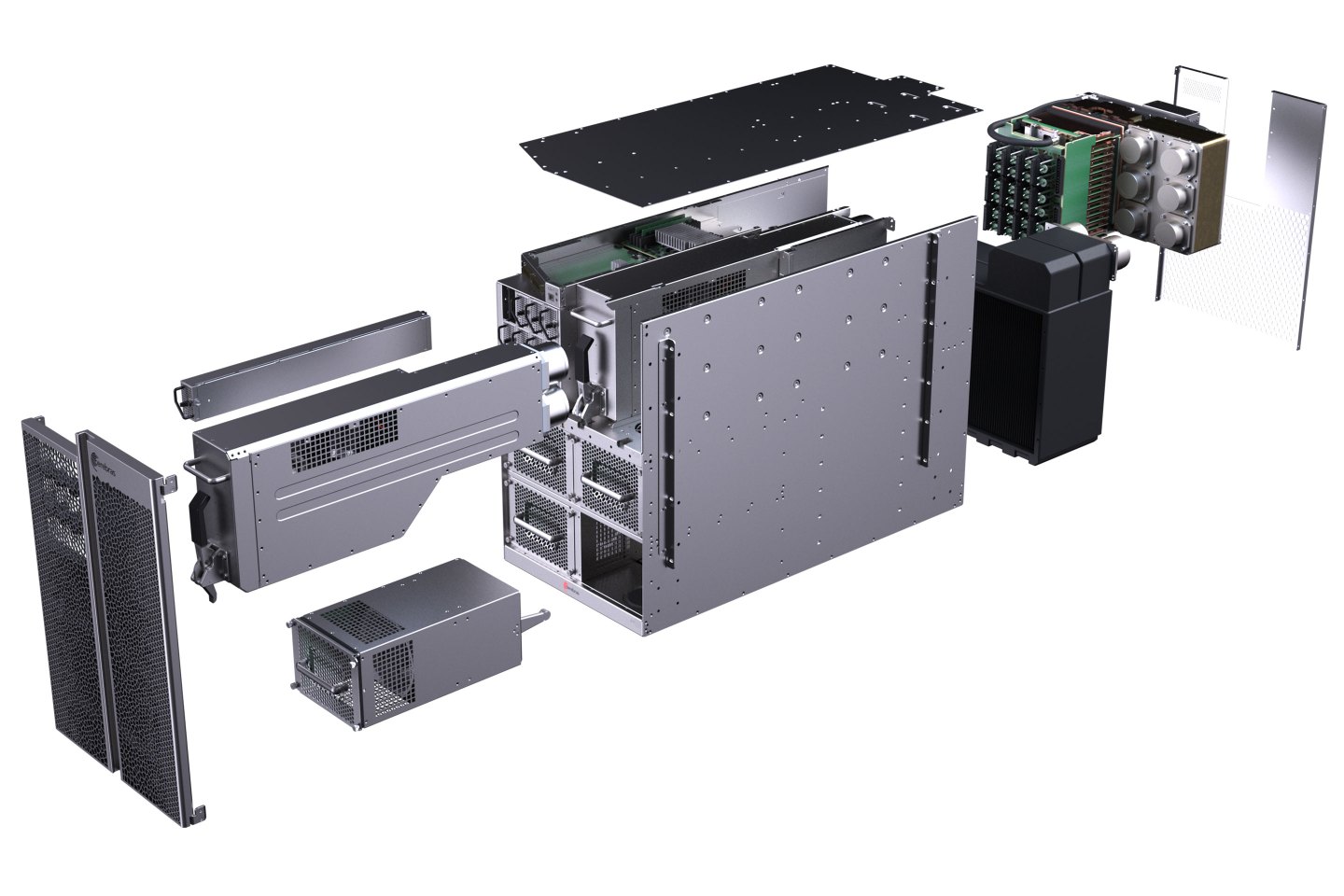

The technology that makes that possible sits in a metal box the size of a dormitory fridge. Called the CS-1, the two-foot-high cabinet is the first computer from Silicon Valley startup Cerebras Systems. Cerebras in August unveiled the world’s largest computer chip, almost the size of an entire twelve-inch silicon wafer, called the WSE. The CS-1 computer, as Cerebras cofounder and chief executive Andrew Feldman puts it, is the race-car chassis that houses that WSE race-car engine.

Feldman claims that the CS-1 is more powerful than larger competing systems that take up multiple racks of equipment, while also consuming less power, thanks to an ingenious heat-extraction system. A copper-colored block sitting behind the giant Cerebras chip, called a cold plate, conducts heat away from the chip. That cold plate is cooled by pipes of cold water running past the plate. Fans then blow cold air, carrying the heat away from the pipes.

Argonne has had the box for only a few months, Stevens explains, but he says “we are very optimistic about what we are seeing so far” in using it. “Having a factor of 100 in training [neural networks] is so important,” he says, because, “you can still remember what the question was when the job finishes.”

Impressive as that hardware is, the Mondrian-style dashboard may represent the more profound breakthrough. Supercomputers and supercomputer-class machines such as Google’s Pod are challenging to program. Somebody has to figure out how to spread a neural net over hundreds, sometimes thousands of individual chips. Argonne has spent years developing such tools for supercomputers such as “Summit” and “Theta.”

The Cerebras CS-1 is just one giant chip in one self-contained cabinet. The entire neural network can be put on that one chip, and Cerebras has made a program that automatically figures out how to do it with whatever neural net scientists may hand it. The program, called a compiler, optimizes how the numerous math operations of a given neural net are spread across the WSE’s circuits. The rectangles of the heat map are the visual representation of those parts of the neural net as they are computed in parallel.

“We have tools to do this” on supercomputers, says Argonne’s Stevens, “but nothing turnkey the way the CS-1 is, [where] it’s all done automatically.”

In a sense, Cerebras is democratizing supercomputers. That makes it somewhat fitting that the machine is being unveiled today in Denver at SC19, this year’s iteration of the International Conference for High-Performance Computing, Networking, Storage and Analysis, an annual gathering where the biggest computer systems make their debut. The Cerebras compiler is turning machines that were the province of teams of scientists into something a grad student could program.

That ease may open doors to new kinds of AI. One prospective category would include really big experiments, like the molecule search mentioned above. Another would be to add what are called “confidence intervals,” a measure of uncertainty, to studies of drugs. Computing a confidence interval costs extra time, so, on slower systems, it’s only done at the end of long batches of experiments.

The CS-1 is fast enough that a confidence interval can be done every single time. “It makes research more subtle,” says Stevens of the prospect of having confidence intervals. “It gives you a measure of how close you are in some sense, and so it changes how you approach the problem.” Connections that would turn out to be a waste of time to explore can be more quickly dispensed with, he says.
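For readers who want to see what a confidence interval looks like in practice, here is a minimal sketch of one common way to compute one, the percentile bootstrap. It is an illustration only, not the method Argonne or Cerebras uses; the scores and the function name `bootstrap_ci` are hypothetical, made up for this example.

```python
import random
import statistics


def bootstrap_ci(samples, n_resamples=2000, level=0.95, seed=0):
    """Percentile-bootstrap confidence interval for the mean of `samples`.

    Hypothetical helper for illustration; not taken from any Argonne or
    Cerebras software.
    """
    rng = random.Random(seed)  # fixed seed so the result is repeatable
    n = len(samples)
    # Resample the data with replacement many times and record each mean.
    means = sorted(
        statistics.fmean(rng.choices(samples, k=n)) for _ in range(n_resamples)
    )
    # Cut off the lowest and highest tails to get the interval bounds.
    lo_idx = int((1 - level) / 2 * n_resamples)
    hi_idx = int((1 + level) / 2 * n_resamples) - 1
    return means[lo_idx], means[hi_idx]


# Made-up drug-response scores from repeated runs of a model (assumption).
scores = [0.62, 0.58, 0.71, 0.65, 0.60, 0.68, 0.63, 0.59, 0.66, 0.64]
low, high = bootstrap_ci(scores)
print(f"mean={statistics.fmean(scores):.3f}, 95% CI=({low:.3f}, {high:.3f})")
```

The extra cost Stevens describes is visible even here: the interval requires thousands of repeated calculations on top of the single estimate, which is why slower systems save it for the end of a batch.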

A third possibility would be the development of entirely new kinds of neural networks, says Stevens. “It’s fast enough that you could explore a large space of [neural] network designs,” he says. “It’s possible there are formulations that will perform better” than what scientists would devise by hand — another unknown unknown.

To Cerebras’s Feldman, it’s all evidence that “making things faster allows you to do things you couldn’t do before, and suddenly you get insights you didn’t have access to.” He makes the analogy to the rise of the dynamo and the electrification of factories. A hundred years ago, the dynamo merely improved existing things such as belt-driven machines. As decades went by, factories were completely reorganized around automated process control because of the dynamo. The nature of manufacturing changed.

The speed-up of research that can happen with the CS-1 may be just such a qualitative change in experimentation, a shift in how AI research happens whose implications have yet to be contemplated.
