The explosive-packed drone lay belly-up, like a dead fish, on a Kyiv street, its nose crushed and its rear propeller twisted. It had crashed without its deadly payload detonating, perhaps owing to a malfunction or because Ukrainian forces had shot it down.

Photos of the drone were quickly uploaded to social media, where weapons experts identified it as a KUB-BLA “loitering munition” made by Zala Aero, the dronemaking arm of Russian weapons maker Kalashnikov. Colloquially referred to as a “kamikaze drone,” it can fly autonomously to a specific area and then circle for up to 30 minutes.

The drone’s operator, remotely monitoring a video feed from the craft, can wait for enemy soldiers or a tank to appear below. In some cases, the drones are equipped with A.I. software that lets them hunt for particular kinds of targets based on images that have been fed into their onboard systems. In either case, once the enemy has been spotted and the operator has chosen to attack it, the drone nose-dives into its quarry and explodes.

The war in Ukraine has become a critical proving ground for increasingly sophisticated loitering munitions. That’s raised alarm bells among human rights campaigners and technologists who fear they represent the leading edge of a trend toward “killer robots” on the battlefield—weapons controlled by artificial intelligence that autonomously kill people without a human making the decision.

Militaries worldwide are keeping a close eye on the technology as it rapidly improves and its cost declines. The selling point is that small, semiautonomous drones are a fraction of the price of, say, a much larger Predator drone, which can cost tens of millions of dollars, and don’t require an experienced pilot to fly them by remote control. Infantry soldiers can, with just a little bit of training, easily deploy these new autonomous weapons.

“Predator drones are superexpensive, so countries are thinking, ‘Can I accomplish 98% of what I need with a much smaller, much less expensive drone?’ ” says Brandon Tseng, a former Navy SEAL who is cofounder and chief growth officer of U.S.-based Shield AI, a maker of small reconnaissance drones that use A.I. for navigation and image analysis.

But human rights groups and some computer scientists fear the technology could represent a grave new threat to civilians in conflict zones, or maybe even the entire human race.

“Right now, with loitering munitions, there is still a human operator making the targeting decision, but it is easy to remove that. And the big danger is that without clear regulation, there is no clarity on where the red lines are,” says Verity Coyle, senior adviser to Amnesty International, a participant in the Stop Killer Robots campaign.

The global market for A.I.-enabled lethal weapons of all kinds is growing quickly, from nearly $12 billion this year to an expected $30 billion by the end of the decade, according to Allied Market Research. In the U.S. alone, annual spending on loitering munitions, totaling about $580 million today, will rise to $1 billion by the end of the decade, Grand View Research said.

Dagan Lev Ari, the international sales and marketing director for UVision, an Israeli defense company that makes loitering munitions, says demand had been inching up until 2020, when war broke out between Armenia and Azerbaijan. In that conflict, Azerbaijan used advanced drones and loitering munitions to decimate Armenia’s larger arsenal of tanks and artillery, helping it achieve a decisive victory.

That got many countries interested, Lev Ari says. It also helps that the U.S. has begun major purchases, including UVision’s Hero family of kamikaze drones, as well as the Switchblade, made by rival U.S. firm AeroVironment. The Ukraine war has further accelerated demand, Lev Ari adds. “Suddenly, people see that a war in Europe is possible, and defense budgets are increasing,” he says.

Although less expensive than certain weapons, loitering munitions are not cheap. For example, each Switchblade costs as much as $70,000, after the launch and control systems plus munitions are factored in, according to some reports.

The U.S. is said to be sending 100 Switchblades to Ukraine. They would supplement that country’s existing fleet of Turkish-made Bayraktar TB2 drones, which can take off, land, and cruise autonomously, but need a human operator to find targets and give the order to drop the missiles or bombs they carry.

Loitering munitions aren’t entirely new. More primitive versions have been around since the 1960s, starting with a winged missile designed to fly to a specific area and search for the radar signature of an enemy antiaircraft system. What’s different today is that the technology is far more sophisticated and accurate.

In theory, A.I.-enabled weapons systems may be able to reduce civilian war casualties. Computers can process information faster than humans, and they are not affected by the physiological and emotional stress of combat. They might also be better at determining, in the heat of battle, whether the shape suddenly appearing from behind a house is an enemy soldier or a child.

But in practice, human rights campaigners and many A.I. researchers warn, today’s machine-learning–based algorithms can’t be trusted with the most consequential decision anyone will ever face: whether to take a human life. Image recognition software, while equaling human abilities in some tests, falls far short in many real-world situations—such as rainy or snowy conditions, or dealing with stark contrasts between light and shadow.

Such software can also make strange mistakes that humans never would. In one experiment, researchers managed to trick an A.I. system into thinking that a turtle was actually a rifle by subtly altering the pattern of pixels in the image.

Even if target identification systems were completely accurate, an autonomous weapon would still pose a serious danger unless it were coupled with a nuanced understanding of the entire battlefield. For instance, the A.I. system may accurately identify an enemy tank, but not understand that it’s parked next to a kindergarten, and so should not be attacked for fear of killing civilians.

Some supporters of a ban on autonomous weapons have evoked the danger of “slaughterbots,” swarms of small, relatively inexpensive drones, configured either to drop an antipersonnel grenade or as loitering munitions. Such swarms could, in theory, be used to kill everyone in a certain area, to commit genocide by killing everyone with certain ethnic features, or even to assassinate specific individuals using facial recognition.

Max Tegmark, a physics professor at MIT and cofounder of the Future of Life Institute, which seeks to address “existential risks” to humanity, says swarms of slaughterbots would be a kind of “poor man’s weapon of mass destruction.” Because such autonomous weapons could destabilize the existing world order, he hopes that powerful nations—such as the U.S. and Russia—that have been pursuing other kinds of A.I.-enabled weapons, from robotic submarines to autonomous fighter jets, may at least agree to ban these slaughterbot drones and loitering munitions.

But so far, efforts at the United Nations to enact a restriction on the development and sale of lethal autonomous weapons have foundered. A UN committee has spent more than eight years debating what, if anything, to do about such weapons and has yet to reach any agreement.

Although as many as 66 countries now favor a ban, the committee operates by consensus, and the U.S., the U.K., Russia, Israel, and India oppose any restrictions. China, which is also developing A.I.-enabled weapons, has said it supports a ban, but absent a treaty, will not unilaterally forgo them.

As for the dystopian future of slaughterbots, companies building loitering munitions and other A.I.-enabled weapons say they are meant to enhance human capabilities on the battlefield, not replace them. “We don’t want the munition to attack by itself,” Lev Ari says, although he acknowledges that his company is adding A.I.-based target recognition to its weapons that would increase their autonomy. “That is to assist you in making the necessary decision,” he says.

Lev Ari points out that even if the munition is able to find a target, say, an enemy tank, it doesn’t mean that it is the best target to strike. “That particular tank might be inoperable, while another nearby may be more of a threat,” he says.

Noel Sharkey, emeritus professor of computer science at the University of Sheffield in the U.K., who is also a spokesperson for the Stop Killer Robots campaign, says automation is speeding the pace of battle to the point that humans can’t respond effectively without A.I. helping them identify targets. And inevitably one A.I. innovation is driving the demand for more, in a sort of arms race. Says Sharkey, “Therein lies the path to a massive humanitarian disaster.”

Deadly tech

A.I.-enabled weapons are already here—but most are unable to select and attack targets without a human’s approval. These are some examples.

Orca

The U.S. Navy is working with Boeing on a 51-foot-long submersible called the Orca. The goal is for it to navigate autonomously for up to 6,500 nautical miles, using sonar to detect enemy vessels and underwater mines. While the initial version will be unarmed, the Navy has suggested that a later one will be able to fire torpedoes.

Kargu 2

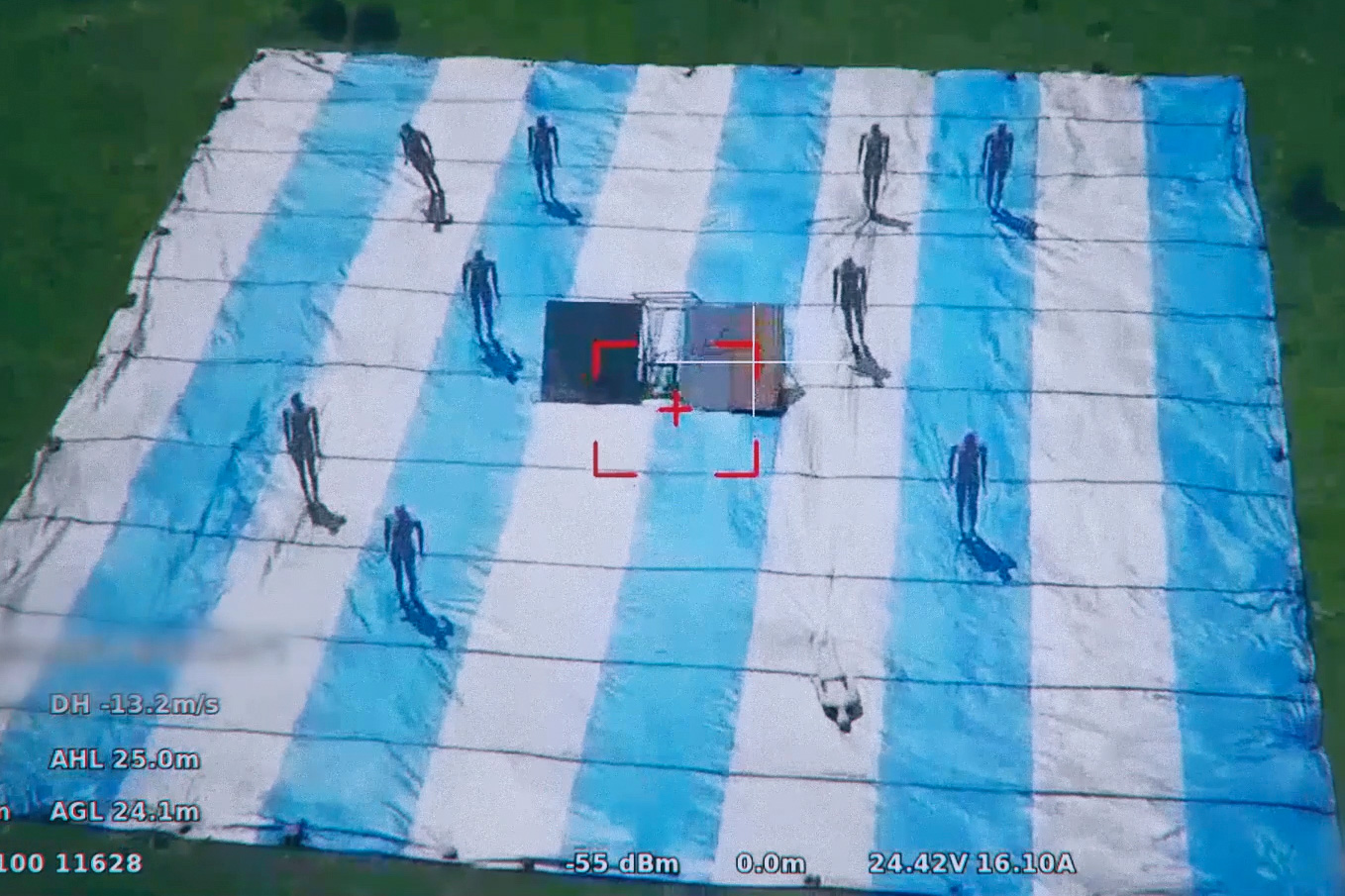

This small quadcopter by Turkish company STM made headlines after the United Nations concluded that one had autonomously attacked forces affiliated with a Libyan warlord in 2020. It was said to be the first time a kamikaze drone had selected a target on its own. But STM denies its drone is capable of doing so.

SGR-A1

This autonomous machine gun, developed by South Korea’s Hanwha Aerospace and Korea University, is designed to help South Korea defend its border with North Korea. According to a news account, it uses thermal and infrared imaging to detect people near the border. If the target doesn’t speak a predesignated password, the gun can sound an alarm or fire either rubber or lethal bullets.

KUB-BLA

Russia is using this small loitering munition in Ukraine. According to its manufacturer, Zala Aero, the drone’s operator can upload a target image to the system before launch. The aircraft can then autonomously locate similar targets on the battlefield.

Loyal Wingman

Produced by Boeing, this 38-foot-long drone autonomously accompanies crewed fighter jets and other aircraft to provide intelligence and surveillance, as well as warn of incoming missiles and other threats.

The video feed from STM’s Kargu 2 drone during a test.

A version of this article appears in the April/May 2022 issue of Fortune with the headline, “A.I. goes to war.”
