Back in 1958, Fortune science writer George A. W. Boehm was one of the first journalists to write about game theory and other developments in “new math.” His article, “The new uses of the abstract,” featured a brief bio of John Nash, the Nobel Laureate in Economics and subject of the movie A Beautiful Mind, long before the world knew of his struggles with mental illness.

Never before have so many people applied such abstract mathematics to so great a variety of problems. To meet the demands of industry, technology, and other sciences, mathematicians have had to invent new branches of mathematics and expand old ones. They have built a superstructure of fresh ideas that people trained in the classical branches of the subject would hardly recognize as mathematics at all.

Applied mathematicians have been grappling successfully with the world’s problems at a time, curiously enough, when pure mathematicians seem almost to have lost touch with the real world. Mathematics has always been abstract, but as Fortune reported last month, pure mathematicians are pushing abstraction to new limits. To them mathematics is an art they pursue for art’s sake, and they don’t much care whether it will ever have any practical use.

Yet the very abstractness of mathematics makes it useful. By applying its concepts to worldly problems the mathematician can often brush away the obscuring details and reveal simple patterns. Celestial mechanics, for example, enables astronomers to calculate the positions of the planets at any time in the past or future and to predict the comings and goings of comets. Now this ancient and abstruse branch of mathematics has suddenly become impressively practical for calculating orbits of earth satellites.

Even mathematical puzzles may have important applications. Mathematicians are still trying to find a general rule for calculating the number of ways a particle can travel from one corner of a rectangular net to another corner without crossing its own path. When they solve this seemingly simple problem, they will be able to tell chemists something about the buildup of the long-chain molecules of polymers.

Mathematicians who are interested in down-to-earth problems have learned to solve many that were beyond the scope of mathematics only a decade or two ago. They have developed new statistical methods for controlling quality in high-speed industrial mass production. They have laid foundations for Operations Research techniques that businessmen use to schedule production and distribution. They have created an elaborate theory of “information” that enables communications engineers to evaluate precisely telephone, radio, and television circuits. They have grappled with the complexities of human behavior through game theory, which applies to military and business strategy alike. They have analyzed the design of automatic controls for such complicated systems as factory production lines and supersonic aircraft. Now they are ready to solve many problems of space travel, from guidance and navigation to flight dynamics of missiles beyond the earth’s atmosphere.

Mathematicians have barely begun to turn their attention to the biological and social sciences, yet these once purely descriptive sciences are already taking on a new flavor of mathematical precision. Biologists are starting to apply information theory to inheritance. Sociologists are using sophisticated modern statistics to control their sampling. The bond between mathematics and the life sciences has been strengthened by the emergence of a whole group of applied mathematics specialties, such as biometrics, psychometrics, and econometrics.

Now that they have electronic computers, mathematicians are solving problems they would not have dared tackle a few years ago. In a matter of minutes they can get an answer that previously would have required months or even years of calculation. In designing computers and programing them to carry out instructions, furthermore, mathematicians have had to develop new techniques. While computers have as yet contributed little to pure mathematical theory, they have been used to test certain relationships among numbers. It now seems possible that a computer someday will discover and prove a brand-new mathematical theorem.

The unprecedented growth of U.S. mathematics, pure and applied, has caused an acute shortage of good mathematicians. Supplying this demand is a knotty problem. Mathematicians need more training than ever before; yet they can’t afford to spend more years in school, for mathematicians are generally most creative when very young. A whole new concept of mathematical education, starting as early as the ninth grade, may offer the only escape from this dilemma.

Convenience of the outlandish

The applied mathematician must be a creative man. For applied mathematics is more than mere problem solving. Its primary goal is finding new mathematical approaches applicable to a wide range of problems. The same differential equation, for example, may describe the scattering of neutrons by atomic nuclei and the propagation of radio waves through the ionosphere. The same topological network may be a mathematical model of wires carrying current in an electric circuit and of gossips spreading rumors at a tea party. Because applied mathematics is inextricably tied to the problems it solves, the applied mathematician must be familiar with at least one other field—e.g., aerodynamics, electronics, or genetics.

The pure mathematician judges his work largely by aesthetic standards; the applied mathematician is a pragmatist. His job is to make abstract mathematical models of the real world, and if they work, he is satisfied. Often his abstractions are outlandishly farfetched. He may, for example, consider the sun as a mass concentrated at a point of zero volume, or he may treat it as a perfectly round and homogeneous sphere. Either model is acceptable if it leads to predictions that jibe with experiment and observation.

This matter-of-fact attitude helps to explain the radical changes in the long-established field of probability theory. Italian and French mathematicians broached the subject about three centuries ago to analyze betting odds for dice. Since then philosophers interested in mathematics have been seriously concerned about the nature of a mysterious “agency of chance.” Working mathematicians, however, don’t worry about the philosophic notion of chance. They consider probability as an abstract and undefined property, much as physicists consider mass or energy. In so doing, mathematicians have extended the techniques of probability theory to many problems that do not obviously involve the element of chance.

Pioneers in new fields of applied mathematics

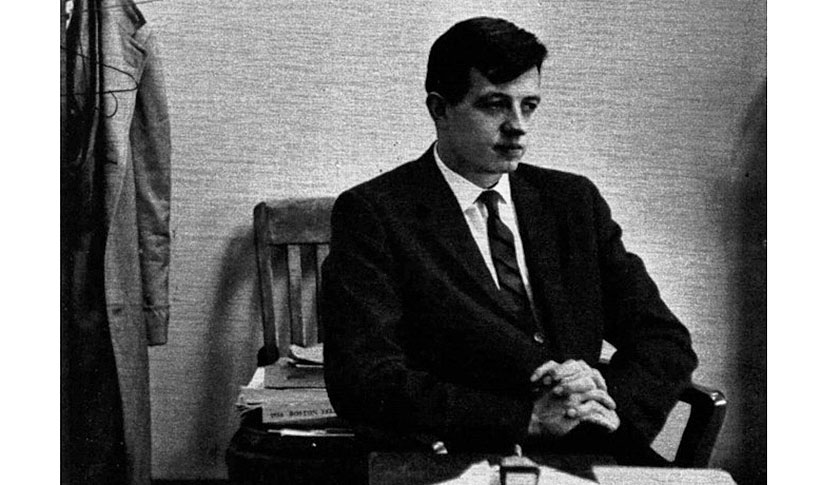

John Nash

John Nash just turned thirty. Nash has already made a reputation as a brilliant mathematician who is eager to tackle the most difficult problems. He is one of the few young mathematicians who have done important work in both pure and applied mathematics. While an undergraduate at Carnegie Tech, he formulated some of the basic concepts of modern game theory. Shortly after, he made original contributions to the highly abstract field of algebraic geometry. Later he developed some new theorems about certain non-linear differential equations that are important in pure and applied mathematics. He is now an associate professor at M.I.T. and is looking into quantum theory. He also applies mathematics to one of his hobbies: stock-market predictions.

Oswald Veblen

Oswald Veblen, still a first-rate mathematician at seventy-eight, picked the original faculty, including Albert Einstein and John von Neumann, for the Institute for Advanced Study in Princeton. Unlike his uncle Thorstein, the cantankerous sociologist, Oswald Veblen is mild-mannered. But when he wants his way, colleagues say, he manages to get it.

Richard Courant

A genius for raising funds has helped Courant, seventy, build up New York University’s Institute of Mathematical Sciences into the nation’s outstanding center of applied mathematical analysis. Until 1933 he headed the then world-famous applied-mathematics department of the University of Göttingen, and he has modeled the N.Y.U. center on it.

* * *

Probability today is almost like a branch of geometry. Each outcome of a particular experiment is treated as the location of a point on a line. And each repetition of the experiment is the coordinate of the point in another dimension. The probability of an outcome is a measure very much like the geometric measure of volume. Many problems in probability boil down to a geometric analysis of points scattered throughout a space of many dimensions.

One of the most fertile topics of modern probability theory is the so-called “random walk.” A simple illustration is the gambler’s ruin problem, in which two men play a game until one of them is bankrupt. If one starts with $100 and the other with $200 and they play for $1 a game, the progress of their gambling can be graphed as a point on a line 300 units (i.e., dollars) long. The point jumps one unit, right or left, each time the game is played, and when it reaches either end of the line, one gambler is broke. The problem is to calculate how long the game is likely to last and what chance each gambler has of winning.
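For readers who want to check the numbers, here is a minimal sketch of the $100-versus-$200 game. It assumes each $1 game is a fair coin toss, which the article does not state. Under that assumption the standard results are that a player's chance of winning everything equals his share of the combined capital, and that the expected number of games is the product of the two stakes. The function names are illustrative, and the simulation uses smaller stakes only to keep the run short.

```python
import random

def ruin_exact(a, b):
    """Fair $1 game with stakes a and b.

    Returns (chance the first player wins everything, expected number of games).
    """
    return a / (a + b), a * b

def ruin_simulated(a, b, trials, seed=0):
    """Estimate the first player's chance of winning by replaying the walk."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        position = a  # first player's current capital
        while 0 < position < a + b:
            position += 1 if rng.random() < 0.5 else -1
        wins += position == a + b
    return wins / trials

p_win, mean_games = ruin_exact(100, 200)
estimate = ruin_simulated(10, 20, trials=2000)  # same 1:2 ratio, shorter games
print(p_win, mean_games, estimate)
```

On these assumptions the man who starts with $100 wins one time in three, and the game lasts 20,000 plays on average.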

Mathematicians have recently discovered some surprising facts about such games. When both players have unlimited capital and the game can go on indefinitely, the lead tends not to change hands nearly so often as most people would guess. In a game where both players have an equal chance of winning—such as matching pennies—after 20,000 plays it is about eighty-eight times as likely that the winner has led all the time as that the two players have shared the lead equally. No matter how long the game lasts, it is more likely that one player has led from the beginning than that the lead has changed hands any given number of times.
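The figure of eighty-eight can be reproduced under one reading of the claim. The sketch below assumes a fair game and uses the discrete arc-sine law from the standard theory of coin tossing: the chance that a given player is ahead for 2k of 2n plays is u(2k) × u(2n − 2k), where u(2m) = C(2m, m) / 2^(2m). It compares "ahead for all 2n plays" with "ahead for exactly half of them." The function name and this interpretation are assumptions, not taken from the article.

```python
from fractions import Fraction
from math import comb

def lead_ratio(plays):
    """P(one given player leads throughout) / P(the lead time is split evenly).

    `plays` must be divisible by 4 so that half the plays is an even number.
    """
    n = plays // 2  # plays = 2n
    # u(2n) * u(0) divided by u(n) * u(n); the powers of two cancel exactly.
    return float(Fraction(comb(2 * n, n), comb(n, n // 2) ** 2))

ratio = lead_ratio(20_000)
print(round(ratio, 1))
```

For large n the ratio behaves like half the square root of π·n, so it keeps growing as the game gets longer.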

The random-walk abstraction is applicable to a great many physical situations. Some clearly involve chance—e.g., diffusion of gases, flow of automobile traffic, spread of rumors, progress of epidemic disease. The technique has even been applied to show that after the last glacial period seed-carrying birds must have helped re-establish the oak forests in the northern parts of the British Isles. But some modern random-walk problems have no obvious connection with chance. In a complicated electrical network, for example, if the voltages at the terminals are fixed, the voltages at various points inside the circuit can be calculated by treating the whole circuit as a sort of two-dimensional gambler’s ruin game.

Risk versus gain

Mathematical statistics, the principal offshoot of probability theory, is changing just as radically as probability theory itself. Classical statistics has acted mainly as a tribunal, warning its users against drawing risky conclusions. The judgments it hands down are always somewhat equivocal, such as: “It is 98 per cent certain that drug A is at least twice as potent as drug B.” But what if drug A is actually only half as potent? Classical statistics admits this possibility, but it does not evaluate the consequences. Modern statisticians have gone a step further with a new set of ideas known collectively as decision theory. “We now try to provide a guide to actions that must be taken under conditions of uncertainty,” explains Herbert Robbins of Columbia. “The aim is to minimize the loss due to our ignorance of the true state of nature. In fact, from the viewpoint of game theory, statistical inference becomes the best strategy for playing the game called science.”

The new approach is illustrated by the following example. A philanthropist offers to flip a coin once and let you call “heads” or “tails.” If you guess right, he will pay you $100. You notice the coin is so badly bent and battered that it is much more likely to land on one side than the other. But you can’t decide which side the coin favors. The philanthropist is willing to let you test the coin with trial flips, but he insists you pay him $1 for each experiment. How many trial flips should you buy before you make up your mind? The answer, of course, depends on how the trials turn out. If the coin lands heads up the first five times, you might conclude that it is almost certainly biased in favor of heads. But if you get three heads and two tails, you would certainly ask to experiment further.

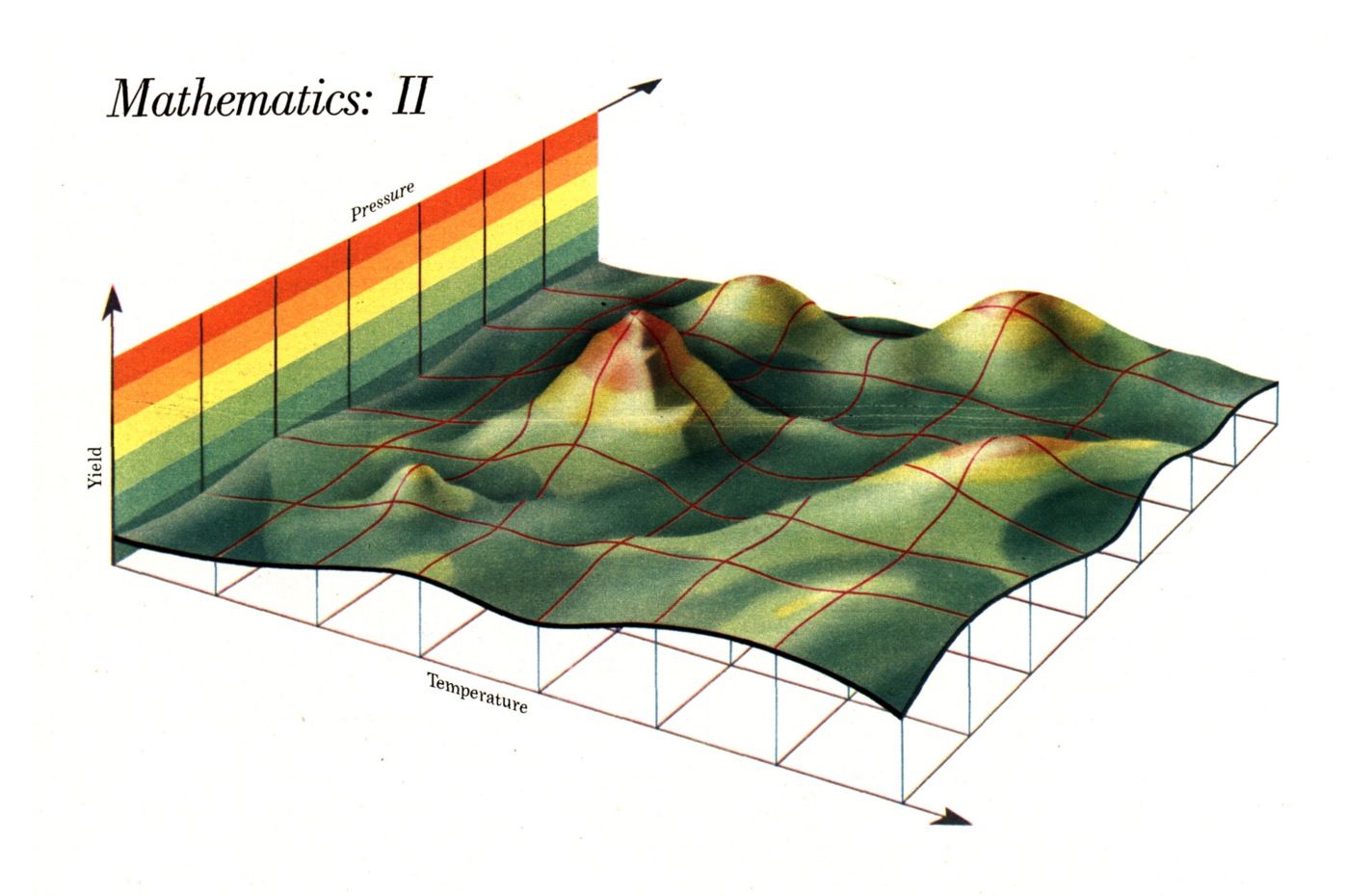

Industry faces this kind of problem regularly. A manufacturer with a new product tests it before deciding whether to put it on the market. The more he tests, the surer he will be that his decision will be right. But tests cost money, and they take time. Now modern statistics can help him balance risk against gain and decide how long to continue testing. It can also help him design and carry out experiments. New methods involving a great deal of multidimensional geometry can point out how products and industrial processes can be improved. A statistician can often apply these methods to tune up a full-scale industrial plant without interrupting production. (For an example see the diagram on page 124.)

Classical statistics has been extended in another way. One of the latest developments is “non-parametric inference,” a way of drawing conclusions about things that can be sorted according to size, longevity, dollar value, or any other graduated quality. What matters is the size of the statistical sample and the ranking of any particular object in that sample. It is not actually necessary to measure any of the objects, so long as they can be compared. It is possible to say, for instance, that if the sample consists of 473 objects, it is 99 per cent certain that only 1 per cent of all objects of this sort will be larger than the largest object in the sample. It makes no difference what the objects are—people, automobiles, ears of corn, or numbers drawn out of a hat. And the statement is still true if instead of largeness you consider smallness, intelligence, cruising speed, or any other relevant quality.
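The 473-object statement can be checked with one line of arithmetic, assuming the objects are independent draws from a continuous distribution (the article does not spell out its assumptions). The largest of n draws falls below the 99th percentile only if every draw does, which happens with probability 0.99^n. The function name is illustrative.

```python
def confidence_largest(n, coverage):
    """Chance that the largest of n independent draws exceeds the `coverage` quantile.

    When it does, at most a fraction 1 - coverage of the population is larger.
    """
    return 1.0 - coverage ** n

conf_473 = confidence_largest(473, 0.99)
print(round(conf_473, 4))
```

Nothing about the shape of the distribution enters the calculation, which is why the objects can be people, automobiles, or ears of corn.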

In practical application, non-parametric inference is being used to test batches of light bulbs. By burning a sample of sixty-three bulbs, for example, the manufacturer can conclude that 90 per cent of all the bulbs in the batch will almost certainly (99 chances out of 100) have a longer life than the second bulb to burn out during the test.
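The light-bulb figure follows from the same kind of counting, again assuming the sixty-three bulbs are an independent random sample from the batch. The claim fails only if fewer than two of the sampled bulbs belong to the shortest-lived 10 per cent of the batch, which is a binomial probability. The function name is an illustrative choice.

```python
from math import comb

def confidence_kth_failure(n, k, coverage):
    """Chance that at least `coverage` of the batch outlasts the k-th failure among n bulbs."""
    p = 1.0 - coverage  # chance one bulb is in the shortest-lived tail
    # The claim fails when fewer than k sampled bulbs fall in that tail.
    fail = sum(comb(n, j) * p**j * (1.0 - p) ** (n - j) for j in range(k))
    return 1.0 - fail

conf_63 = confidence_kth_failure(63, 2, 0.90)
print(round(conf_63, 4))
```

Under these assumptions the confidence comes out just under 99 per cent, in line with the article's "99 chances out of 100."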

One of the most fascinating recent developments in applied mathematics is game theory, another offshoot of probability theory. From a mathematical viewpoint, game theory is not particularly abstruse; many mathematicians, indeed, consider it shallow. But it is exciting because it has given mathematicians an analytic approach to human behavior.

Game theory is basically a mathematical description of competition among people or such groups of people as armies, corporations, or bridge partnerships. In theory, the players know all the possible outcomes of the competition and have a firm idea of what each outcome is worth to them. They are aware of all their possible strategies and those of their opponents. And invariably they behave “rationally” (though mathematicians are not sure just how to define “rational” behavior). Obviously, game theory represents a high degree of abstraction; people are never so purposeful and well informed, even in as circumscribed a competition as a game of chess. Yet the abstraction of man is valid to the extent that game theory is proving useful in analyzing business and military situations.

When it was first developed in the Twenties, chiefly by Emile Borel in France and John von Neumann in Germany, game theory was limited to the simplest forms of competition. As late as 1944 the definitive book on the subject (Theory of Games and Economic Behavior by von Neumann and Princeton economist Oskar Morgenstern) drew many of its illustrative examples from a form of one-card poker with limited betting between two people. Now, however, the strategies of two-person, zero-sum games (in which one player gains what his opponent loses) have been quite thoroughly analyzed. And game theorists have pushed on to more complex types of competition, which are generally more true to life.

Early game theory left much to be desired when it assumed that every plan should be designed for play against an all-wise opponent who would find out the strategy and adopt his own most effective counterstrategy. In military terms, this amounted to the assumption that the enemy’s intelligence service was infallible. The game-theory solution was a randomly mixed strategy—one in which each move would be dictated by chance, say the roll of dice, so that the enemy could not possibly anticipate it. (For much the same reason the U.S. armed forces teach intelligence officers to estimate the enemy’s capabilities rather than his intentions.) Many mathematicians have felt that this approach is unrealistically cautious. Recently game theorists have worked out strategies that will take advantage of a careless or inexpert opponent without risking anything if he happens to play shrewdly.
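A randomly mixed strategy is easy to compute for the smallest case. The sketch below handles a two-person, zero-sum game in which each player has two moves and there is no saddle point, so neither player can safely use a single move. The row player picks his first move with the probability that makes his expected payoff the same whatever the opponent does. The payoff numbers are invented for illustration.

```python
from fractions import Fraction

def solve_2x2(a, b, c, d):
    """Row player's payoffs are [[a, b], [c, d]].

    Returns (probability of playing row 1, value of the game).
    Assumes the game has no saddle point, so the denominator is not zero.
    """
    denom = Fraction(a - b - c + d)
    p_row1 = (d - c) / denom
    value = (a * d - b * c) / denom
    return p_row1, value

pennies = solve_2x2(1, -1, -1, 1)   # matching pennies
lopsided = solve_2x2(2, -1, -1, 1)  # hypothetical payoffs
print(pennies, lopsided)
```

In matching pennies the answer is the familiar one: call heads or tails with equal probability, for an average gain of zero.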

The most difficult games to analyze mathematically are those in which the players are not strictly competing with one another. An example is a labor-management negotiation; both sides lose unless they reach an agreement. Another complicating factor is collusion among players—e.g., an agreement between two buyers not to bid against each other. Still another is payment of money outside the framework of the “game,” as when a large company holds a distributor in line by subsidizing him.

Who gets how much?

The biggest problem in analyzing such complex situations has been to find a mathematical procedure for distributing profits in such a way that “rational” players will be satisfied. One formula has been developed by Lloyd Shapley of the Rand Corporation. An outside arbitrator must decide the payments. The formula tells him how to give the players payments appropriate to the strength of their bargaining powers, and it also maximizes the total payment. There are obvious practical difficulties in applying Shapley’s “arbitration value.” In the first place, the payment, or value, each player receives can seldom be measured simply in dollars. Thus the arbitrator would have a hard time deciding on the proper distribution if the players were to lie about what they wanted to get from the game and how much they valued it.
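The article does not give the formula. One well-known formula due to Shapley, now usually called the Shapley value, pays each player his average contribution to the coalition, taken over every order in which the players might join. Whether this is exactly the "arbitration value" described here is an assumption. The three-player game and its coalition worths below are invented for illustration.

```python
from fractions import Fraction
from itertools import permutations

def shapley(players, worth):
    """Average marginal contribution of each player over all joining orders.

    `worth` maps every coalition (a frozenset of players) to what it can earn.
    """
    totals = {p: Fraction(0) for p in players}
    orders = list(permutations(players))
    for order in orders:
        coalition = frozenset()
        for p in order:
            joined = coalition | {p}
            totals[p] += worth[joined] - worth[coalition]
            coalition = joined
    return {p: t / len(orders) for p, t in totals.items()}

# Hypothetical worths: no one earns anything alone.
worth = {
    frozenset(): 0,
    frozenset("A"): 0, frozenset("B"): 0, frozenset("C"): 0,
    frozenset("AB"): 90, frozenset("AC"): 80, frozenset("BC"): 70,
    frozenset("ABC"): 120,
}
payments = shapley("ABC", worth)
print(payments)
```

The payments always add up to what the full coalition earns, so nothing is left undistributed.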

While game theory has already contributed a great deal to decision theory in modern statistics, practical applications to complex human situations have not been strikingly successful. The chief troubles seem to be that there are no objective mathematical ways to formulate “rational” behavior or to measure the value of a given outcome to a particular player. At the very least, however, game theory has got mathematicians interested in analyzing human affairs and has stimulated more economists and social scientists to study higher mathematics. Game theory may be a forerunner of still more penetrating mathematical approaches that will someday help man to interpret more accurately what he observes about human behavior.

Universal tool

The backbone of mathematics, pure as well as applied, is a conglomeration of techniques known as “analysis.” Analysis used to be virtually synonymous with the applications of differential and integral calculus. Modern analysts, however, use theorems and techniques from almost every other branch of mathematics, including topology, the theory of numbers, and abstract algebra.

In the last twenty or thirty years mathematical analysts have made rapid progress with differential equations, which serve as mathematical models for almost every physical phenomenon involving any sort of change. Today mathematicians know relatively simple routines for solving many types of differential equations on computers. But there are still no straightforward methods for solving most non-linear differential equations—the kind that usually crop up when large or abrupt changes occur. Typical are the equations that describe the aerodynamic shock waves produced when an airplane accelerates through the speed of sound.

Russian mathematicians have concentrated enormous effort on the theory of non-linear differential equations. One consequence is that the Russians are now ahead of the rest of the world in the study of automatic control, and this may account for much of their success with missiles.

It is in the field of analysis that electronic computers have made perhaps their most important contributions to applied mathematics. It still takes a skillful mathematician to set up a differential equation and interpret the solution. But in the final stages he can usually reduce the work to a numerical procedure—long and tedious, perhaps, but straightforward enough for a computer to carry out in a few minutes or at most a few hours. The very fact that computers are available makes it feasible to analyze mathematically a great many problems that used to be handled by various rules of thumb, and less accurately.

Mathematics of logic

Computers have also had some effects on pure mathematics. Faced with the problems of instructing computers what to do and how to do it, mathematicians have reopened an old and partly dormant field: Boolean algebra. This branch of mathematics reduces the rules of formal logic to algebraic form. Two of its axioms are startlingly different from the axioms of ordinary high-school algebra. In Boolean algebra a + a = a, and a × a = a. The reason becomes clear when a is interpreted as a statement, the plus sign as “or,” and the multiplication sign as “and.” Thus, for example, the addition axiom can be illustrated by: “(this dress is red) or (this dress is red) means (this dress is red).”
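The two axioms can be checked mechanically by reading a as a truth value, the plus sign as "or," and the multiplication sign as "and." The sketch also shows how rarely the same identities hold in ordinary arithmetic. The names are illustrative.

```python
def idempotent(values=(False, True)):
    """True if (a or a) == a and (a and a) == a for every truth value a."""
    return all((a or a) == a and (a and a) == a for a in values)

boolean_ok = idempotent()

# In ordinary arithmetic a + a = a only for 0, and a * a = a only for 0 and 1.
ordinary_sum = [a for a in range(-3, 4) if a + a == a]
ordinary_product = [a for a in range(-3, 4) if a * a == a]
print(boolean_ok, ordinary_sum, ordinary_product)
```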

Numerical analysis, a main part of the study of approximations, is another field that mathematicians have revived to program problems for computers. There is still a great deal of pure and fundamental mathematical research to be done on numerical errors that may arise through rounding off numbers. Computers are particularly liable to commit such errors, for there is a limit to the size of the numbers they can manipulate. If a machine gets a very long number, it has to drop the digits at the end and work with an approximation. While the approximation may be extremely close, the error may grow to be enormous if the number is multiplied by a large factor at a later stage of the problem. It is generally safe to assume that rounding off tends to even out in long arithmetic examples. In adding a long column of figures, for instance, you probably won’t go far wrong if you consider 44.23 simply as 44, and 517.61 as 518. But it is sheer superstition to suppose that rounding off cannot possibly build up a serious accumulation of errors. (It obviously would if all the numbers happened to end in .499.)
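A minimal sketch of the point about columns of figures: when the discarded fractions lean both ways the drift is small, but when every number ends in .499 the errors all point the same way and pile up. Exact fractions are used so that the only error measured is the rounding itself. The thousand-entry column is an invented example.

```python
from fractions import Fraction

def rounding_drift(values):
    """Sum of the rounded values minus the exact sum."""
    return sum(round(v) for v in values) - sum(values)

mixed = [Fraction("44.23"), Fraction("517.61")]  # the article's two figures
biased = [Fraction("10.499")] * 1000             # every entry ends in .499

drift_mixed = rounding_drift(mixed)
drift_biased = rounding_drift(biased)
print(float(drift_mixed), float(drift_biased))
```

Here the two mixed figures are off by 0.16 in total, while the thousand biased ones are off by 499.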

There are subtler pitfalls in certain more elaborate kinds of computation. In some typical computer problems involving matrices that are used to solve simultaneous equations, John Todd of Cal Tech has constructed seemingly simple numerical problems that a computer simply cannot cope with. In some cases the computer gets grossly inaccurate results; in others it can’t produce any answer at all. It is a challenge to numerical analysts to find ways to foresee this sort of trouble and then avoid it.
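The article does not say which matrices were involved. A standard textbook illustration of the same trouble is the Hilbert matrix, whose entries are 1/(i + j + 1); it is used below as an assumed stand-in rather than as the article's own example. The right-hand side is chosen so that the true answer is a column of ones, and the equations are solved by ordinary elimination in floating-point arithmetic.

```python
def hilbert(n):
    """n-by-n Hilbert matrix with entries 1 / (i + j + 1), stored as floats."""
    return [[1.0 / (i + j + 1) for j in range(n)] for i in range(n)]

def solve(a, b):
    """Gaussian elimination with partial pivoting, then back substitution."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x

def worst_error(n):
    """Largest deviation from the true solution, which is all ones."""
    h = hilbert(n)
    b = [sum(row) for row in h]
    return max(abs(v - 1.0) for v in solve(h, b))

print(worst_error(3), worst_error(12))
```

With three equations the answer is accurate to nearly full machine precision. With twelve, the same routine on the same kind of matrix loses most of its significant digits.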

Patterns in primes

Computers have as yet made few direct contributions to pure mathematics except in the field of number theory. Here the results have been inconclusive but interesting. D. H. Lehmer of the University of California has had a computer draw up a list of all the prime numbers less than 46,000,000. (A prime is a number that is exactly divisible only by itself or one—e.g., 2, 3, 17, 61, 1,021.) A study of the list confirms that prime numbers, at least up to 46,000,000, are distributed among other whole numbers according to a “law” worked out theoretically about a century ago. The law states that the number of primes less than any given large number, X, is approximately equal to X divided by the natural logarithm of X. (Actually, the approximation is consistently a little on the low side.) Lehmer’s list also tends to confirm conjectures about the distribution of twin primes—i.e., pairs of consecutive odd numbers both of which are primes, like 29 and 31, or 101 and 103. The number of twin primes less than X is roughly equal to X divided by the square of the natural logarithm of X.
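The comparison is easy to repeat on a small scale. The sketch below sieves the primes below one million, a limit chosen here only to keep the run short, and sets the counts beside the two formulas quoted above. The function and variable names are illustrative.

```python
from math import log

def sieve(limit):
    """Bytearray whose entry p is 1 when p is prime, for 0 <= p < limit."""
    flags = bytearray([1]) * limit
    flags[0:2] = b"\x00\x00"
    for p in range(2, int(limit ** 0.5) + 1):
        if flags[p]:
            # Cross out p*p, p*p + p, ... in one slice assignment.
            flags[p * p :: p] = bytearray(len(range(p * p, limit, p)))
    return flags

X = 1_000_000
flags = sieve(X)
prime_count = sum(flags)
twin_count = sum(1 for p in range(3, X - 2) if flags[p] and flags[p + 2])

prime_estimate = X / log(X)
twin_estimate = X / log(X) ** 2
print(prime_count, round(prime_estimate), twin_count, round(twin_estimate))
```

At this limit the formula for primes falls below the true count, as the article says it consistently does.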

Lehmer and H. S. Vandiver of the University of Texas have also used a computer to test a famous theorem that mathematicians the world over are still trying either to prove or disprove. Three hundred years ago the French mathematician Fermat stated that it is impossible to satisfy the following equation by substituting whole numbers (except zero) for all the letters if n is greater than 2:

$a^n + b^n = c^n$

Lehmer and Vandiver have sought to find a single exception. If they could, the theorem would be disproved. Fortunately they have not had to test every conceivable combination of numbers; it is sufficient to try substituting all prime numbers for n. And there are further short cuts. The number n, for example, must divide the numerator of at least one of a certain set of so-called “Bernoulli numbers”; otherwise it cannot satisfy the equation. (The Bernoulli numbers are irregular. The first is 1/6; the third, 1/30; the eleventh, 691/2,730; the thirteenth, 7/6; the seventeenth, 43,867/798; the nineteenth, 1,222,277/2,310. Numbers later in the series are enormous.)
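The Bernoulli short cut rests on Kummer's criterion, a standard result: Fermat's statement holds for every prime exponent n that divides none of the numerators of the Bernoulli numbers B_2, B_4, ..., B_(n-3). Only the remaining "irregular" primes need further testing. The sketch below uses the modern indexing of the Bernoulli numbers, which differs from the ordinal numbering in the text, and computes them exactly with fractions. The function names are illustrative.

```python
from fractions import Fraction
from math import comb

def bernoulli(limit):
    """Exact Bernoulli numbers B_0 .. B_limit (convention B_1 = -1/2)."""
    b = [Fraction(1)]
    for m in range(1, limit + 1):
        s = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-s / (m + 1))
    return b

def is_prime(n):
    return n > 1 and all(n % d for d in range(2, int(n ** 0.5) + 1))

def irregular_primes(limit):
    """Odd primes p < limit dividing the numerator of some B_2, B_4, ..., B_(p-3)."""
    b = bernoulli(limit)
    return [
        p for p in range(3, limit)
        if is_prime(p) and any(b[k].numerator % p == 0 for k in range(2, p - 2, 2))
    ]

b12 = bernoulli(12)[12]
suspects = irregular_primes(100)
print(b12, suspects)
```

Below 100 only three primes survive the screen, which shows how much work the short cut saves before the heavier tests begin.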

Lehmer and Vandiver have tested the Fermat theorem for all prime n’s up to 4,000, but they seem to be coming to a dead end. The Bernoulli numbers at this stage are nearly 10,000 digits long, and even a fast computer takes a full hour to test each n. The fact that a machine has failed to find an exception does not, of course, prove the Fermat theorem, although it does perhaps add a measure of assurance that the theorem is true.

But it is possible for a computer to produce a mathematical proof. Allen Newell of Rand Corporation and Herbert A. Simon of Carnegie Tech have worked out a program of instructions that tells a high-speed computer how to work out proofs of some elementary theorems in mathematical logic contained in Principia Mathematica, a three-volume treatise by Alfred North Whitehead and Bertrand Russell.

The Newell and Simon program is based on heuristic thinking—the kind of hunch-and-analogy approach that a creative human mind uses to simplify complicated problems. The computer is supplied with some basic axioms, and it stores away all theorems it has previously proved. When it is told to prove an unfamiliar theorem, it first tries to draw analogies and comparisons with the theorems it already knows. In many cases the computer produces a logical proof within a few minutes; in others it fails to produce any proof at all. It would conceivably be possible to program a computer to solve theorems with an algorithmic approach, a sure-fire, methodical procedure for exhausting all possibilities. But such a program might take years for the fastest computer to carry out.

Although most mathematicians scoff at the idea, Newell and Simon are confident that heuristic programing will soon enable computers to do truly creative mathematical work. They guess that within ten years a computer will discover and prove an important mathematical theorem that never occurred to any human mathematician.

Help wanted

But computers are not going to put mathematicians out of work. Quite to the contrary, computers have opened up so many new applications for mathematics that industrial job opportunities for mathematicians have more than doubled in the last five years. About one-fourth of the 250 people who are getting Ph.D.’s in mathematics this year are going into industry—chiefly the aircraft, electronics, communications, and petroleum companies. In 1946 only about one in nine Ph.D.’s took jobs in industry.

While most companies prefer mathematicians who have also had considerable background in physics or engineering, many companies are also eager to hire men who have concentrated on pure mathematics. Starting pay for a good young mathematician with a fresh Ph.D. now averages close to $10,000 a year in the aircraft industry, about double that of 1950 (and about double today’s starting pay in universities).

Still, a great deal of industrial mathematics is done by physicists and engineers who have switched to mathematics after graduation. And there is also room for people with bachelor’s and master’s degrees, particularly in programing computers to perform calculations.

Different companies use mathematicians in different ways. Some incorporate them in research teams along with engineers, physicists, metallurgists, and other scientists. But a growing number have set up special mathematics groups, which carry out their own research projects and also do a strictly limited amount of problem solving for other scientific departments.

The oldest and most illustrious industrial mathematics department was set up in 1930 by Bell Telephone Laboratories. It started with six or eight professional mathematicians and grew slowly until after the war. Then in ten years it doubled in size. Today the department has about thirty professional mathematicians, half of them with Ph.D.’s in mathematics, the rest with Ph.D.’s in other sciences. The department has made outstanding contributions to mathematics. Notable is information theory, which was developed during and after the war by Claude Shannon as a mathematical model for language and its communication.

Crisis in education

The demand for mathematicians of every sort is rapidly outstripping the capacity of the U.S. educational system. Swelling enrollments in mathematics courses are already beginning to tax college and university mathematics departments. At Princeton, for example, the mathematics majors have for years numbered only five to ten, but nineteen members of last year’s junior class elected to major in mathematics. To complicate matters further, the good college and university departments no longer require their professors to teach twelve to fifteen hours a week. So that the teachers can also do research, the average classroom time has been reduced to nine hours in most schools, and to less than six in some of the best universities. Yet the serious mathematics student now needs more training than ever before. If he wants a good job in industry or in a top university he must have a doctor’s degree; and if he wants to excel in research he should have a year or two of postdoctoral study.

There is a great deal to be mastered in modern mathematics, but surprisingly it is relatively easier to learn than most of the mathematics traditionally taught in high school and college, despite its abstractness and complexity. One change that would obviously help would be to start teaching the important modern concepts and techniques earlier. The way mathematics is taught now, complains John G. Kemeny of Dartmouth, “it is the only subject you can study for fourteen years [i.e., through sophomore calculus] without learning anything that’s been done since the year 1800.”

The Dartmouth plan

Some colleges are now making progress in modernizing their mathematics curricula. Several no longer require a special course in trigonometry. “We really don’t have to train everybody to be a surveyor,” explains one department head. Under the leadership of Kemeny, Dartmouth in the last five years has almost completely revised its undergraduate course. There are now, in fact, three separate courses of study in mathematics: one for mathematics majors, another for engineers and others who must have mathematical training, and a third for the liberal-arts students who want to make mathematics part of their cultural background.

The courses are amazingly popular. Ninety per cent of all Dartmouth students take at least one semester of mathematics, and more than 60 per cent finish a year of it (mathematics is an elective for most of them). Kemeny and two associates have written for one of their courses a remarkable textbook entitled Introduction to Finite Mathematics. Within a year after its publication in January, 1957, it was being used by about 100 colleges, in some cases just for mathematics courses especially designed for social-science majors. And several New York high schools have adopted the book for special sections of exceptional students.

Mathematics for children

The movement to teach more mathematics and teach it sooner has filtered down to the secondary-school level. The College Entrance Examination Board, through its commission on mathematics, has drawn up a program for modernizing secondary-school mathematics courses. The chief aim of the commission, according to its executive director, Albert E. Meder, is to give students an appreciation of the true meaning of mathematics and some idea of modern developments. Algebra, he points out, is no longer a “disconnected mass of memorized tricks but a study of mathematical structure; geometry no longer a body of theorems arranged in a precise order that can be memorized without understanding.”

The College Board has the support of most leading mathematicians. About twenty of them are meeting with twenty high-school mathematics teachers this summer at Yale to write outlines of sample textbooks based partly on the College Board’s recommendations. This group, headed by E. G. Begle of Yale, plans to write the actual books within the next year so that teachers and commercial publishers will know how mathematicians think mathematics ought to be taught in high school.

Perhaps the most radical step in U.S. mathematical education has been taken by the University of Illinois’ experimental high school. There, under the guidance of a member of the university’s mathematics department, a professor of education, Max Beberman, has introduced a completely new mathematics curriculum. It starts with an informal axiomatic approach to arithmetic and algebra and proceeds through aspects of probability theory, set theory, number theory, complex numbers, mathematical induction, and analytic geometry. The approach reflects the rigor, abstractness, and generality of modern mathematics. To make room for some of the new concepts, Beberman and his advisers have had to reduce the amount of time spent drilling on such techniques as factoring algebraic expressions.

So far the experiment has been very stimulating to students—partly, of course, because of the very fact that the course is an experiment. In the college entrance examinations of 1957, the first group of students to complete four years of the Illinois course made some of the highest scores in the nation.

While twelve other high schools have now experimentally adopted the Illinois mathematics curriculum, it is not likely to be widely used for some time. The reason is that most high-school teachers have to be completely retrained to teach it. With Carnegie Foundation support, the University of Illinois has begun to train high-school teachers from many states to teach the new curriculum.

For many years it has been hard for a would-be teacher to learn what mathematics he needs to teach any serious high-school course. Professor George Polya of Stanford explains: “The mathematics department [of a university] offers them tough steak they cannot chew, and the school of education vapid soup with no meat in it.” The National Science Foundation has helped more than fifty colleges and universities set up institutes where high-school teachers can study mathematics for a summer or even a full academic year.

Opportunity ahead

However many mathematicians there may be, there will always be a need for more first-rate minds to create new mathematics. This will be true of applied mathematics as well as pure mathematics. For applied mathematics now presents enough of an intellectual challenge to attract even academic men who pride themselves on creating mathematics for its own sake. One young assistant professor, recently offered $16,000 by industry, is seriously thinking of abandoning his university career. He explains: “I think that the problems in applied mathematics would offer me just as much stimulation as more basic research.”

Whole new fields of mathematics are needed to cope with problems in other sciences and human affairs. Transportation engineers, for example, still lack a mathematical method to analyze the turbulence of four-lane highway traffic; and it may be years before they can apply precise mathematical reasoning to three-dimensional air traffic. Biologists have used almost no mathematics aside from statistics, but now some of them are seriously thinking of applying topology. This branch of mathematics, which deals with generalized shapes and disregards size, may be the most appropriate way to describe living cells with their enormous variations in size and shape. Neurophysiologists are looking for a new kind of algebra to represent thinking processes, which are by no means random, yet not entirely methodical.

There are still some remarkably simple questions that are teasing mathematicians. They have not yet found, for example, a general solution to the following problem: Given a road map of N folds, how many ways can you refold it? And when this is solved, there will be another puzzle, and another.