The doomsday forecasts have been building for years: AI will hollow out the white-collar workforce, destroy entry-level jobs, and create a permanent underclass of technologically displaced workers. Now, one of Silicon Valley’s most influential firms has published a detailed rebuttal saying, basically, don’t believe the hype.

In a new essay published Tuesday, Andreessen Horowitz General Partner David George declared that the vision of an “AI job apocalypse” is a “complete fantasy”: “unhelpful marketing, bad economics and worse history,” rooted in what the firm calls a logical error that economists have been debunking for more than a century.

The piece represents the most expansive version yet of a case the firm’s co-founders have been making publicly for months. Ben Horowitz made a version of the argument on the Invest Like the Best podcast earlier this year, pointing out that AI technologies have been advancing since at least 2012, when deep-learning results on the ImageNet benchmark transformed computer vision, and yet the catastrophic job destruction has not arrived.

The core argument: the lump-of-labor fallacy

The intellectual foundation of the a16z essay is a well-worn economic concept: the “lump-of-labor fallacy,” the mistaken belief that an economy has only a fixed amount of work to be done, so that anything (a machine, an AI model, even an immigrant) that does more of it necessarily leaves humans with less. “The AI Alarmist, ‘Permanent Underclass’ panic isn’t a convincing story,” George wrote. “It isn’t even a new story. It’s the ‘lump-of-labor’ fallacy, with updated branding.”

The problem, he argued, is that human wants and needs are not fixed. When a technology lowers the cost of some activity, people don’t simply stop wanting things; they find new things to want, creating new categories of work. The classic illustration is the great economist John Maynard Keynes’s famous prediction, nearly a century ago, that automation would produce a 15-hour work week. But people didn’t sit back and enjoy the surplus; they found new and different things to do.

George marshaled a sequence of historical examples to make the point. Farm mechanization eliminated much of the agricultural work that accounted for roughly a third of U.S. employment in the early 20th century, and yet displaced workers flowed into factories, offices, hospitals, and eventually the software industry, while farm output nearly tripled. Electrification didn’t destroy manufacturing jobs; it reorganized factories around new workflows, and labor productivity growth doubled for decades after its widespread adoption. And the spreadsheet, often cited as a job-killer for bookkeepers, actually led to an explosion in the number of financial analysts. “We lost ~1M bookkeepers and gained ~1.5M financial analysts,” he wrote.

At nearly the same time, across the country in New York, Apollo Global Management Chief Economist Torsten Slok pressed a similar argument, working to popularize the “Jevons Paradox”: the idea that when technology makes something cheaper, demand for it can surge enough to create jobs rather than destroy them. The release of Microsoft Excel is a perfect example, he wrote on May 7. “The bottom line is that rather than reducing the need for accountants, Excel dramatically lowered the cost of financial analysis, reporting and record-keeping, making these services accessible to a far broader range of businesses and use cases,” Slok wrote.

George also cited the Jevons Paradox: when the cost of a powerful input falls, the economy does not politely stand still. It does more. “When fossil fuels first made energy cheap and plentiful, we did more than put whalers and woodchoppers out of business; we invented plastics!” he wrote. Another Jevons citation came this week from Anthropic CEO Dario Amodei, who invoked it as his firm announced supposedly labor-destroying tools for Wall Street.

What the current data actually shows

Crucially, a16z doesn’t just argue from history and theory—it argues from the present. Citing a battery of recent academic research, the firm concludes that “the weight of the data does not support the doomer claim.”

- A National Bureau of Economic Research working paper found that “AI adoption has not yet led to meaningful changes in total employment.”

- A Federal Reserve Bank of Atlanta working paper, based on four surveys, found that more than 90% of firms estimated no employment impact from AI over the prior three years.

- A Census Bureau study found that AI-driven employment changes “remain modest,” with shifts split “nearly equally between increases and decreases.”

- The Yale Budget Lab reported in April that “the picture of AI’s impact on the labor market that emerges from our data is one that largely reflects stability.”

The one notable exception: Stanford researchers found that early-career workers aged 22–25 in the most AI-exposed occupations have experienced a 16% relative decline in employment since ChatGPT’s release in late 2022. Even here, a16z argues, the picture is more complex: “Before anyone concludes that ‘AI is killing entry-level jobs,’ however, it bears mentioning that these researchers also variously found an increase in entry-level roles where AI is augmentative (and an increase where AI has no impact at all).”

The opposition is credentialed and not going away

The a16z case is powerful—and it has serious, named critics who disagree with almost every premise.

Take economist Anton Korinek. If the quest for artificial general intelligence succeeds, he told The New York Times in February, “we are not looking at another Industrial Revolution” that ultimately rewards workers; rather, “labor itself becomes optional for the economy.”

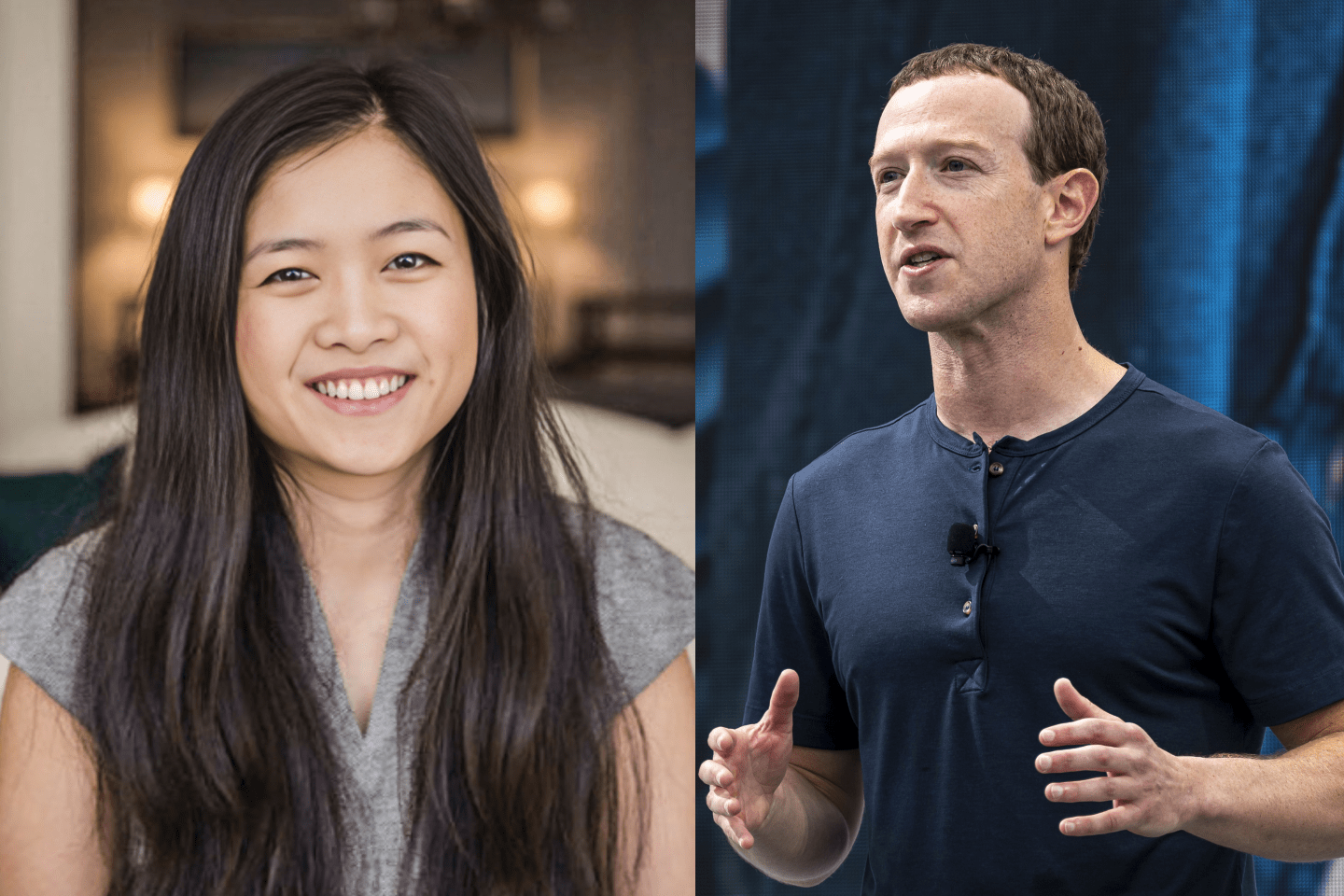

The Carnegie Endowment for International Peace published a detailed taxonomy of the debate in April, categorizing participants into three camps: the “alarmed,” the “patient,” and the “excited.” A16z squarely occupies the excited camp, with co-founder Marc Andreessen identified as one of the most excitable, but the Carnegie analysis shows why the debate is harder to resolve than either side admits. The alarmed and excited aren’t simply arguing about the same facts—they are making different predictions about the speed of AI progress, the ability of firms to adopt it, and whether new jobs will emerge fast enough to absorb displaced workers.

The ‘pace’ problem that history can’t solve

What separates this moment from prior technological transitions, critics argue, is velocity. The alarmed, as Carnegie documents, believe that scaling laws, massive investment, and the potential for AI-accelerated AI research will produce capability jumps for which history offers no template. OpenAI’s GDPval benchmark, which tests AI systems on complex workforce tasks that take humans an average of seven hours to complete, found that the newest AI models outperformed human workers on a subset of 220 tasks, with expert judges preferring the AI responses 83% of the time.

The “patient” camp, represented by Princeton computer scientists Arvind Narayanan and Sayash Kapoor, Nobel laureate Daron Acemoglu, and cognitive scientist Gary Marcus, argues that capability gaps, hallucination problems, and the sheer organizational difficulty of integrating AI into enterprises will slow adoption to a pace measured in decades, not years. Scale AI’s Remote Labor Index, which tests models on the kind of multi-day, complex tasks a human freelancer might take on, found that the best AI systems could complete just 2.5% of tasks at a level matching the human gold standard as of March 2026, a share that crept up only marginally within a few months.

The economist David Autor, one of the most careful students of technological displacement, takes a conditionally optimistic position that is more nuanced than either camp’s: “AI, if used well, can assist with restoring the middle-skill, middle-class heart of the US labor market,” though he is explicit that “this is not a forecast but an argument about what is possible.”

The conflict-of-interest question

The a16z argument is, of course, self-interested. Andreessen Horowitz has invested billions across the AI stack, from foundation-model companies to AI-native startups seeking to disrupt legacy industries. A cultural and political environment in which AI is widely perceived as a job-killer creates pressure for regulation, slows enterprise adoption, and clouds the consumer sentiment on which its portfolio companies depend.

That conflict doesn’t make the argument wrong, though. The historical record and the cited academic papers are real. And as Carnegie notes, even survey data on economists shows that a majority of academics expect AI to bring only modest deviations from historical economic trends, even as they acknowledge the possibility of severe disruption under faster-than-expected capability scenarios.

What a16z is less forthcoming about is the asymmetry of harm if it’s wrong. If the optimists are right, the labor market reorganizes itself over time and workers find new roles, as they always have. If the alarmists are right and policy has been shaped by bullish venture-capital certainty, millions of displaced workers will face a safety net and a retraining infrastructure that were never built to absorb them. Paradoxically, the Yale Budget Lab recently noted that the very productivity gains Wall Street appears to be pricing in would displace many millions of workers, simultaneously helping to solve the $39 trillion national debt crisis and making it worse.

A Quinnipiac survey released in March found that 70% of Americans now believe AI will lead to fewer job opportunities for humans, up from 56% the year before. Whether that fear reflects bad economics and worse history—or a genuine intuition about something different this time—is the question that no historical analogy can fully answer.

a16z did not respond to a request for comment.