Google’s new chatbot, Bard, is part of a revolutionary wave of artificial intelligence (A.I.) tools that can rapidly generate anything from an essay on William Shakespeare to rap lyrics in the style of DMX. But Bard and all of its chatbot peers still have at least one serious problem: they sometimes make stuff up.

The latest evidence of this unwelcome tendency came during CBS’ 60 Minutes on Sunday. During the report, Bard confidently declared that The Inflation Wars: A Modern History by Peter Temin “provides a history of inflation in the United States” and discusses the policies that have been used to control it. The problem is that the book doesn’t exist.

It’s an interesting lie, because it could be true. Temin is an accomplished MIT economist who studies inflation and has written over a dozen books on economics; he just never wrote one called The Inflation Wars: A Modern History. Bard “hallucinated” that title, as well as the names and summaries of a whole list of other economics books, in response to a question about inflation.

It’s not the first public error the chatbot has made. When Google unveiled Bard in February to counter OpenAI’s rival ChatGPT, the chatbot claimed in a public demonstration that the James Webb Space Telescope was the first to capture an image of a planet outside our solar system. In fact, the aptly named Very Large Telescope in Chile had accomplished that feat in 2004.

Chatbots like Bard and ChatGPT run on large language models, or LLMs, which are trained on billions of data points to predict the next word in a string of text. This approach, known as generative A.I., tends to produce hallucinations, in which the models generate text that appears plausible yet isn’t factual. But with all the work being done on LLMs, are these hallucinations still common?

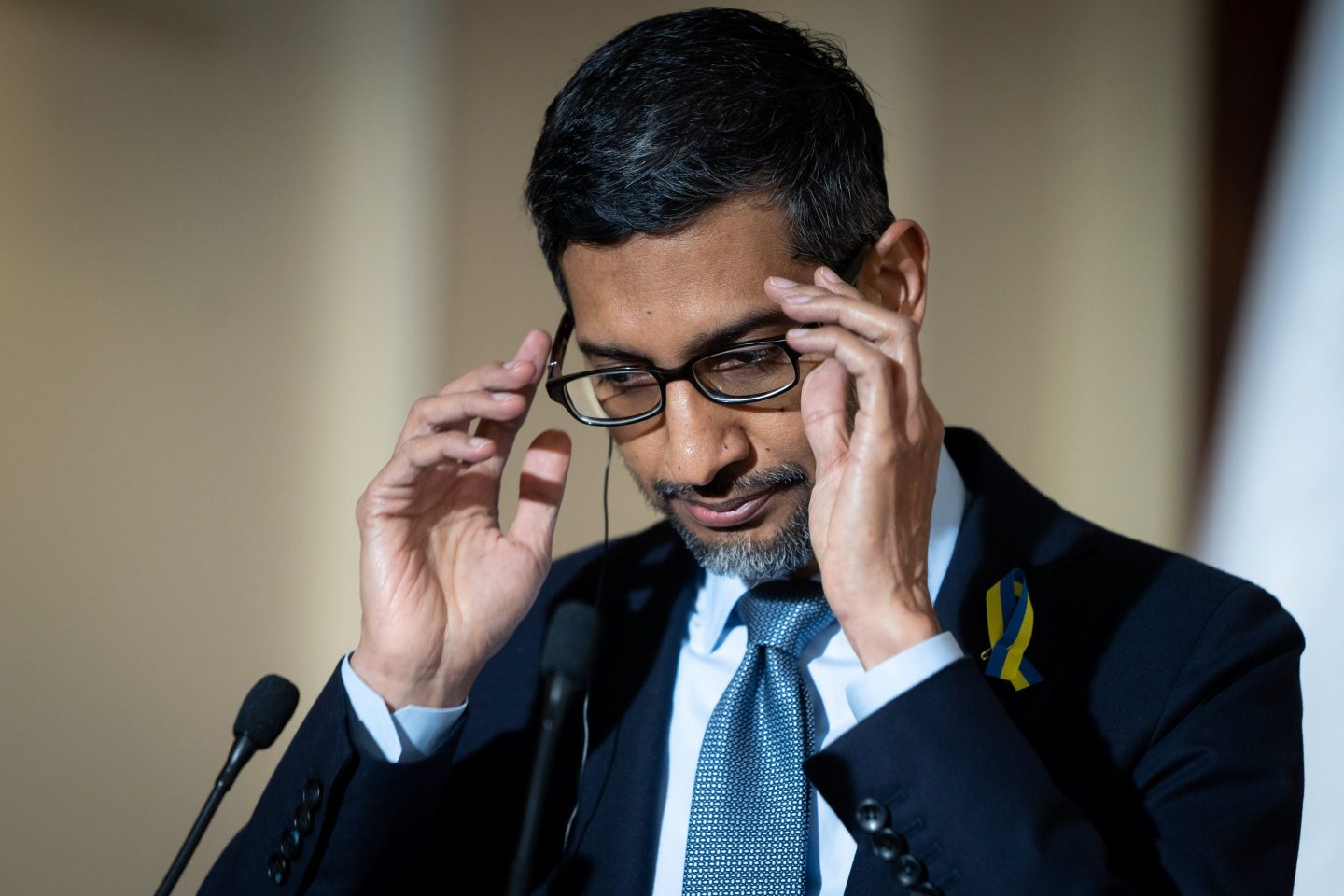

“Yes,” Google CEO Sundar Pichai admitted in his 60 Minutes interview Sunday, saying they’re “expected.” “No one in the field has yet solved the hallucination problems. All models do have this as an issue.”

When asked if the hallucination problem will be solved in the future, Pichai noted “it’s a matter of intense debate,” but said he thinks his team will eventually “make progress.”

As some A.I. experts have noted, that progress may be hard to come by because A.I. systems are so complex. Pichai acknowledged that there are still parts of the technology that his engineers “don’t fully understand.”

“There is an aspect of this which we call—all of us in the field—call it a ‘black box,’” he said. “And you can’t quite tell why it said this, or why it got it wrong.”

Pichai said his engineers “have some ideas” about how their chatbot works, and their ability to understand the model is improving. “But that’s where the state of the art is,” he noted. That answer may not satisfy some critics, however, who warn about the potential unintended consequences of complex A.I. systems.

Microsoft cofounder Bill Gates, for example, argued in March that the development of A.I. tech could exacerbate wealth inequality globally. “Market forces won’t naturally produce AI products and services that help the poorest,” the billionaire wrote in a blog post. “The opposite is more likely.”

And Elon Musk has been sounding the alarm about the dangers of A.I. for months now, arguing the technology will hit the economy “like an asteroid.” Last month, the Tesla and Twitter CEO joined a group of more than 1,100 CEOs, technologists, and A.I. researchers in calling for a six-month pause on training A.I. systems more powerful than OpenAI’s GPT-4, even though he was busy creating his own rival A.I. startup behind the scenes.

A.I. systems could also worsen the flood of misinformation through deepfakes (hoax images of events or people created by A.I.) and even harm the environment, according to the annual report on the technology that Stanford University’s Institute for Human-Centered A.I. released last week. Some of the researchers surveyed for the report warned that A.I. could lead to a “nuclear-level catastrophe.”

On Sunday, Google’s Pichai revealed he shares some of the researchers’ concerns, arguing A.I. “can be very harmful” if deployed improperly. “We don’t have all the answers there yet—and the technology is moving fast. So does that keep me up at night? Absolutely,” he said.

Pichai added that the development of A.I. systems should include “not just engineers, but social scientists, ethicists, philosophers, and so on” to ensure the outcome benefits everyone.

“I think these are all things society needs to figure out as we move along. It’s not for a company to decide,” he said.