Companies that sell artificial intelligence technologies to the federal government should prepare for tougher scrutiny of their products.

That’s according to Taka Ariga, the chief data scientist of the U.S. Government Accountability Office (GAO), the 100-year-old agency that serves as a watchdog for Congress. One of the agency’s tasks is investigating government processes, such as technology purchasing, for irregularities or outright corruption.

The GAO’s latest report on the government’s use of facial recognition found that several agencies have failed to properly monitor the use of the technology by their employees and contractors. That matters because privacy advocates worry that the government is using facial recognition unnecessarily, potentially violating people’s privacy.

The GAO’s report was based on self-reported data, and therefore wasn’t comprehensive, as Buzzfeed News noted. Some agencies, such as the U.S. Probation Office, which supervises people charged with or convicted of federal crimes, told the GAO that they hadn’t used facial recognition software sold by the startup Clearview AI during a certain time period. But that contradicted internal Clearview data that Buzzfeed News had previously seen.

Future GAO reports on facial recognition tech and related A.I. software will likely be deeper, Ariga told Fortune. Amid the increased use of machine learning, the agency has developed new methodologies for the “audits of tomorrow,” Ariga said.

The new audits will take into account how machine learning software must be fed enormous quantities of data to perform correctly. For audits, the GAO will require government vendors to disclose the data they used to train their software and reveal more about how it makes decisions. Because A.I. software is so new, its makers operate under few rules. The GAO hopes to change that by creating standards for how companies report how their technology works and by encouraging Congress to apply pressure to companies that fail to measure up.

Cloud computing vendors including Amazon and Microsoft generally keep the inner workings of their A.I. tools secret for competitive reasons. But new GAO auditing requirements may require them to disclose more, Ariga explained.

“People can’t hide behind their IP,” Ariga said, using shorthand for intellectual property. “That won’t work for the federal government.”

Ariga said the GAO has met with unspecified tech vendors to discuss what could happen during an A.I. audit. He said there was “a lot of nodding heads,” implying that company representatives understand the GAO’s new auditing guidelines and have yet to push back. He said that several representatives said “this will be an interesting few years.”

“Frankly, we don’t know how the industry will react,” Ariga said. But he added that vendors like Clearview AI, which gained notoriety for creating a massive database of people’s faces scraped from the Internet, “absolutely should” expect tougher reviews in the near future.

“We don’t want to be playing catch up,” he said.


Jonathan Vanian

@JonathanVanian

jonathan.vanian@fortune.com

A.I. IN THE NEWS

Fun with facial recognition. Chinese tech and video game giant Tencent has unveiled a facial recognition system dubbed Midnight Patrol that will prevent people in China under the age of 18 from playing some of the company’s games between 10 p.m. and 8 a.m., Bloomberg News reported. In order to play games, like “Honor of Kings,” people will have to scan their faces, presumably by using their smartphone cameras. “Anyone who refuses or fails face verification will be treated as a minor, included in the anti-addiction supervision of Tencent’s game health system and kicked offline,” the company said in a statement.

A.I. goes zoom. Business software company ZoomInfo said that it would buy the startup Chorus.ai for $575 million. Chorus.ai uses machine learning to analyze customer calls, emails, and meetings to discover key words or trends that could help salespeople land deals.

The future of voice acting? Several startups are developing A.I.-generated voices that sound human and could be used for corporate training videos, digital assistants, call center operators, and video game characters, according to an MIT Technology Review report. The article takes a look at companies that are trying to make a business out of the same deep learning technology used to create deepfakes, the realistic video and audio that seem authentic but are computer generated. From the article: It’s still difficult to maintain the realism of a voice over the long stretches of time that might be required for an audiobook or podcast. And there’s little ability to control an AI voice’s performance in the same way a director can guide a human performer.

What does A.I. have to do with the cloud? The U.S. Department of Defense’s Joint Artificial Intelligence Center (JAIC) plans to use the Air Force’s Cloud One computing services to power its A.I. projects after the Pentagon recently delayed its own cloud computing contract, according to a Federal News Network report. The Pentagon previously awarded its cloud computing contract, formerly known as the JEDI Cloud, to Microsoft, but has since indicated it may also work with Amazon Web Services. “The lack of an enterprise cloud solution has slowed us down, there’s no question about that,” JAIC director Lt. Gen. Jack Shanahan said, according to the report. The Air Force’s Cloud One service is powered by both Microsoft and AWS, the report noted.

A.I. takes cheating to another level. Video game companies will likely have to address the wave of newer A.I.-powered tools that help cheaters win at video games, tech publication Ars Technica reported. These new A.I. cheating tools use computer vision technology—which helps computers recognize objects—to calculate the exact speed and direction that a player needs to move a mouse to fire at a target in a first-person shooter game, the report said. The creators of the A.I. cheating tools claim that their methods are undetectable because they don’t manipulate the video game’s underlying software like most cheating tools do. It’s unclear what video game publishers will do to stop these tools from proliferating.

EYE ON A.I. TALENT

LoanDepot picked George Brady to be the financial firm’s chief digital officer. Brady was previously the chief technology officer of Capital One.

Bon Secours Mercy Health hired Jason Szczuka to be the healthcare firm’s first chief digital officer. Szczuka was previously the chief digital officer of Cigna.

The Ironman Group added Roshanie Ross as the sporting event company’s chief digital officer. Ross was previously the global general manager of digital customer experience at the health and security firm International SOS.

EYE ON A.I. RESEARCH

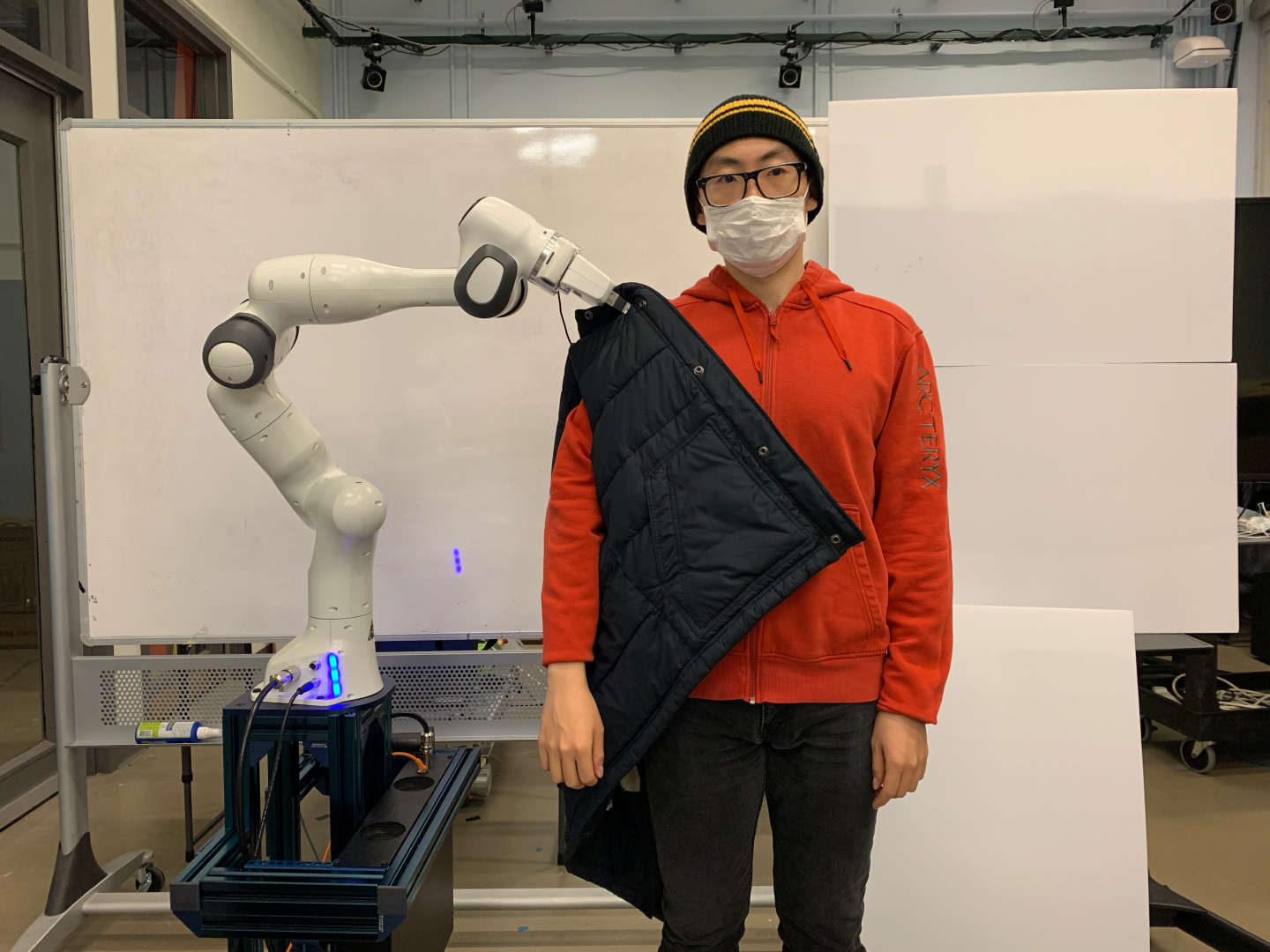

How to dress well. Researchers from the Massachusetts Institute of Technology published a paper that describes how robotic arms can place jackets on people without hurting them. The researchers developed a so-called planning algorithm that helps the robotic arm formulate the right paths and movements, an approach that “theoretically guarantees safety” for the humans. It’s a noteworthy achievement because robotic arms don’t typically possess the kind of finesse and agility required for such tasks.

The paper was accepted for presentation as part of the 2021 Robotics: Science and Systems conference. Check out a short video clip of the robot arm in action.

FORTUNE ON A.I.

Capital One is on a hiring spree for experts in this hot tech specialty—By Jonathan Vanian

3 principles for protecting the world from A.I. bias—By Rob Thomas

Cybersecurity startup Netskope raises new funding at a $7.5 billion valuation—By Lucinda Shen

Verizon begins blocking spoofed ‘local’ robocalls—By Chris Morris

BRAIN FOOD

The future of toys? As companies develop more sophisticated toys that use machine learning to recognize and respond to children’s faces and voices, parents must deal with potential privacy issues, according to a CNBC report. The article takes a look at the privacy backlash against Mattel’s Hello Barbie, which “created a persona” of the children who played with the doll.

From the article: Similar to My Friend Cayla, Hello Barbie was designed to talk with children, take in information about them and create a profile of the child in order to develop better conversations in the future. For instance, if a child told Hello Barbie about their favorite ice cream flavor or the sports they play, then Barbie created a persona of the child.

“There are severe consequences around child protection laws,” said Stephanie Wissink, a senior research analyst and managing director in the consumer practice at Jefferies. “When you start creating a technology profile of a child, you are crossing a privacy line.”