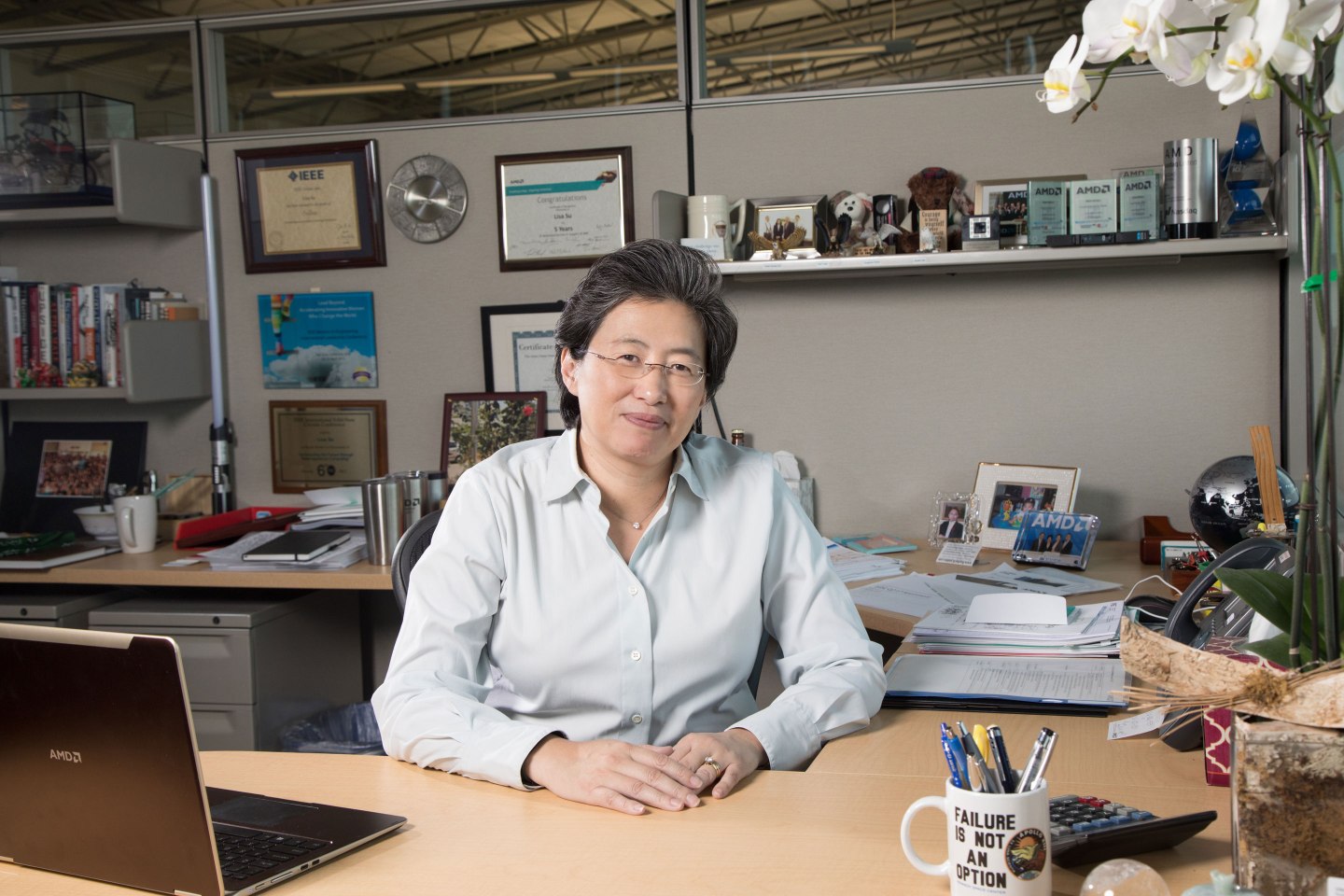

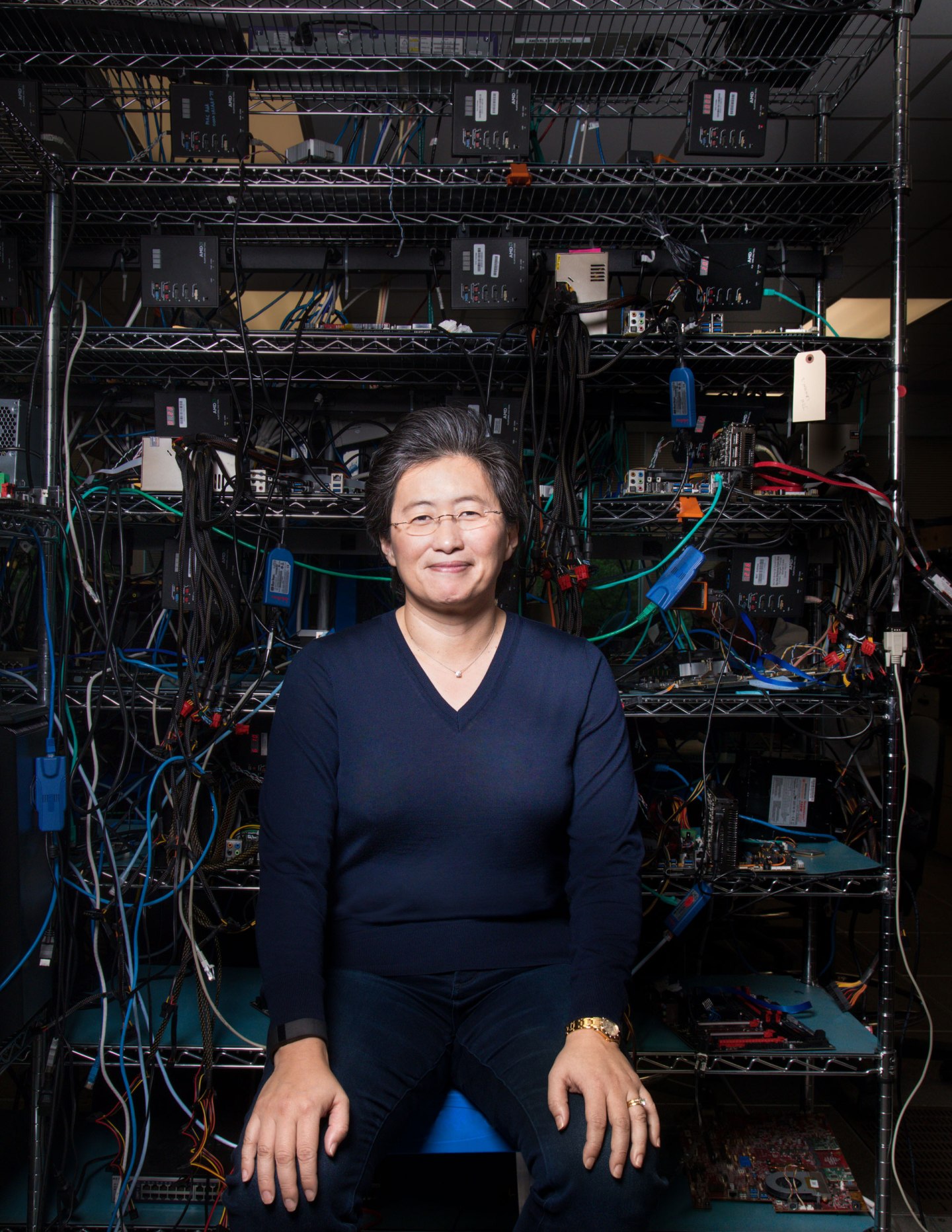

From the wide windows of her fourth-floor office, Lisa Su can look across the Austin campus of Advanced Micro Devices and see the laboratory building where the company’s new chips get tested. In the spring of 2016, Su was looking in that direction quite often, not to mention texting, instant messaging, and calling the staffers who worked there. She was waiting eagerly for a Zeppelin to arrive.

Zeppelin was the code name for AMD’s newest microprocessor, a flagship chip designed to run in personal computers and corporate servers—and the company’s future was riding on its success. Su, a Ph.D. microprocessor engineer herself, had become CEO in 2014 in the midst of a dismal sales decline for the chipmaker. Zeppelin was the first fruit of her effort to revive AMD’s product line, with redesigned-from-the-ground-up chips that could woo customers with intense computing needs, from finicky video gamers to tech companies running artificial intelligence and machine learning programs. If the new products thrived, AMD stood a chance of reversing years of losses, and even emerging from the shadow of rivals like Intel and Nvidia.

What Su didn’t anticipate was that when the Zeppelin finally got to Austin, it would crash-land.

Louis Castro, who oversees testing, had assembled a team of 80 engineers to check out the first Zeppelin chip, dubbed the Ryzen. But the night before testing was to begin, in April 2016, the head of the chip design team called Castro. A flaw had slipped through the designers’ computer simulations, and the first chip would be dead on arrival, incapable of even booting up a computer. If the problem couldn’t be quickly fixed, the chip might be delayed for weeks or even months. And to complicate matters, Su was 8,000 miles and 10 time zones away on a business trip in India. “You’ve never been part of something as big as this in your career,” Castro recalls. “I sat and thought to myself, Oh, my gosh, what am I going to do?”

Lee Rusk, the engineer in charge of Zeppelin, called the foundry that was making the chip for AMD and told it to stop production immediately. Chief technology officer Mark Papermaster stepped up to call the CEO with the bad news. The conversation was urgent, but neither executive panicked. And Su’s immediate reaction was decisive: Testing couldn’t be delayed.

AMD’s team quickly went into what Su calls “Apollo 13 mode.” Four different teams of engineers brainstormed solutions for getting around the flaw in the prototype chip to start testing immediately. As soon as she got back to Austin, Su headed straight for the lab, encouraging the teams while reminding them that “failure was not an option.”

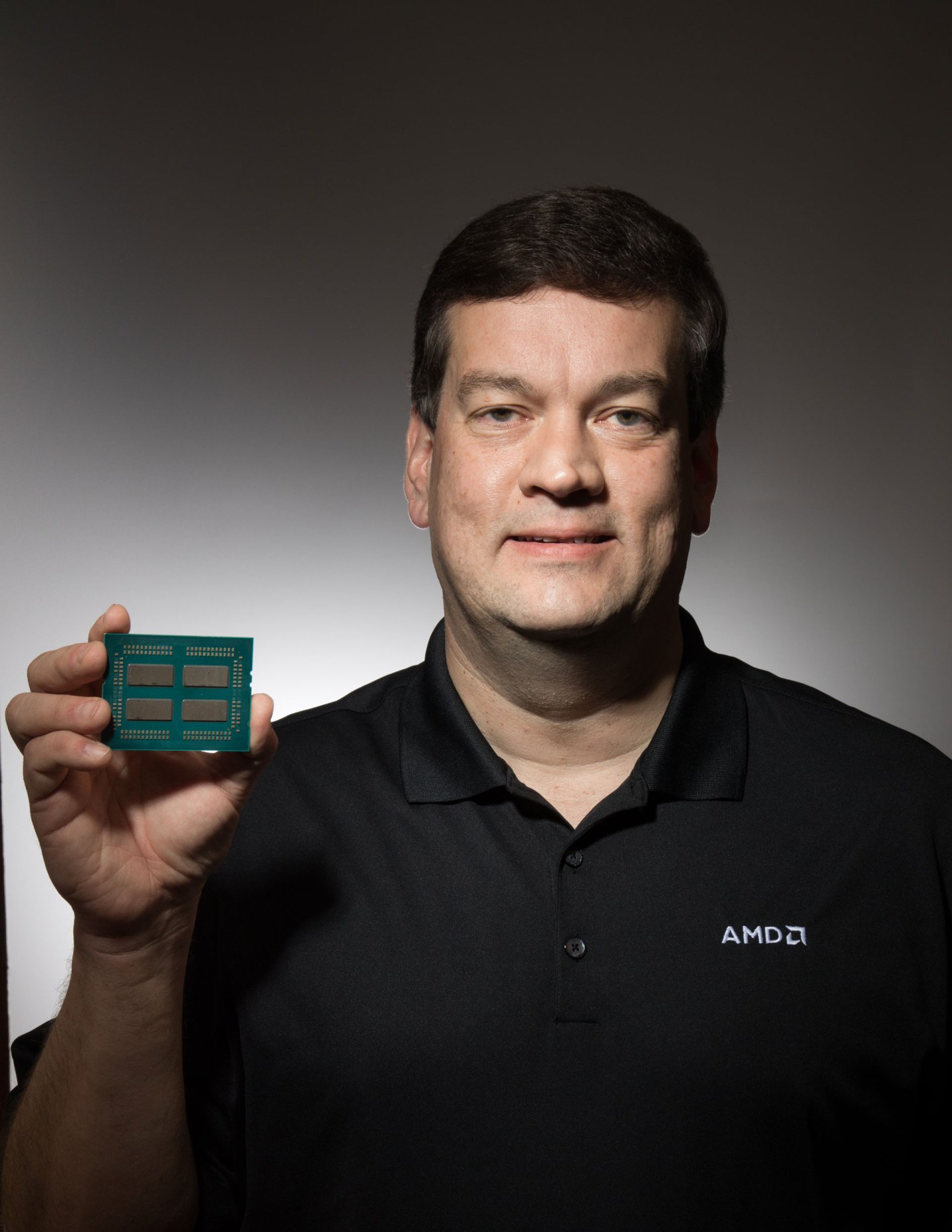

The silicon chips at the heart of today’s computers and phones are insanely complex. A single Ryzen chip the size of a nickel has five billion transistors, laid out across 100 different layers. The flaw that Castro’s team had found affected fewer than one-hundredth of one percent of the circuits. If it had been located deep in the chip, on one of the lowest layers, repairing it could have been fatally time-consuming. But AMD caught a break: The problem, it turned out, could be corrected at the foundry in a month. And Castro’s team figured out how to work around the flaw for testing, so not even that month was lost.

It’s hard to overstate how badly AMD needed a win, and a quick one to boot. The strategy it relied on over most of the past decade—building basic but essential chips, rolling out modest upgrades every year or two, and underpricing the competition—has broken down. Between 2007 and 2016, AMD’s market share in PC chips sank from 23% to less than 10%, according to IDC; in server chips, it has dropped below 1%. At the same time the overall PC market has shrunk faster than anyone expected, losing ground to a mobile revolution that largely passed the company by. AMD has lost money for five years in a row, and revenue bottomed out at just under $4 billion in 2015, a 39% decline from its 2011 peak.

Bad news for AMD, arguably, is a loss for Silicon Valley. The company has never been anywhere near No. 1 in the semiconductor arms race. But AMD has been an innovator in countless ways—the first chipmaker to break the one-gigahertz speed barrier, the first to put two processing cores in a single chip for PCs. And in that role, it has helped keep costs down and ideas flowing for innumerable businesses that rely on processing power. “Competition drives innovation in every market I’ve ever seen,” says Meg Whitman, CEO of Hewlett Packard Enterprise and now a veteran of the PC and server industries. “A broad ecosystem in the chip market,” she adds, “is a good thing for the industry as a whole.”

Today, AMD’s extinction looks less likely—thanks in large part to Su. Her strategy hinges on radical redesigns that could help AMD leapfrog Intel and Nvidia in the market for supercomputer-like processors, chips that do more calculations simultaneously and speed access to data stored on other parts of a user’s computer. At the same time, Su has begun weaning AMD from its dependence on PCs, focusing on deals to supply chips to the three leading video game console makers: Microsoft, Sony, and Nintendo. She has also boosted the bottom line by licensing server chip designs to a Chinese partner. To accomplish it all, Su is drawing on her experience as an engineer, on relationships she built over more than a decade at IBM—and on design talent poached from the glamorous confines of Apple.

The first Ryzen chips hit the market this March, and early reviews are strong. The chips easily exceeded AMD’s promise of 40% faster processing than the previous generation. And they’re matching the performance of comparable Intel chips at less than half the price; a top-end Ryzen 7 1800X chip for desktop computers, for example, sells for $499, while Intel’s Core i7-6900K is $1,089. Investors are excited too: AMD’s stock, which was barely treading water at under $2 a share in early 2016, now trades at about $12.

This summer will bring more debuts: Up next is a chip for servers called Epyc—taking on Intel’s near monopoly in that category. Later comes Vega, a graphics chip, or GPU. Such chips have become important not only for gamers but also as the best way to run cutting-edge artificial intelligence and machine learning tasks—the kinds that fuel Siri and Alexa and are used by corporate giants like GE to analyze “big data” streams. Indeed, demand for GPUs is growing even as the PC market remains in a slump. The Vega’s performance has been turning heads too: It convinced Apple to use the chip in its upcoming iMac Pro, the sleek, all-black computer it unveiled in June.

With those products still unproven, however, AMD is hardly out of the woods. Stacy Rasgon, the longtime chip industry analyst at Bernstein Research, believes Su has made the right bets and cleaned up the balance sheet but says she has yet to prove AMD can deliver. “In the context of a company where 18 months ago the concern was, are they going bankrupt or not, she’s doing a really good job,” Rasgon says. “But I have too much history with AMD to bet on their execution.” It’s Su’s mission to vanquish such skepticism.

That history is tightly intertwined with that of AMD’s far bigger rival, Intel. Both companies were founded by engineers and executives from semiconductor pioneer Fairchild. Robert Noyce, Gordon Moore, and Andy Grove struck out in 1968 to form Intel. The AMD group spun out a year later, under salesman Jerry Sanders, a self-proclaimed tough kid from Chicago’s South Side. AMD’s business took off in the 1980s largely because IBM decided it shouldn’t rely only on Intel for chips for its new personal computer and designated AMD as its official second supplier. AMD remains the only major alternative source for PC chips compatible with Intel’s x86 template—but even at its peak in the 2000s, when it sold nearly one out of every four chips found in PCs, it was always a distant second.

AMD reached its prime in the 1990s and 2000s under Sanders and his successor, Hector de Jesus Ruiz, introducing fast and innovative PC chips like the K6 that proved the company was more than an Intel clone. Its stock reached a high of over $42 a share in 2006. But Ruiz made the fateful decision that year to buy graphics-chip maker ATI for $5.4 billion. ATI’s technology never gave AMD the boost it hoped for. What’s more, the deal led to years in the red for the company as it juggled a heavy debt load and merger write-offs. From 2008 to 2011, AMD went through four CEOs.

That was the mess inherited by Su. Born in Taiwan, she moved to New York City with her family at age 2. Her parents told Lisa she could be a concert pianist, a doctor, or an engineer. The third choice resonated with a kid who regularly took apart her younger brother’s electric cars and tried to put them back together. She attended the prestigious Bronx High School of Science, then MIT—where her interest in microprocessors first took root—for an undergraduate degree, master’s, and Ph.D. in electrical engineering.

After a brief stint at Texas Instruments, Su went to IBM, where she spent more than a decade focused on the race for cheaper, faster chips. She also met a crucial mentor in Nicholas Donofrio, an IBM legend who had worked on everything from mainframes to the original PC. Donofrio arranged for Su’s appointment as special technical assistant to Lou Gerstner, the then-CEO, who had left RJR Nabisco to run Big Blue. Su’s job was to keep Gerstner abreast of major technology developments and ensure his lack of technical training didn’t hinder his decision-making. “What I got as a benefit from it was watching how the CEO of a major corporation thinks,” Su recalls now. Gerstner’s strength lay in simplifying the available options, homing in on how new technologies could actually help customers.

Su aspired to run a company, and as her star rose she felt she couldn’t do that at IBM. (Being a woman, she stresses, was never an obstacle: If anything, she feels lucky to have always had bosses who were free of gender hang-ups.) In 2007, Freescale Semiconductor, a Motorola spinoff that made chips for the Apollo moon missions and was in need of an overhaul, offered Su the role of chief technology officer, and she seized the opportunity. She relocated to Austin, where she eventually ran Freescale’s $1 billion networking chip division and helped the company go public in 2011.

By then, Donofrio had retired from IBM and joined AMD’s board to help craft a turnaround strategy. In Austin for a board meeting, Donofrio invited Su to dinner at the tony Barton Creek Resort. Each worried that the other was angry over Su’s departure from IBM. To break the tension, Donofrio ordered a very expensive California cabernet, Shafer Hillside Select, and as the wine flowed it became obvious that neither harbored a grudge. Remembering why Su had left IBM, Donofrio flipped the script. AMD had deeply rooted problems but also had incredible engineering talent and unique intellectual property. “It’s so ripe for you,” Donofrio recalls saying. “It’s so right.” Su jumped at the bait and joined the company in 2012. By 2014, she was CEO.

By then AMD had put together a design dream team that could reinvent its chips. Donofrio recruited Mark Papermaster, now the CTO, an IBM veteran whom Steve Jobs had wooed to help Apple develop its own line of chips for the iPhone. Papermaster in turn helped the company bring in other rock-star designers—most notably Raja Koduri in 2013. Widely respected as a graphics-chip visionary, Koduri was at Apple at the time, overhauling its product line to handle high-resolution Retina screens.

Jumping from Apple, at the height of its dominance, to struggling AMD seemed crazy to Koduri’s friends and family. His wife thought he was having a midlife crisis. But Koduri had come to believe that other platforms might displace mobile as the locus for innovation in chipmaking. Staring at the display of an iPhone for hours on end, he had an epiphany. “Man eventually wants to carry this thing around with him all the time, not just in his pocket,” Koduri recalls. “You want access to this information all the time.” That might be attained through virtual reality or digital assistants fueled by artificial intelligence, or some mix Koduri couldn’t foresee. But it was bound to boost demand for high-performance computing—and new kinds of chips. And AMD’s desperation for renewal made it the place where he could design such chips with a clean slate. “If you work like you have nothing to lose, you can do some pretty interesting things,” he says.

The “nothing to lose” ethos is beginning to pay dividends. Last year AMD’s revenue rose 7% over the previous year, to $4.2 billion. By the end of this year, the graphics business could be pulling more weight. In 2015, Su put all AMD’s graphics-chip work under Koduri in a new unit called the Radeon Technology Group. Radeon’s headcount has risen 60% since then, to 3,200, making it the largest team in the company. AMD’s PC chips “had taken the limelight,” Su says. “Now we’re saying that graphics is also a first-class citizen.”

Soon will come the real test of the strategy, as products with the new Vega chips go on sale this summer. Nvidia, under CEO Jensen Huang (another Taiwan-born American trained as an electrical engineer), is a powerful player in graphics, particularly on the software side, where its proprietary CUDA platform dominates as a tool for big-data analysis. Although the market for what analysts call “GPU compute” is small, it’s growing fast. From under $500 million in sales last year, it will hit close to $9 billion by 2020, Bernstein’s Rasgon estimates. AMD is building an open-source software platform to catch up to CUDA. But by its executives’ own admission, it’s starting far behind.

Intel, meanwhile, is far from folding its tent in the competition for PCs and servers. CEO Brian Krzanich is focusing attention on data center customers, and in May Intel unveiled a higher-performance desktop line dubbed the Core i9. “We really stepped on the accelerator in this space a while back,” says Greg Bryant, the new head of Intel’s PC chip unit.

Meg Whitman, for one, thinks AMD will punch above its weight. Notably, Whitman and Su are among the few women who have reached the ranks of CEO at a Fortune 500 company (though AMD’s recent revenue woes bumped it off that list in 2015), and Su consulted Whitman early on for advice about being a CEO. Whitman thinks Hewlett Packard Enterprise’s core server business will be a big beneficiary (and buyer) of AMD’s Epyc chip. “Why did Lisa succeed where others failed?” she asks. “She has focused that company on building great product. Beginning, middle, end of story.”

Su regularly travels the world to pitch that story. And on a sunny day this June she was back in the Boston area, mingling with some likely future customers. Almost 25 years after she earned her own doctorate, Su’s alma mater, MIT, invited her to speak to the 500 or so graduates receiving a Ph.D. Apple CEO Tim Cook (an Auburn University and Duke business school grad) would address the school’s full graduation the next day, but it was Su who spoke at the event where the doctoral recipients got their ceremonial hoods.

In a speech she wrote herself (a task many execs delegate), Su challenged the graduates to dream big, make their own luck, and change the world. Her competitive streak also came out. “Promise me that you will work hard at ensuring that there are lots of Harvard MBAs working for MIT Ph.D.s in the future,” she concluded to hearty applause. Afterward her celebrity status was confirmed as graduates asked her to pose for selfies and professors approached to discuss chip design and Moore’s law.

Su dutifully posed for the smartphones and chatted amiably before retreating to a nearby hole-in-the-wall Chinese restaurant, a favorite from her student days. Sitting next to her husband and ordering some of the spiciest dishes from the plastic menus, a relaxed Su summed up what she was trying to get across in her speech. “I’m fighting my set of wars, and I’m having a great time,” Su said. “Each of you can pick a war that you want to fight, and you can win it.”

A version of this article appears in the July 1, 2017 issue of Fortune with the headline “Betting It All, With Brand-New Chips.”