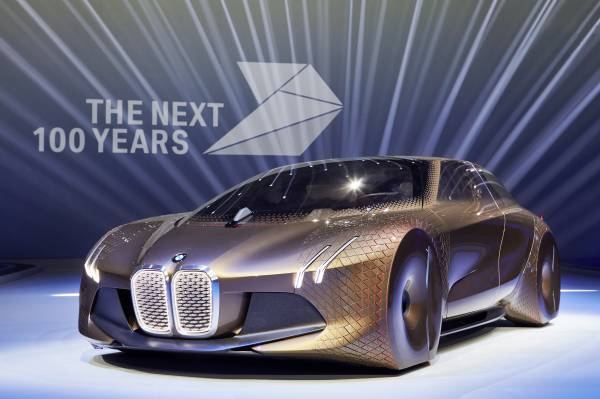

Concept cars, by design, are meant to showcase futuristic technology and unconventional ideas; they’re not production-ready vehicles built for today’s customer. And BMW’s Vision Next 100 concept car—introduced in Munich to kick off the automaker’s 100th anniversary—doesn’t disappoint.

There’s artificial intelligence (embodied in a gemstone-like object called the companion), hundreds of color-changing triangles that communicate with the driver, and even 4D printing—a process in which physical materials emerge fully functional.

The BMW Vision Next 100 concept car has plenty of far-out elements, but more important, the prototype car reveals the company’s underlying philosophy and indicates where the business is headed. Cut through all the noise, and BMW’s concept car suggests the company is keen to create one product that can handle two extremes: the driverless and driver-operated car.

Most major automakers, plus Google (GOOG), are working on autonomous driving technologies of varying degrees. Google is testing a fully autonomous prototype that replaces the driver completely and hopes to commercialize its technology by 2020. Automakers, meanwhile, are moving toward full autonomy in stages. At level 0 the driver is completely in control, and by level 4 the vehicle takes over all safety-critical functions and monitors roadway conditions for an entire trip.

All modern cars are at “level 1” of vehicle automation, which includes tech like precharged brakes. Many luxury automakers, such as Volvo, have recently introduced level 2 capabilities, in which automation takes over two primary control functions, for example adaptive cruise control combined with lane steering.

BMW’s Vision Next 100 concept car can switch between a fully autonomous mode and a driver-operated mode. BMW describes it as “a customized vehicle that is perfectly tailored to suit the driver’s changing needs.” (For more on BMW, read Fortune’s recent feature, “The Ultimate Driving Machine Prepares for a Driverless World.”)

A driver can choose “boost” mode, in which he or she has full control, albeit with some added support from the car, such as the ideal driving line projected onto the windshield. When the driver wants to kick back, he or she picks “ease” mode: the car takes over all operations and the interior transforms. The steering wheel and center console retract, the headrests turn to the side, and the seats and door trim merge to form a single unit so that the driver and front-seat passenger can turn toward each other. In “ease” mode, the windshield also turns into an entertainment display.

Meanwhile, the car’s 800 triangles, called “Alive Geometry,” communicate with the driver or passenger, depending on which mode the car is in. For example, in boost mode the triangles change color to alert the driver to an upcoming obstacle.

All of this (the color-changing triangles, the AI, and an interior that physically transforms) is meant to make the transition between driverless and driver-operated modes safe. It’s a problem that automakers producing cars with advanced automated driving tech are already wrestling with. Even Google struggled with the challenge of keeping drivers engaged enough that they can take control of driving as needed. Ultimately, Google opted to bypass that problem and make only fully autonomous vehicles, as opposed to semiautonomous ones that require some driver engagement.