Happy Earth Day! Or is it? We dig deep into the equity implications of the moment we’re in, explore the hidden bias in the tort system and the health impacts of racism, and offer a simple solution to help people feel more included at work. All that, plus Jonathan Vanian highlights a new way to think about A.I. and two recent grants that might help reduce bias in systems before they become permanent parts of the landscape.

Happy Friday.

By so many important measures, the planet is more in peril than ever before, as today’s Google Doodle so helpfully makes clear. Originally designed to draw attention to the necessary steps to a sustainable future, Earth Day has also become a marketing event, riddled with mixed corporate messaging.

But now is not the time to be cynical. For one thing, sustainability is now on a lot of people’s to-do lists.

Last month, the Securities and Exchange Commission proposed a rule requiring publicly traded companies to disclose climate-related risks as part of their normal reporting protocols. It’s a popular idea. “Today, investors representing literally tens of trillions of dollars support climate-related disclosures because they recognize that climate risks can pose significant financial risks to companies, and investors need reliable information about climate risks to make informed investment decisions,” said SEC Chair Gary Gensler in a statement. That means it’s crunch time for CFOs, says my colleague Sheryl Estrada, who writes the outstanding CFO Daily newsletter. “The proposed climate-risk disclosure rules…would require compliance as soon as fiscal 2023 for the largest public companies,” she writes.

But this is also a story about people, hidden inequities, and an up-and-coming generation poised to inherit the outcomes of past inaction.

Because of climate change, an additional 68 to 135 million people could be pushed into poverty by 2030, and the people who are already living in impoverished conditions will continue to bear the brunt of its impacts. The World Bank describes the most vulnerable populations around the world this way: “Female-headed households, children, persons with disabilities, Indigenous Peoples and ethnic minorities, landless tenants, migrant workers, displaced persons, sexual and gender minorities, older people, and other socially marginalized groups.”

That’s a lot of people, and a lot of ground lost.

And then there’s the world outside your door.

In the United States, the White House recently announced that floods, drought, wildfires, and more powerful hurricanes could cost the U.S. federal budget about $2 trillion annually until 2100. The list of communities vulnerable to climate disaster in the U.S. mirrors those around the world. According to a 2021 report from the EPA, Black people will suffer higher mortality rates, Latinx populations are more likely to live in communities that will lose work because of extreme heat, and American Indians and Alaska Natives are more likely than other demographics to live in areas that will be inundated by flooding.

Put another way, in addition to the planet, relationships with shareholders, stakeholders, and the talent pipeline also hang in the balance.

Are you ready? Can we help? Let me know what reporting or resources we can provide, subject line: Climate.

Wishing you a carbon-neutral weekend.

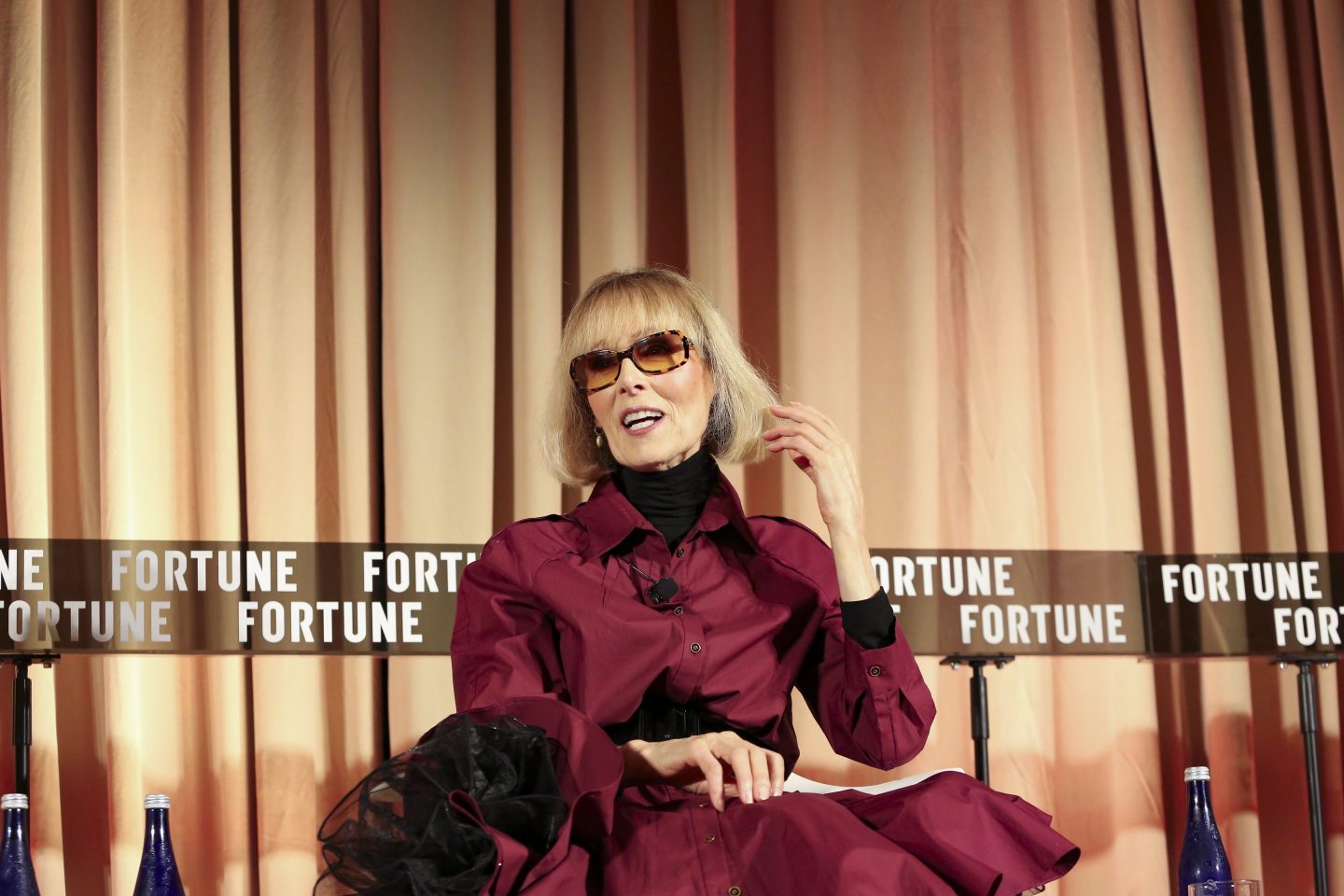

Ellen McGirt

@ellmcgirt

Ellen.McGirt@fortune.com

In Brief

Bridges and artificial intelligence have more in common than one might think.

Zia Khan, the senior vice president for innovation at The Rockefeller Foundation, considers bridges and A.I. as examples of infrastructure. While bridges exist in the physical world, serving a crucial role in facilitating transportation, machine learning’s task lies in the digital realm, enabling apps to perform feats like serving relevant job postings to people or recognizing one’s face to unlock a smartphone.

Because A.I. can be considered a kind of infrastructure, Khan is concerned about the technology’s development and how it can influence society, particularly when it comes to one of A.I.’s thorniest problems: its propensity toward bias.

“How might we tilt in the early stage the development of A.I. so that it doesn't reinforce and cement biases that could create a lot of unintentional harms,” Khan tells Fortune.

Khan helped oversee The Rockefeller Foundation’s recent grants to two non-profits attempting to ensure that A.I. functions as stable infrastructure for society.

The Black in AI non-profit received a $300,000 grant to help fund its work growing the community of Black A.I. practitioners and researchers who could be excluded or overlooked by companies and academic institutions. And the Distributed AI Research Institute (DAIR), founded by the leading technologist and A.I. bias expert Timnit Gebru, received about $200,000 to help fund the group’s work creating more equitable A.I. research.

Prior to starting DAIR, Gebru was pushed out by Google after she questioned the search giant’s treatment of Black workers and alleged that the company was suppressing her research into some of the societal harms of so-called large language models.

Khan views groups like Black in AI and DAIR as important to tilting the “early stage development of A.I. so that it doesn't reinforce and cement biases that could create a lot of unintentional harms,” he says.

There’s a passage in writer Robert Caro’s biography of the New York urban planner Robert Moses that stands out in Khan’s mind. Although Moses “was hailed as a hero,” the urban planner “had some biases around not wanting certain races or poor people” to access beaches outside of New York City and Manhattan that were intended for the elite. Whereas the wealthy had cars to drive to the beaches, the poor had to take buses.

“So then he built these really low bridges so that the buses wouldn't be able to get there and that becomes a permanent feature of the landscape,” Khan says.

Khan views A.I. as functioning like bridges in the digital world.

“So we're trying to prevent the intentional and often unintentional biases that happen when you start to think of A.I. as infrastructure underlying decision-making systems and things like that,” he says.

Jonathan Vanian

@JonathanVanian

jonathan.vanian@fortune.com

On background

Hey, how are you doing?

It is a surprisingly powerful question, particularly if you ask it sincerely. Karen Twaronite, EY’s Global Chair, Diversity and Inclusiveness, has data that suggests the question can trigger an important moment between colleagues. Pre-pandemic, their data found that more than 40% of people surveyed reported feeling physically and emotionally isolated in the workplace, across all generations, genders, and ethnicities. But about the same number reported that when people checked in on them, they felt more included. It has to be personal, though: being cc’d on emails or invited to formal events (or Zooms) isn’t going to cut it. I share this advice from David Kyuman Kim often and it bears repeating now: It’s not the asking, it’s the listening. In times of crisis, “We have a responsibility to draw our attention to co-workers, to community members and ask a simple question–‘how are you doing?’” he says. “And then listen, really listen, as if you don’t already know the answer.”

Harvard Business Review

Black lives are shorter, even next door

Health disparities play out in the lives of families in quiet and terrifying ways, as this extraordinary piece from Linda Villarosa poignantly shows. It begins with a tour of the Englewood section of Chicago, which was once a “promised land” destination for Great Migrationers from rural Mississippi, like her grandparents. The promise has been razed. And, she writes, “it is Chicago, not the rural South, that has the country’s widest racial gap in life expectancy: In the Streeterville neighborhood, nine miles north, which is 73 percent white, residents live, on average, to 90 years old; in Englewood, where nearly 95 percent of residents are Black, people live to an average of only 60.”

New York Times

The hidden bias in the tort system

This opinion piece, written by law professors Ronen Avraham and Kimberly Yuracko, offers a compelling look at how biased sex and race data inform jury awards. Here’s a clear Black and white example: Using likely lifetime earnings data to calculate an award for an injured plaintiff. “The use of data that explicitly distinguishes and defines people based on race and sex ensures that victims who are female, Black or from other marginalized communities receive lower damage awards than do victims who are White men,” they write, bringing receipts. The practice is common and longstanding.

Washington Post

Racism is literally making us sick

Every seven minutes a Black person dies prematurely in the U.S., people who would not be dead if the health of Black and white people were equal, says David R. Williams, a Harvard professor and researcher. To better understand the impact of racism on health, he created the Everyday Discrimination Scale, a now essential tool that measures the small instances of everyday discrimination—like being treated less courteously than others or treated with suspicion—that erode the dignity of Black people. His research has been pivotal in understanding the link between racism and health. “Research has found that experiences of discrimination have been associated with the elevated risk of a broad range of diseases, from blood pressure to abdominal obesity, to breast cancer to heart disease and even premature mortality.”

TEDMed

This edition of raceAhead was edited by Wandy Felicita Ortiz.

Parting Words

I am impatient / I want to live in a nation where my leaders actually lead / They see our lungs are being filled with last breath from burning trees drowning in ashes, more flames than people.

— Aniya Butler, 16-year-old poet and climate and racial justice advocate, member of Youth vs Apocalypse