Science has cultivated much of the progress our species has made over the past few centuries, allowing us to treat diseases, build computers, and visit other worlds.

However, trust in the scientific way of thinking, which has been so important since the Enlightenment, seems to be eroding.

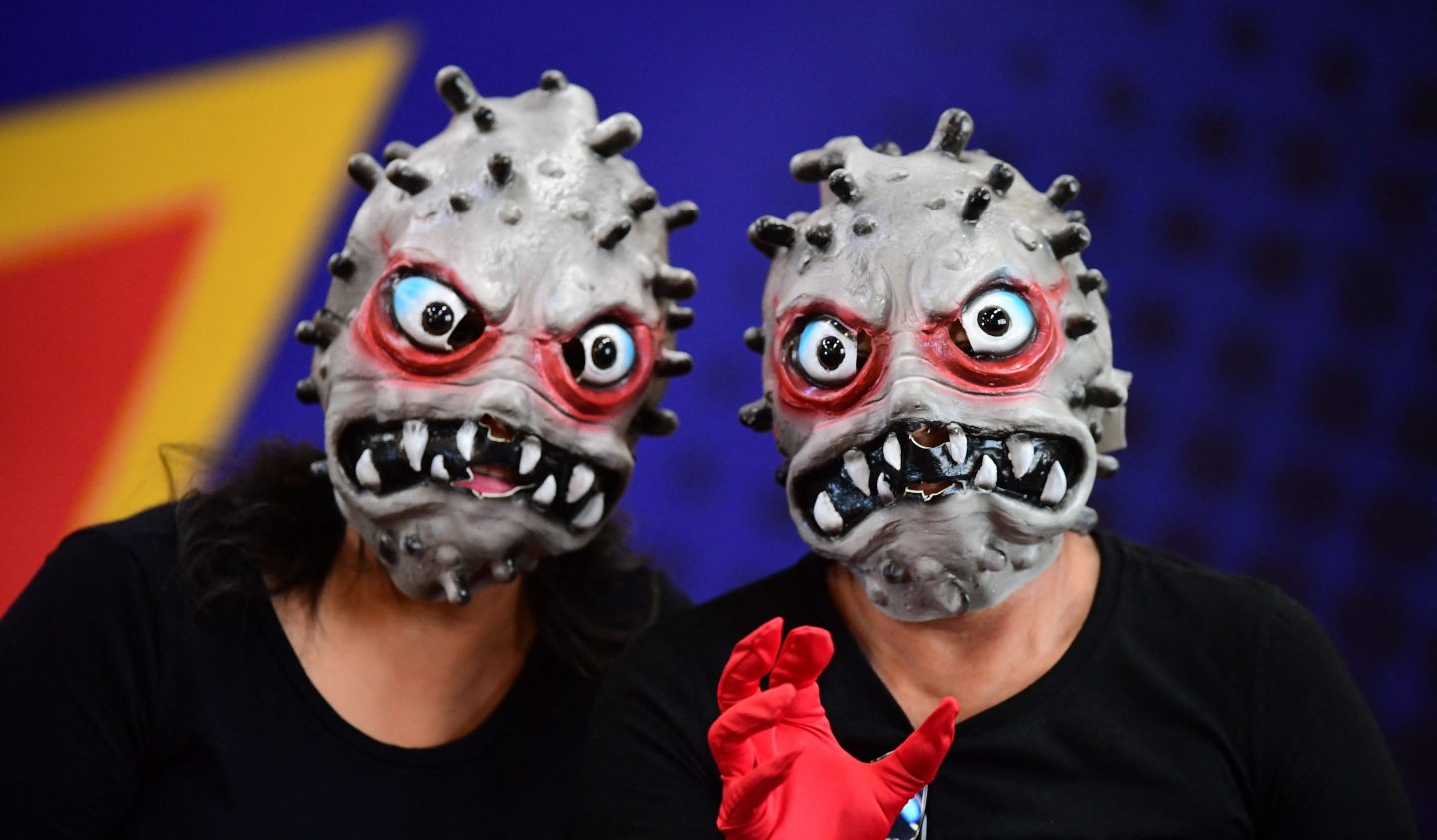

The director-general of the World Health Organization (WHO) has described how the world is currently fighting not just the COVID-19 pandemic but also an “infodemic”. Data is being distorted and tangled with falsehoods so perniciously that it is causing harm to people’s health and lives.

Misinformation about vaccines has interfered with the uptake of our best defense against COVID-19, while some people have died after following inaccurate advice or taking fabricated treatments against the virus.

There are concerns that the digital ecosystems we use to communicate are allowing misinformation and disinformation to spread faster and in ways that they never have before.

I contributed to a recent report published by the Royal Society, the U.K.’s national academy of sciences, which examined the evidence on the impact of online misinformation. It concluded that we need to build “collective resilience” to scientific misinformation rather than simply banning it from online platforms.

A kernel of truth

Not all areas of scientific work are subject to misinformation campaigns and conspiracy theories. Few hoax rumors circulate on social media and internet forums about quantum computing or gravitational waves. So why do other topics such as climate change, 5G technology, and vaccines become the subject of sustained misinformation campaigns?

While these topics span different areas of science and affect people’s lives in different ways, a close look reveals some common threads running through the myths that persist around science.

Invariably, they start with a kernel of truth. They are underpinned by a fact or observation that makes the arguments that follow feel more plausible. They often use scientific-sounding reasoning too. But while scientists build hypotheses that can be tested, adapting their theories based on their observations, conspiracy theories and fake news tend to be rooted in emotions. They trigger emotional reactions such as moral panic that cloud how evidence is interpreted.

Emotional messages travel faster and use a form of logic that is very difficult to then counter with facts, even when there is a preponderance of evidence.

A fertile ground

Research suggests that misinformation is more likely to spread at times of great uncertainty, such as during the current pandemic, when it becomes difficult for people to assess a claim’s credibility. In the absence of a good explanation, it is natural to seek out alternative information to fill the gaps in our mental model of the world.

People’s own preconceptions also make them more prone to believe misinformation. If a claim confirms our existing beliefs and biases, then we are less likely to interrogate its truthfulness. Misinformation is also more likely to spread if it has a direct impact on the people who are reading and sharing it. Certain topics such as those involving our health or those that trigger moral outrage proliferate more readily.

It would also be wrong to be too quick to dismiss those who are vulnerable to misinformation. Many communities still carry the weight of inequality and discrimination that has left them with a deep distrust of conventional sources of information.

The medical community, for example, should be aware of how its past mistakes affect the willingness of certain groups to listen to it. Instead, those groups may seek alternative sources of information on the internet, on social media, or within their own communities.

Where we get our information matters, and how closely we question its validity varies accordingly. We are more likely to trust our friends and family, who form the core of our social networks, so it is hardly surprising that we see more misinformation being spread in communities that already distrust the medical community.

What to do about it?

Armed with this understanding of how falsehoods can become an alternative reality, it may even be possible to predict what topics may become fake news in the future.

There is also some evidence that simply rebutting misinformation may only serve to amplify that claim. It is impossible to win an argument that is driven by fear and personal values with facts alone. Facts definitely matter, but they are not enough. The words used and how they are communicated will be what makes the difference.

In the words of the American pediatrician and educator Wendy Sue Swanson: “I knew if I wanted to change the minds of my patient’s parents, I had to change the conversation.”

It is something I have tried to do myself.

When COVID-19 hit, I saw some of my own friends and family back in Kentucky entirely dismiss the existence of a global pandemic. At first, I tried to debate with them on social media by sending them links to information from the WHO and research papers. It would end up enraging me, so I decided to change how I was using social media.

Rather than withdrawing from the conversation altogether, I began posting the occasional picture of a flower along with a short, personal commentary on what was happening with the pandemic in Oxford at the time. The most extraordinary thing happened: Those same people I had been fighting with started engaging and asking questions about whether they should get a vaccine. I had changed the conversation.

We need to think carefully about how we choose to communicate with the public, especially those who are harder to reach. The stakes are high: nothing less than our trust in the ability of science to deliver progress, our trust in governments and society, and our trust in each other.

Professor Gina Neff is the executive director of the Minderoo Centre for Technology & Democracy at the University of Cambridge and professor of Technology & Society at the University of Oxford. Her research focuses on the effects that the rapid expansion of the digital information environment has had on our lives. She has also written a number of books on the topic.
