This is the web version of Eye on A.I., Fortune’s weekly newsletter covering artificial intelligence and business. To get it delivered weekly to your in-box, sign up here.

Hello and welcome to the last “Eye on A.I.” of 2020! I spent last week immersed in the Neural Information Processing Systems (NeurIPS) conference, the annual gathering of top academic A.I. researchers. It’s always a good spot for taking the pulse of the field. Held completely virtually this year thanks to COVID-19, it attracted more than 20,000 participants. Here were a few of the highlights.

Charles Isbell’s opening keynote was a tour de force that made great use of the pre-recorded video format, including some basic special effects edits and cameos by many other leading A.I. researchers. The Georgia Tech professor’s message: it’s past time for A.I. research to grow up and become more concerned about the real-world consequences of its work. Machine learning researchers should stop ducking responsibility by claiming such considerations belong to other fields—data science or anthropology or political science.

Isbell urged the field to adopt a systems approach: how a piece of technology will operate in the world, who will use it, on whom will it be used or misused, and what could possibly go wrong, are all questions that should be front-and-center when A.I. researchers sit down to create an algorithm. And to get answers, machine learning scientists need to collaborate far more with other stakeholders.

Many of the invited speakers picked up on this theme: how to ensure A.I. does good, or at least does no harm, in the real world.

Saiph Savage, director of the human-computer interaction lab at West Virginia University, talked about her efforts to lift the prospects of A.I.’s “invisible workers”—the low-paid contractors who are often used to label the data on which A.I. software is trained—by helping them train one another. In this way, the workers gained some new skills and, by becoming more productive, could possibly earn more from their work. She also talked about efforts to use A.I. to find the best strategies to help these workers unionize or engage in other collective action that might better their economic prospects.

Marloes Maathuis, a professor of theoretical and applied statistics at ETH Zurich, looked at how directed acyclic graphs (DAGs) could be used to derive causal relationships in data. Understanding causality is essential for many real-world uses of A.I., particularly in contexts like medicine and finance. Yet one of the biggest problems with neural network-based deep learning is that such systems are very good at discovering correlations, but often useless for figuring out causation. One of Maathuis’s main points was that in order to suss out causation it is important to make causal assumptions and then test them. And that means talking to domain experts who can at least hazard some educated guesses about the underlying dynamics. Too often machine learning engineers don’t bother, falling back on deep learning to work out correlations. That’s dangerous, Maathuis implied.

It was hard to ignore that this year’s conference took place against the backdrop of the continuing controversy over Google’s treatment of Timnit Gebru, the well-respected A.I. ethics researcher and one of the very few Black women in the company’s research division, who left the company two weeks earlier (she says she was fired; the company continues to insist she resigned). Some attending NeurIPS voiced support for Gebru in their talks. (Many more did so on Twitter. Gebru herself also appeared on a few panels that were part of a conference workshop on creating “Resistance A.I.”) The academics were particularly disturbed that Google had forced Gebru to withdraw a research paper it didn’t like, noting that the move raised troubling questions about corporate influence over A.I. research in general, and A.I. ethics research in particular. A paper presented at the “Resistance A.I.” workshop explicitly compared Big Tech’s involvement in A.I. ethics to Big Tobacco’s funding of bogus science around the health effects of smoking. Some researchers said they would stop reviewing conference papers from Google-affiliated researchers since they now could not be sure the authors weren’t hopelessly conflicted.

Here were a few other research strands to keep an eye on:

• A team from semiconductor giant Nvidia showcased a new technique for dramatically reducing the amount of data needed to train a generative adversarial network (or GAN, the type of A.I. used to create deepfakes). Using the technique, which Nvidia calls adaptive discriminator augmentation (or ADA), it was able to train a GAN to generate images in the style of artwork found in the Metropolitan Museum of Art using fewer than 1,500 training examples, which the company says is at least 10 to 20 times less data than would normally be required.

• OpenAI, the San Francisco A.I. research shop, won a best research paper award for its work on GPT-3, the ultra-large language model that can generate long passages of novel and coherent text from just a small human-written prompt. The paper focused on GPT-3’s ability to perform many other language tasks—such as answering questions about a text or translating between languages—with either no additional training or just a few examples to learn from. GPT-3 is massive, taking in some 175 billion different variables, and it was trained on many terabytes of textual data. It’s interesting to see the OpenAI team concede in the paper that “we are probably approaching the limits of scaling,” and that to make further progress new methods will be necessary. It is also notable that OpenAI mentions many of the same ethical issues with large language models like GPT-3—the way they absorb racist and sexist biases from the training data, their huge carbon footprint—that Gebru was trying to highlight in the paper that Google tried to force her to retract.

• The other two “best paper” award winners are worth noting too: Researchers from Politecnico di Milano, in Italy, and Carnegie Mellon University used concepts from game theory to create an algorithm that acts as an automated mediator in an economic system with multiple self-interested agents, suggesting actions for each to take that will bring the entire system into the best equilibrium. The researchers suggested such a system would be useful for managing “gig economy” workers.

• A team from the University of California, Berkeley, scooped up an award for their research showing that it is possible, through careful selection of representative samples, to summarize most real-world data sets. The finding contradicts prior research, which had essentially argued that because it could be shown that there were a few data sets for which no representative sample existed, summarization itself was a dead end. Automated summarization, of both text and other data, is becoming a hot topic in business analytics, so the research may wind up having commercial impact.

I will highlight a few other things I found interesting in the Research and Brain Food sections below. And for those who responded to Jeff’s post last week about A.I. in the movies, thank you. We’ll share some of your thoughts below too. Since “Eye on A.I.” will be on hiatus for the upcoming few weeks, I want to wish you happy holidays and best wishes for a happy, healthy new year! We’ll be back in 2021. Now, here’s the rest of this week’s A.I. news.

Jeremy Kahn

@jeremyakahn

jeremy.kahn@fortune.com

A.I. IN THE NEWS

C3.ai goes public. The California-based A.I. company founded by billionaire Tom Siebel, which counts big companies such as Caterpillar and Baker Hughes as customers as well as the U.S. Air Force, went public on the New York Stock Exchange, raising $651 million. The company was valued at $4 billion on its debut, but the shares immediately soared, and at one point the company had a market cap of more than $11 billion. For more of Siebel's thoughts about the IPO, when C3.ai might turn a profit, and the ethics of A.I., you can read my interview with him here.

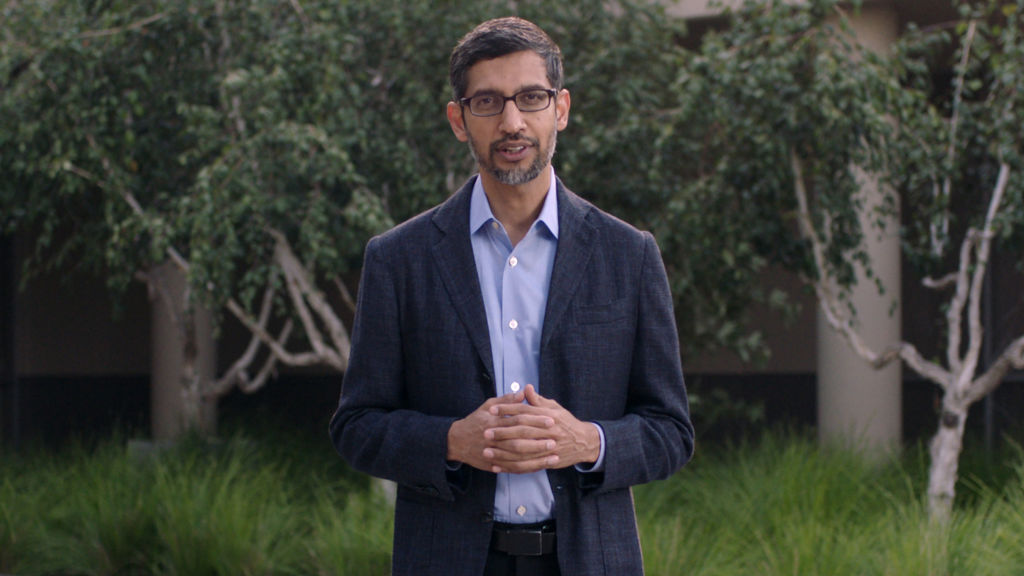

Google CEO Pichai promises to investigate Gebru's departure. Sundar Pichai, CEO of Google-parent Alphabet, wrote a letter to the whole company promising to investigate the circumstances that led to the departure of Timnit Gebru, co-head of the company's AI Ethics research team. Gebru says she was fired after questioning Google's commitment to diversity in a message she posted to an internal listserv. The company insists she resigned. The incident followed Google's request that she withdraw a research paper looking at the ethics of A.I. systems responsible for recent breakthroughs in natural language processing. Gebru was one of Google's few Black women researchers and Pichai acknowledged that what happened to her "seeded doubts and led some in our community to question their place at Google. I want to say how sorry I am for that, and I accept the responsibility of working to restore your trust," according to The Verge. But in an interview with VentureBeat, Gebru said Pichai was trying to paint her as "an angry Black woman" and that his memo was "dehumanizing." Pichai's memo came after more than 6,000 people, including more than 2,000 Google employees, signed an open letter in support of Gebru and calling on the company to live up to its commitments to academic freedom.

Did Huawei and Megvii build a system to help China identify Uighurs? That's what The Washington Post reported based on documents it obtained that said the two companies had collaborated on a facial recognition system that had, as one of its features, a "Uighur alarm." The Post says the system was tested in 2018 and could identify members of the ethnic minority group by their facial features. Huawei told the paper that the system was "simply a test and it has not seen real-world application. Huawei only supplies general-purpose products for this kind of testing. We do not provide custom algorithms or applications." Megvii said its systems were not designed to target specific ethnic groups. Both companies have been sanctioned by the U.S. government for their role in allegedly abetting human rights violations by Beijing.

Graphcore says its A.I. computer chips outperform Nvidia's. The U.K.-based A.I. hardware startup, which is a likely IPO candidate in the coming months, published data from benchmark tests that it says show its specialized chips, which are engineered to accelerate the running of deep learning systems, offer better performance than Nvidia's graphics processing chips, which are currently the workhorses for most A.I. computing tasks. For some tasks, Graphcore's intelligence processing units (IPUs) were more than five times faster than Nvidia's DGX A100 system.

Ransomware criminals target Intel's Habana Labs A.I. chip group. A group of hackers likely linked to Iran has posted on the web what appears to be data stolen from Habana Labs, an Israel-based A.I. chip company acquired by Intel a year ago, and has threatened to post far more if Habana doesn't pay a ransom in bitcoin, according to a story in tech publication The Register.

The University of Texas abandons admissions algorithm over bias concerns. UT Austin had been using software called GRADE to screen Ph.D. candidates for its computer science department since 2013. But it stopped using the system in early 2020, according to a story in The Register, because of concerns the system may have incorporated historical racial and gender biases in admissions decisions.

EYE ON A.I. TALENT

Graphable, a Boston-based data science company that specializes in software that helps organize and analyze data in graph form, has hired Lee Hong as its director of data science, according to Ai Authority. Hong was formerly director of data science for L Brands.

Aktana, a San Francisco company that provides A.I.-enabled customer engagement software for the life sciences industry, announced it has hired Dmitri Daveynis to be its chief technology officer and senior vice president of engineering and Mary Triggiano as senior vice president of professional services. Daveynis had previously been vice president of engineering at analytics firm Verint. Triggiano was previously chief operating officer at digital media company Theorem.

EYE ON A.I. RESEARCH

There was an entire workshop at NeurIPS devoted to ways in which machine learning can be applied to combating climate change. One paper to highlight from that session: Jiayang Wang from Harrisburg University's data science department teamed up with Selvaprabu Nadarajah from the University of Illinois at Chicago, Jingfan Wang from Stanford University, and Arvind Ravikumar, also from Harrisburg University, to create a machine learning system to automatically predict areas of oil and natural gas drilling sites that are likely to leak methane, the heat-trapping gas that is 84 times more potent than carbon dioxide and a major contributor to global warming.

Normally such work is carried out by engineers walking a site armed with special imaging cameras. It is, as the researchers point out, slow and time-consuming. The machine learning system allows engineers to prioritize for repair the parts of a site most likely to leak large amounts of methane. In a proof of concept project, their algorithm reduced the time needed to halve methane emissions by 42%. It also reduced the mitigation cost from $85 per ton of carbon dioxide equivalent to $49 per ton. You can read more here.

One of the interesting things about this paper is that there has been a movement among some A.I. researchers to eschew work for oil and gas companies on ethical grounds—after all, why help major carbon emitters to stay in business? But, as this research shows, some of the most impactful steps in combating climate change may come from helping exactly these sorts of companies make their existing operations more efficient.

FORTUNE ON A.I.

Europe is missing out on the A.I. revolution—but it isn’t too late to catch up—by Francois Candelon and Rodolphe Charme di Carlo

Google’s ouster of a top A.I. researcher may have come down to this—by Jeremy Kahn

Tom Siebel, CEO of C3.ai, discusses failure and the future after his company’s soaring IPO—by Jeremy Kahn

Vise, a company founded by two high-school graduates, raises $45 million in funding with Sequoia at the helm—by Lucinda Shen

BRAIN FOOD

Anthony Zador, a neuroscientist at Cold Spring Harbor Laboratory, gave a talk at NeurIPS in which he made a provocative argument: Maybe the A.I. community is far too focused on individual learning as the essence of intelligence and not focused enough on the innate abilities that so many animals possess—abilities that are not learned at all on the individual level and which have been selected and programmed by evolution.

The wiring diagram for each creature's brain, Zador argues, has to pass through what he calls a "genetic bottleneck"—the fact that the genome can only encode a limited amount of information. And that means the wiring diagram cannot be too complex and probably includes more hard-coded rules than A.I. researchers would like to admit. Focusing on this bottleneck, Zador argues, may be the key to unlocking artificial neural networks that will be able to learn far more rapidly from very limited data. He also argues that to the extent humans have been more successful than other species, it is not because we are better learners, but because we have developed a system for overcoming the genetic bottleneck through language and the cultural transmission of knowledge. You can read more about Zador's argument here.

SATURDAY NIGHT AT THE MOVIES...

Thank you again for emailing in response to Jeff's Eye on A.I. newsletter last week asking for reader picks of the best depiction of A.I. in film and television. Here were some of the responses:

Kurt Bunker suggested the television series "Devs." Jonathan Dunnett said "Person of Interest." Mel Edwards recommends Japanese anime "Psycho-Pass" as well as "I Am Mother." Celia Chapman says that while she knows it's not a movie, the book "Robopocalypse" by Daniel H. Wilson is worth checking out, as well as the short story "Executable" by Hugh Howey, which can be found in the anthology "Robot Uprisings" edited by Wilson. Lisa Baker's top choice was "Star Trek: First Contact," particularly looking at the story arc of the character Data. Leland Schwartz is, like many on Fortune's own staff (myself included), a big fan of "Ex Machina." Jeff says that Magnus Daniellson was one of many people who wrote in to remind him that he had left "The Matrix" off his list. And Thomas Cross emailed to say about HAL in "2001" that "there are many interpretations, but HAL was actually programmed to do what he did. that is why so many AI projects fail." Thanks for all the comments and feedback.