Intel is ready to ship its long-awaited computer chip for powering artificial intelligence projects, with the chip due out by the end of the year.

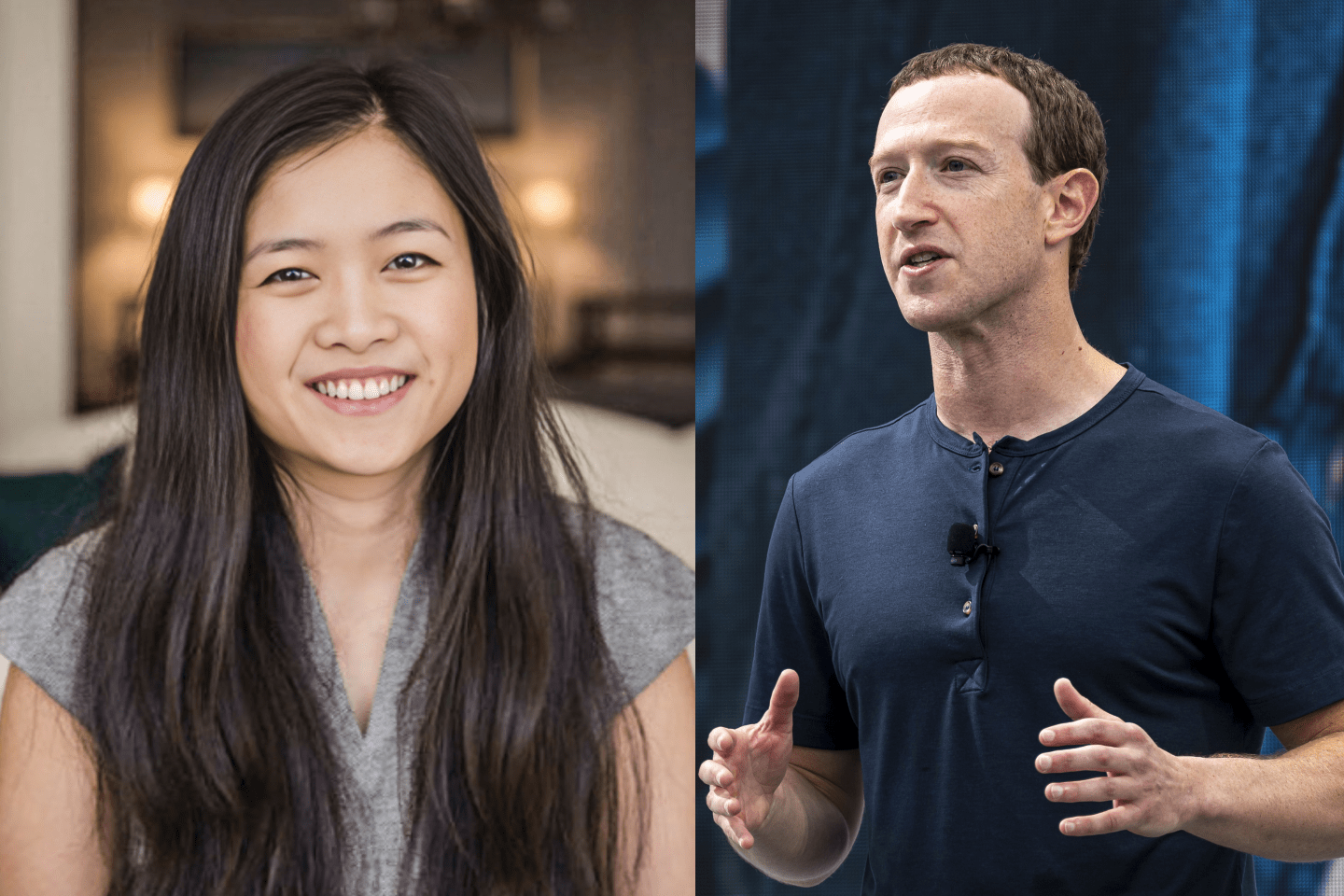

Intel CEO Brian Krzanich outlined the chipmaker's foray into the red-hot field of artificial intelligence on Tuesday and said that Facebook (FB) has helped the company prepare for the new chip's debut.

“We are thrilled to have Facebook in close collaboration sharing its technical insights as we bring this new generation of AI hardware to market,” Krzanich wrote. An Intel spokesperson wrote to Fortune in an email that while the two companies are collaborating, they do not have a formal partnership.

The Intel Nervana Neural Network Processor traces its origins to Intel's 2016 acquisition of the chip startup Nervana Systems. That deal was meant to help Intel develop its own semiconductor technology tailored for tasks like deep learning, which require heavy computer processing to build software that can spot and react to patterns in enormous quantities of data.

In the absence of AI-optimized chips, companies like Walmart that want to power deep learning tasks in their internal data centers have been turning to rival chipmakers like Nvidia (NVDA), which builds graphics processing units (GPUs).

With so much hype around artificial intelligence and its potential to become a big business, the new chip represents a key moment for Intel, a company that missed out on previous technology trends like mobile computing.

“A company like Intel doesn’t announce a new class of products very often,” said Naveen Rao, who leads Intel’s new chip project. “This is really a historical point in the history of computation.”


Rao, who was Nervana’s CEO, said Intel will eventually sell the chip to customers in two different ways.

In one method, Intel (INTC) will sell a data center appliance containing several of the new Nervana Neural Network Processors as well as Intel’s CPUs to customers wanting to run deep learning projects within their own data centers. Customers will also be able to rent access to the chips via Intel’s cloud data centers, which is similar to the way rival Nvidia sells access to its AI-tailored GPUs.

Intel will not sell the chip by itself, Rao said. He explained that running AI projects isn’t as simple as installing a new processor in a computer. Intel’s new data center appliance, he said, was built to accommodate most deep learning projects and is intended for companies just dipping their toes into the space.

Eventually, Intel could make a more customizable version of the AI data center box, but for now it’s sticking with its all-in-one package.

“This is a starting point,” Rao said. “I do anticipate more of an ecosystem with plug-and-play modules.”