While industry analysts and vendors have long touted the promise of analyzing big data, building systems to do this has not always been easy. It has become especially difficult as companies try to analyze data in new ways, such as adding real-time analysis to what was previously a nightly job, or bringing in new types of data from previously unavailable sources like social media.

The problem is essentially one of too many tools for too many jobs. When every type of storage or database system is designed for a certain type of application or a certain type of data, you can’t choose just one and expect it to do everything. On the other hand, tying together various technologies (likely built by different companies or open source communities) into a functional system can be an engineering burden.

On Monday, Microsoft (MSFT) and Cloudera each announced new storage systems designed to tackle this problem. They’re kind of like the big data versions of a laptop computer: They might not be ideal for edge cases involving extreme scale or speed, but they will get the job done in most cases.

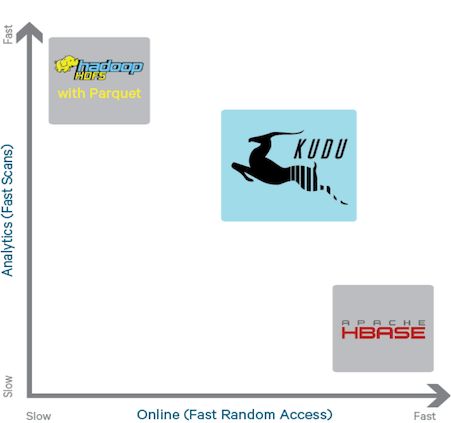

Cloudera’s new open source system, called Kudu, fills a missing middle in the Hadoop ecosystem. That ecosystem already has systems for storing huge amounts of data, systems for querying huge amounts of historical data in near real time, and systems for carrying out fast database operations on live data. Cloudera intends for Kudu to bridge these gaps, acting as a storage system that can update itself in real time as new data comes in, so users can analyze that data while it is still fresh.

Kudu was jointly developed with Intel (INTC), the giant chipmaker that made a nearly billion-dollar investment in Cloudera in 2014. The resulting engineering relationship, the companies claim, helps them develop technologies, Kudu included, that can use new processor, memory and other Intel-produced components as they hit the market. And, indeed, Kudu is designed to use both RAM and flash for storage, should users prefer the fastest connection between their processors and their data.

Microsoft’s new storage system is called Azure Data Lake Store and is available as part of the company’s Azure cloud computing platform. It is built with the Hadoop Distributed File System at its core, but it also uses design principles from Cosmos, the internal storage system that runs most of Microsoft’s web businesses (such as Bing, Office 365 and Xbox Live), T. K. “Ranga” Rengarajan, Microsoft’s corporate vice president for data platforms, told Fortune. Azure Data Lake Store can take in huge amounts of data in its raw format, which is then available for processing by tools such as Apache Spark or Microsoft’s HDInsight Hadoop service.

Rengarajan said Azure Data Lake Store also allows for easy sharing of data between different people and teams within a company.

Microsoft also announced on Monday a new cloud-based data-analysis service called Azure Data Lake Analytics, which Rengarajan described as the big data version of signing up for a webmail service like Gmail or Outlook. It provides all the capabilities of open source technologies such as Impala or Apache Hive, but it has a user-friendly interface, and Microsoft manages all the underlying server infrastructure. Azure Data Lake Analytics can work with data across a slew of different Microsoft data stores, and it includes a new query language called U-SQL that lets users combine standard SQL with their own code for specialized operations.

You can look at all of these efforts as part of the continuing evolution of big data from something done by skilled engineers and data scientists at large Internet companies into something that’s doable by regular companies and regular data analysts. And as data keeps piling up and new sources such as sensors keep pumping out more of it, you could argue the timing of tools that make it easier to handle couldn’t be better.
