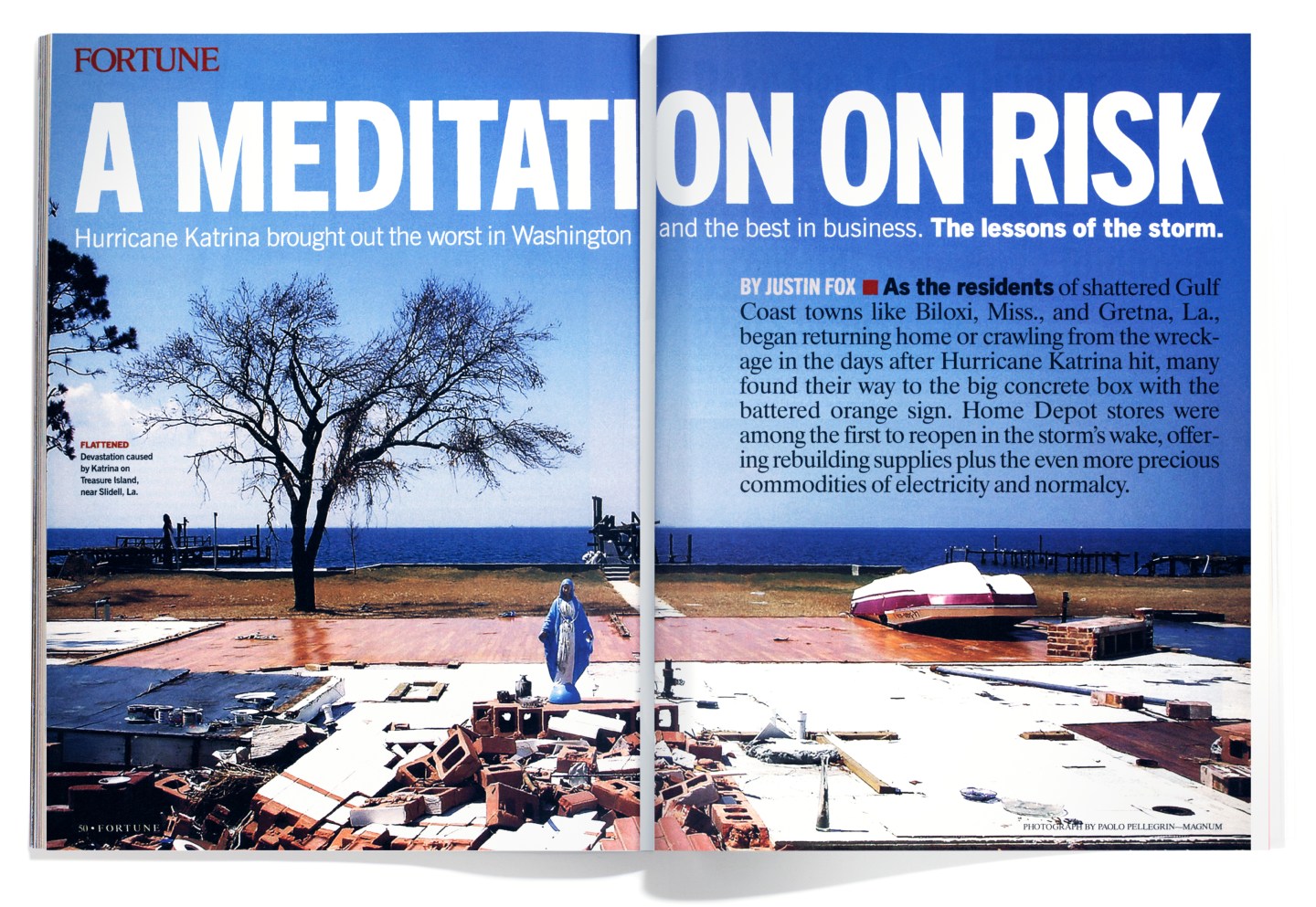

As the residents of shattered Gulf Coast towns like Biloxi, Miss., and Gretna, La., began returning home or crawling from the wreckage in the days after Hurricane Katrina hit, many found their way to the big concrete box with the battered orange sign. Home Depot stores were among the first to reopen in the storm’s wake, offering rebuilding supplies plus the even more precious commodities of electricity and normalcy.

That was no accident. Home Depot had started mobilizing four days before Katrina slammed into the coast. Two days before landfall, maintenance teams battened down stores in the hurricane’s projected path, while electrical generators and hundreds of extra workers were moved into place along both sides of it. At the company’s hurricane center in Atlanta, staff from different divisions—maintenance, HR, logistics—worked 18 hours a day to cut through logjams and get things where they needed to be.

A day after the storm, all but ten of the company’s 33 stores in Katrina’s impact zone were open. Within a week, it was down to just four closed stores (of nine total) in metropolitan New Orleans. “We always take tremendous pride in being able to be among the first responders,” says Home Depot CEO Bob Nardelli.

Other big businesses would tell similar stories. They prepared well, they responded quickly—and they did so because Katrina was exactly the kind of event for which well-run corporations prepare themselves. Home Depot even reorganized geographically this year to match its divisions with the main disasters they have to deal with: earthquakes and wildfires in the West, blizzards in the North, and hurricanes in the South.

The government didn’t come through Katrina nearly as well, of course. Local and federal officials failed to adequately prepare for the storm and failed to react quickly after it hit.

But this issue of Fortune is not about who did what wrong. It is about how we cope with the realities of risk, uncertainty, and crisis. Often we simply don’t: Risk taxes the wiring of our brains, which evolved at a time when reacting to a tangible present was far more important than planning for an uncertain future. Risk overwhelms the decision-making capabilities of our elected officials, who also have incentives to focus more on today than tomorrow. Markets and corporations may be the greatest risk processors mankind has yet devised, but that isn’t to suggest that corporations can or should supplant government. Elected officials have it much tougher than CEOs: Home Depot had to get its stores open, not evacuate a city. As of Sept. 13, corporations had donated $312 million for Katrina relief; the federal government may spend close to a thousand times that much.

That said, Katrina is an especially poignant study in risk because the catastrophe was so widely foreseen. The Army Corps of Engineers told anyone who asked that the chance in any given year that a storm would inundate New Orleans was between one in 200 and one in 300. Over the 77 years of the average American’s life expectancy, one-in-200 annual odds snowball to one-in-three. Or try this simple (and oversimplified) cost-benefit analysis: If the cost of a flooded New Orleans is $100 billion, and the annual chance of that flood is one in 200, then it would pay to spend up to $500 million a year (one-200th of $100 billion) to keep such a flood from happening. It would also more than pay, probabilistically speaking, to undertake a forced evacuation whenever a Category 4 or 5 storm threatens the city. Yet nothing of the sort was done.
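
The arithmetic in that paragraph can be checked with a short sketch. It assumes each year's flood risk is independent of every other year's, which is a simplification the Corps figures do not themselves claim. The function names are illustrative, and the $100 billion loss is the article's own round number.

```python
def cumulative_chance(annual_chance: float, years: int) -> float:
    """Chance of at least one flood over `years`, assuming independent years."""
    return 1.0 - (1.0 - annual_chance) ** years


def break_even_annual_spend(loss: float, annual_chance: float) -> float:
    """Expected annual loss: the most it pays to spend each year on prevention."""
    return loss * annual_chance


ANNUAL_CHANCE = 1 / 200   # the riskier end of the Corps' range
LIFETIME_YEARS = 77       # average American life expectancy cited above
FLOOD_COST = 100e9        # hypothetical $100 billion loss

lifetime_chance = cumulative_chance(ANNUAL_CHANCE, LIFETIME_YEARS)
annual_budget = break_even_annual_spend(FLOOD_COST, ANNUAL_CHANCE)

print(f"Lifetime chance of a flood: {lifetime_chance:.0%}")
print(f"Break-even annual spending: ${annual_budget / 1e6:,.0f} million")
```

Under those assumptions the lifetime chance comes to about 32%, or roughly one in three, and the break-even budget to $500 million a year, matching the figures in the text.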

“This is a case where they did an exceptionally good job on the natural science and a really poor job on the social science,” says Baruch Fischhoff, professor of social and decision sciences at Carnegie Mellon University and current president of the Society for Risk Analysis. That’s partly because elected officials have another set of probabilities to consider. Say you’re at the beginning of a four-year term. Over that span the one-in-200 annual chance of a New Orleans flood grows to one in 50. That’s still slim odds that spending big bucks on better levees will pay off during your term—and you won’t get much credit even if it does. Force residents onto buses and drive them out of town only to see the hurricane miss the city, and you’re in real political trouble, guaranteed.
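
To see why the politician's horizon matters, the same one-in-200 annual figure can be restated as "one in N" odds over different spans. This is a rough sketch that again assumes independent years. The helper name and the list of horizons are illustrative choices, not anything from Fischhoff.

```python
def one_in_n_odds(annual_chance: float, years: int) -> float:
    """Return N such that the chance of a flood within `years` is one in N."""
    chance = 1.0 - (1.0 - annual_chance) ** years
    return 1.0 / chance


ANNUAL_CHANCE = 1 / 200

# Horizons: a single year, one four-year term, two terms, a 77-year lifetime.
for years in (1, 4, 8, 77):
    odds = one_in_n_odds(ANNUAL_CHANCE, years)
    print(f"{years:>2} years: about one in {odds:.0f}")
```

Over a four-year term the odds are about one in 50, as the text says. Over a lifetime they shrink to about one in three, which is the gap between what an official faces and what a resident faces.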

There is a place where spending billions of dollars to keep levees from breaking does amount to good politics. It is the Netherlands, where people were shocked to learn that Americans had accepted a one-in-200 chance of New Orleans flooding. It has been a matter of Dutch law since the late 1950s that the country’s sea defenses be capable of turning back all but a once-in-10,000-years storm.

That isn’t a result of foresight. It is driven by what happened in 1953, when a storm surge overwhelmed the dikes protecting the province of Zeeland in the southwestern corner of the country, killing 1,800 people and 200,000 cows, horses, pigs, and other livestock. We give potential disasters far more attention if we have witnessed them. One can be sure that when New Orleans and its levees are rebuilt, the once-in-200-years standard will no longer be deemed even remotely adequate. The risk of a big hurricane’s hitting New Orleans is no greater now than it was a year ago, so why would people be willing to pay for protection now that they weren’t then? Because that’s the way our brains work.

In 1969, Israeli psychologists Daniel Kahneman and Amos Tversky began a now legendary research project to catalog the strange quirks of human decision-making about risk. Rather than calculating probabilities, they found most people take shortcuts based on experience, emotion, and various biases. In the past few years scholars have discovered that such decisions appear to be the product of an uneasy collaboration between the uniquely human, calculating parts of the brain and the more primitive, reactive areas. Sometimes the collaboration just doesn’t work: A recent study by researchers at Carnegie Mellon, Stanford, and the University of Iowa found that people with stroke- or disease-induced damage to the emotional areas of the brain outperformed those with undamaged brains in an investing game, because emotions didn’t get in the way of their market calls.

It was in an effort to rein in our undamaged brains that the science of risk analysis and management evolved. In his history Against the Gods: The Remarkable Story of Risk, former money manager Peter Bernstein describes how until the 17th century, disaster and good fortune were attributed to the whims of the gods. Then, thanks to the work of a few pioneering scholars, “fate” became statistics and probabilities. This approach really took off in the social sciences and the business and investing worlds after World War II, when scholars steeped in the probabilistic wartime discipline of operations research began to look for peacetime applications.

That engineering approach to risk, Bernstein says, made modern capitalism possible. But the engineers and the corporate-risk managers and the quant investing whizzes can still get it wrong. Sometimes it’s just bad luck: A week before Katrina struck, the Hilton New Orleans Riverside hosted an offshore drilling conference. Oil executives on a panel titled “What Has the Industry Learned From Ivan?” discussed how the hurricane that blew through the Gulf last September had taught them they needed to do a much better job of securing rigs so they wouldn’t blow over. Had they done anything about it? Not really. They had time, they figured. Risk analysis told them it was highly unlikely that another storm would batter the offshore fields anytime soon. Oops.

Beyond bad luck, there is something about approaching risk as a mere engineering challenge that invites disaster, or at least disappointment. For example, while advances in automotive safety like airbags, antilock brakes, and limited-access freeways have reduced deaths per mile driven, they have had little impact on overall driving deaths. How can that be? The answer may have to do with what Gerald Wilde, psychology professor emeritus at Queen’s University in Canada, calls “target risk.” We internally set a level of risk that is acceptable to us, and when new technologies reduce that risk, we alter our actions to get risk back up to our target level. And so, secure in the knowledge of our airbags and all-wheel drive, we drive faster and more often. That is in itself a benefit. But it keeps the “safety” innovations from making us any safer.

Wilde also applies his theory to flood control. Wilde grew up in the Netherlands, and as a conscript in the Dutch army in 1953 was sent to Zeeland to assist in relief efforts. He remembers watching the bodies—human and animal—float by in the floodwaters, and finds the once-in-10,000-years risk standard set by the Dutch government reasonable. But he also argues that a century of federal flood-control efforts in the U.S. have led to no discernible reduction in deaths and property damage because the new levees and dams have lured more people to floodplains.

In Holland, as more frequent floods have been banished, the risk of a cataclysmic inundation—while still small—has grown. Some 50% of Dutch land area is below sea level, and a growing majority of the country’s people and businesses are concentrated in the lowest-lying western provinces. The diked inlands of those provinces are sinking ever farther below sea level because they aren’t being replenished by the silt from river floods. And it’s likely that global warming will drive up the sea level. Some Dutch experts have begun urging their countrymen to at least contemplate how they would cope with the flooding of the country’s heartland—a possibility banished from the national consciousness for half a century. “We’re not prepared for floods now,” says Huib de Vriend, director of science and technology at WL Delft Hydraulics, a nonprofit institute that is the leading center of knowledge on keeping lowlands dry. “We’ve become much more vulnerable.”

Risk is like that. You stamp it out in one place, and suddenly it appears somewhere else. Bounteous evidence of this phenomenon can be found in finance. Hedge funds, for instance, are judged by their risk-adjusted performance. They adopt trading strategies that are likely to make money regardless of whether the overall market is rising or falling—betting, say, that similar securities will converge in price. Because there are so many funds doing the same thing, such trades are seldom very profitable. So to get a decent return, funds leverage their bets with borrowed money. The result is an investment vehicle that looks less risky—that is, less volatile—than a standard mutual fund but is in fact at far greater risk of blowing up if its trades go sour and its lenders want their money back. That’s what happened at Long-Term Capital Management, the famous hedge fund that collapsed in 1998.

LTCM’s highly advanced risk models entirely failed to predict its demise. Maybe they were just bad models. Or maybe you can’t get risk right without a touch of intuition. Intuition, of course, is a product of the same screwy brain processes that made us all buy Cisco in early 2000. But still: After Sept. 11, 2001, says Carnegie Mellon’s Fischhoff, himself a former student of Kahneman and Tversky, there was much mockery by the risk engineers of people who drove rather than got on airplanes. The risk of death per mile traveled is far higher in a car than on a plane, they argued, so why irrationally opt for more danger? Fischhoff counters: “Car-accident statistics are dominated by people driving drunk early in the morning, not somebody driving a Volvo to Boston in the daytime. Also, after Sept. 11 nobody knew what the relevant aviation statistics were.” In other words, even if the decision not to fly was driven by emotion, it managed to take in nuance that a quantitative risk analysis did not.

If they hadn’t made risk assessments and spun scenarios, companies like Home Depot, Wal-Mart, and FedEx would have stood no chance of getting things going so quickly in the hurricane zone. Ignorance and denial are not viable alternatives. What works in the face of risk is a combination of knowledge, humility, and willingness to change gears. Appeals to the gods probably don’t hurt either.

Additional reporting by Julia Boorstin and Barney Gimbel

A version of this article appears in the Oct. 3, 2005 issue of Fortune with the headline “A meditation on risk.”