Facebook wants to speed up artificial intelligence research for everyone by making the plans for a massively powerful box available to any company that wants to accelerate its efforts to build better facial or voice recognition. The box, called Big Sur, is twice as fast as Facebook’s existing gear and will allow the company to train neural networks that are twice as large. In AI, larger neural networks generally perform better.

The net result of this speed boost is that the social network could extend its uncanny facial recognition abilities to your videos in addition to your photos. And because Facebook is contributing these designs to the Open Compute Project, much as it has done with its server designs and networking gear, other companies can take the designs and build their own AI hardware, or even tweak the Big Sur designs to make them better.

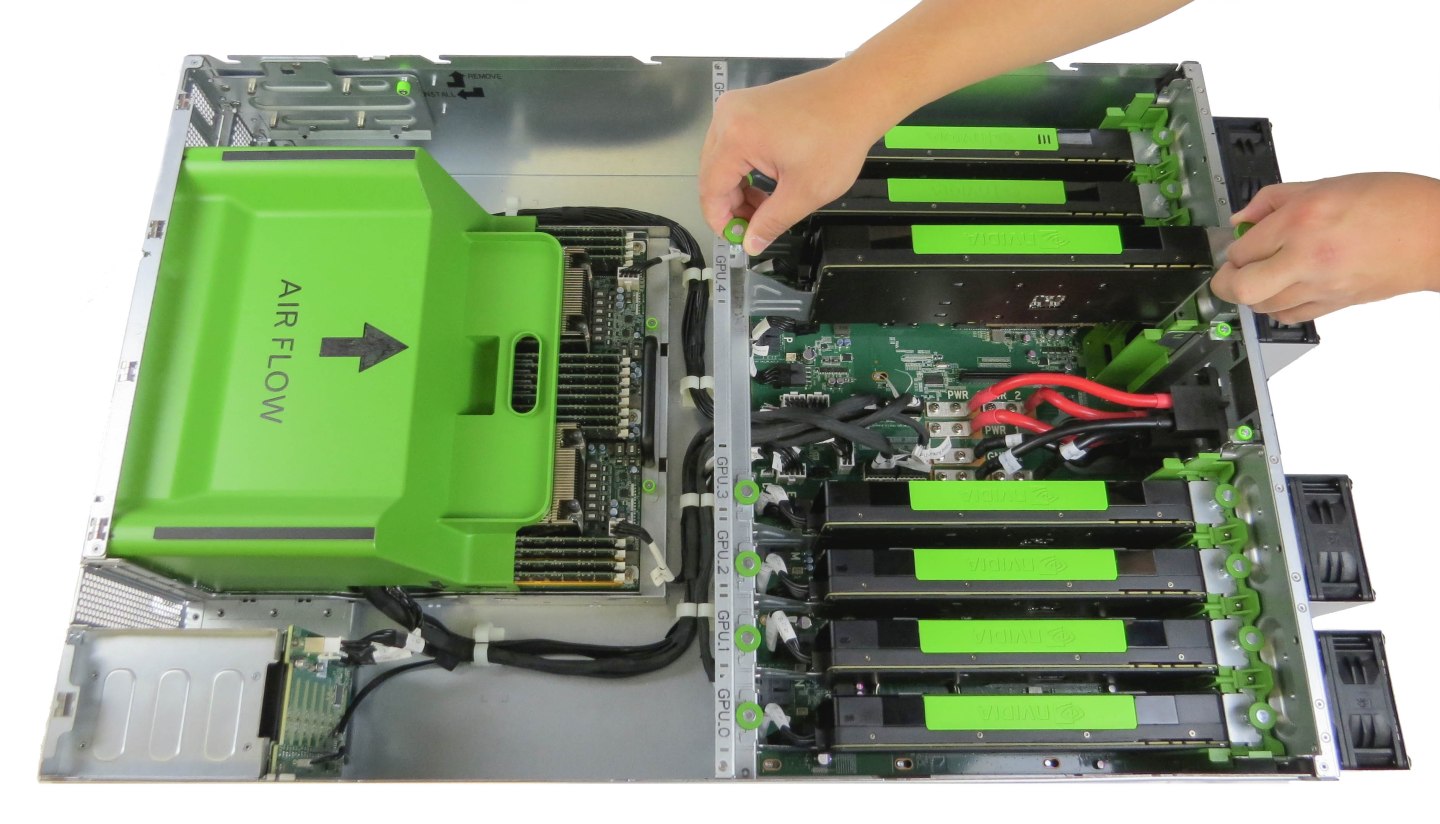

Building hardware for machine learning and artificial intelligence is tough. Facebook (FB) has spent 18 months perfecting this design, and is using some new chips from Nvidia (NVDA) to power the boxes. Serkan Piantino, director of engineering at Facebook’s AI Research, said on a conference call explaining the news that the graphics processing chips used in the machines generate so much heat and draw so much power that the team that melted its first enclosure would have gotten a steak dinner. But the real challenge in building the hardware wasn’t reducing the heat from the semiconductors. It was the amount of power they consumed.

Yann LeCun, the head of AI research at Facebook, said that negotiating with the data center administrators to supply the power the machines needed was a significant issue. Each box contains eight GPUs that consume 300 watts each, which is a large power draw. However, Facebook has managed to make the power and heat of the boxes work in its own data centers, which means they should work well in other modern data center facilities.
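For a rough sense of scale, the figures above can be turned into a back-of-the-envelope calculation. The sketch below counts only the GPUs, using the eight-GPU and 300-watt numbers cited in this article; it ignores CPUs, fans, and other components, which are not quantified here, and the `boxes` parameter is a hypothetical added purely for illustration.

```python
# Figures from the article: 8 GPUs per box, 300 watts per GPU.
GPUS_PER_BOX = 8
WATTS_PER_GPU = 300


def gpu_power_watts(boxes: int = 1) -> int:
    """Return the total GPU power draw in watts for a number of boxes.

    Only the GPUs are counted; the rest of the system is not included.
    """
    return boxes * GPUS_PER_BOX * WATTS_PER_GPU


if __name__ == "__main__":
    # A single box: 8 GPUs x 300 W each.
    print(gpu_power_watts())  # 2400
```

By this estimate, the GPUs in a single box draw about 2,400 watts, which helps explain why power delivery was the sticking point.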

These boxes also have the advantage of being about 30% cheaper than existing hardware that is custom-built for AI. For example, earlier this week a startup called Minds.ai launched an Amazon Web Services-like neural-network-as-a-service offering, built on custom hardware, for companies that want to speed up their neural network training. As for newer types of machine learning such as deep recommendation learning or attention learning, LeCun said there aren’t a lot of specific changes needed on the software side, so boxes like Big Sur could be used with some software modifications.

It may be that in another year we’ll see that while 2015 was a big year for advancements in artificial intelligence, it was just the start of something much bigger, enabled by a new generation of powerful hardware.
