Spy Tech That Reads Your Mind

Leaks, theft, and sabotage by employees have become a major cybersecurity problem. One company says it can spot “insider threats” before they happen—by reading all your workers’ email.

On any given morning at a big national bank or a Silicon Valley software giant or a government agency, a security official could start her day by asking a software program for a report on her organization’s staff. “Okay, as of last night, who were the people who were most disgruntled?” she could ask. “Show me the top 10.”

She would have that capability, says Eric Shaw, a psychologist and longtime consultant to the intelligence community, if she used a software tool he developed for Stroz Friedberg, a cybersecurity firm. The software combs through an organization’s emails and text messages—millions a day, the company says—looking for high usage of words and phrases that language psychologists associate with certain mental states and personality profiles. Ask for a list of staffers who score high for discontent, Shaw says, “and you could look at their names. Or you could look at the top emails themselves.”

Many companies already have the ability to run keyword searches of employees’ emails, looking for worrisome words and phrases like embezzle and I loathe this job. But the Stroz Friedberg software, called Scout, aspires to go a giant step further, detecting indirectly, through unconscious syntactic and grammatical clues, workers’ anger, financial or personal stress, and other tip-offs that an employee might be about to lose it.

To measure employees’ disgruntlement, for instance, it uses an algorithm based on linguistic tells found to connote feelings of victimization, anger, and blame. For instance, unusually frequent use of the word me—several standard deviations above the norm—is associated with feelings of victimization, Shaw says. Why me? How can you do that to me? Anger might be signaled by unusually high use of negatives like no, not, never, and n’t, or of “negative evaluators” like You’re terrible and You’re awful at that. There might be heavy use of “adverbial intensifiers” like very, so, and such a or words rendered in all caps for emphasis: He’s a ZERO.
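
Stroz Friedberg has not published Scout's algorithms, so the following is only a minimal Python sketch of the general idea described above: count how often each kind of marker appears in a message, then ask how many standard deviations a writer's rate sits above the norm. The word lists, the function names `marker_rates` and `z_score`, and the use of a simple population standard deviation are all assumptions made for illustration.

```python
import re
from statistics import mean, pstdev

# Hypothetical word lists built from the examples quoted in the article.
NEGATIVES = {"no", "not", "never"}
INTENSIFIERS = {"very", "so", "such"}

def marker_rates(text):
    """Return the per-word rate of each marker category in one message."""
    # Keep straight and curly apostrophes so contractions stay whole.
    tokens = re.findall(r"[A-Za-z'’]+", text)
    total = len(tokens) or 1  # avoid dividing by zero on empty text
    lower = [t.lower() for t in tokens]
    counts = {
        "me": sum(t == "me" for t in lower),
        "negatives": sum(
            t in NEGATIVES or t.endswith(("n't", "n’t")) for t in lower
        ),
        "intensifiers": sum(t in INTENSIFIERS for t in lower),
        # Words of two or more letters written entirely in capitals.
        "all_caps": sum(len(t) > 1 and t.isupper() for t in tokens),
    }
    return {name: count / total for name, count in counts.items()}

def z_score(value, population):
    """How many standard deviations `value` lies from the population mean."""
    mu = mean(population)
    sigma = pstdev(population)
    return 0.0 if sigma == 0 else (value - mu) / sigma
```

In use, one would compute `marker_rates` for every sender, collect the rates for a category such as `"me"` across the organization, and rank senders by `z_score`. A real tool would also need to handle quoted replies, signatures, and baseline differences between job roles, none of which this sketch attempts.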

It’s not illegal to be disgruntled. But today’s frustrated worker could engineer tomorrow’s hundred-million-dollar data breach. Scout is being marketed as a cutting-edge weapon in the growing arsenal that helps corporations combat “insider threat,” the phenomenon of employees going bad. Workers who commit fraud or embezzlement are one example, but so are “bad leavers”—employees or contractors who, when they depart, steal intellectual property or other confidential data, sabotage the information technology system, or threaten to do so unless they’re paid off. Workplace violence is a growing concern too.

Though companies have long been arming themselves against cyberattack by external hackers, often presumed to come from distant lands like Russia and China, they’re increasingly realizing that many assaults are launched from within—by, say, the quiet guy down the hall whose contract wasn’t renewed. The most spectacular examples have been governmental—the massive 2010 data dump of more than 700,000 classified files onto WikiLeaks by Chelsea Manning (then known as Pfc. Bradley Manning) and the leaks by former intelligence contractor Edward Snowden in 2013. While those events were sui generis, they opened the world’s eyes to the breathtaking scope of every organization’s vulnerability.

About 27% of electronic attacks on organizations—public and private—come from within, according to the latest annual cybercrime survey jointly conducted by CSO Magazine, the U.S. Secret Service, PricewaterhouseCoopers, and the Software Engineering Institute CERT program. (CERT is a Defense Department–funded cybercrime research center at Carnegie Mellon University.) About 43% of the 562 participants surveyed said their organizations had endured at least one insider attack in the previous year. Though targets of these assaults often keep the incidents secret, known victims in recent years include Morgan Stanley (MS), AT&T (T), Goldman Sachs (GS), and DuPont (DD).

Insider threats are now sufficiently well recognized that their victims—especially financial institutions—may face regulatory sanctions as well as civil liability for not having taken adequate steps to prevent them. In June the Securities and Exchange Commission fined Morgan Stanley $1 million for failing to prevent a rogue financial adviser from compromising 730,000 customer accounts, even though the bank itself caught and reported the employee, who later pleaded guilty to a federal crime.

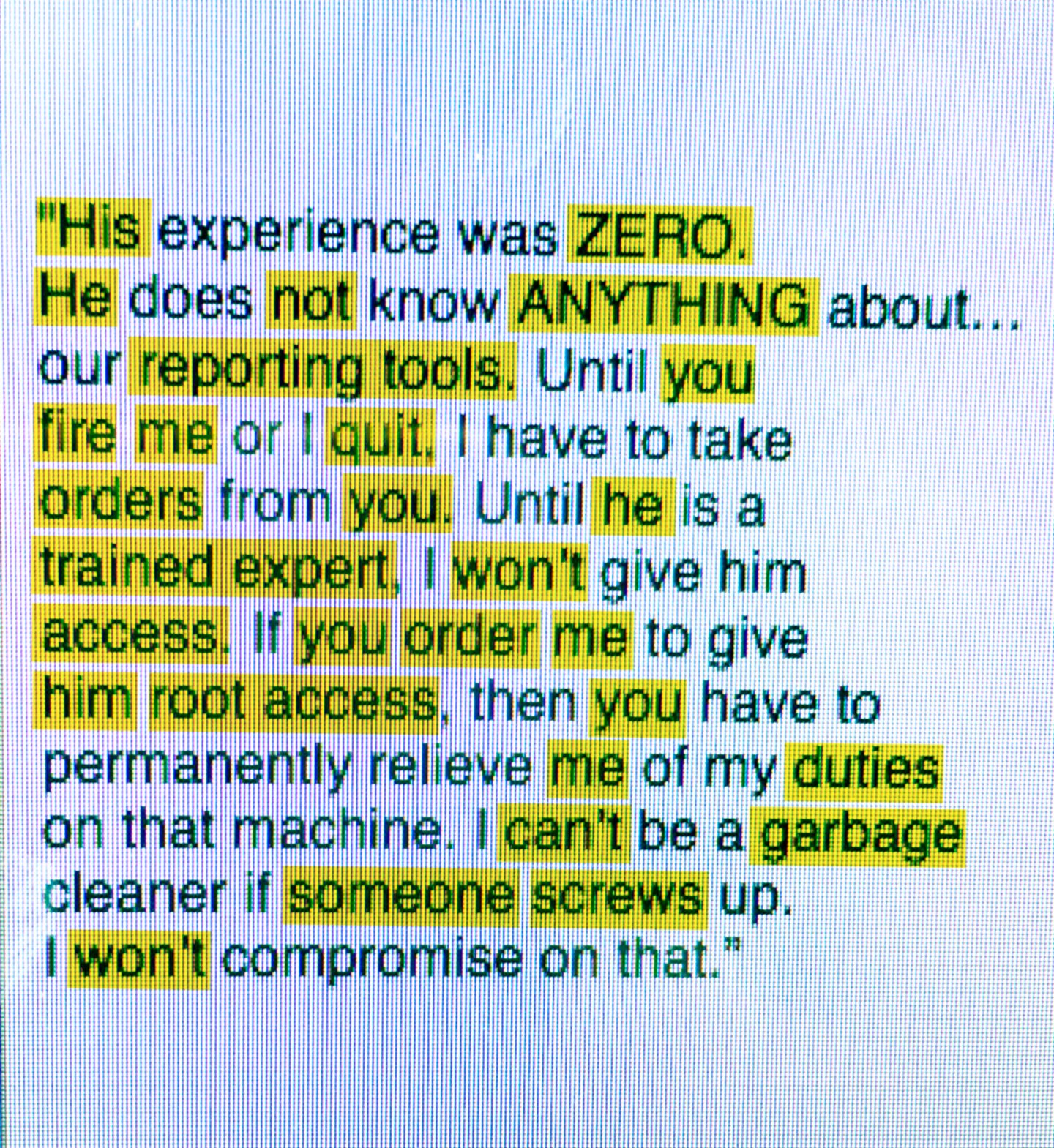

Psycholinguistics: Finding Clues in an Ordinary Email

This text was adapted from actual emails that a systems administrator, working under contract for a bank, wrote to his supervisor. After the man later lost his position, he sabotaged the bank’s servers. The illustration below shows which words Stroz Friedberg’s Scout software would pick up and “score,” using psycholinguistic principles, if it analyzed the email today. Here’s an explanation of why those words raise red flags, especially when they appear unusually frequently. —R.P.

- “Negatives” like no, not, and n’t may signal anger, which Scout treats as a component of disgruntlement.

- The word me used in excess can signal victimization, another component of disgruntlement.

- Direct references, especially you, can signal blame, yet another sign of disgruntlement.

- Words in all caps are “intensifiers” and can signal anger. Strong words and phrases (like garbage and screws up) are intensifiers and “negative evaluators,” which both signal anger.

- Since much anger and negativity in emails relate to marital conflict, which is often not the employer’s concern, Scout uses words and phrases relating to employment, like fire, quit, and root access, as a filter. A client can opt to see only emails that contain such references.
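
The employment filter in the last item above can be sketched in a few lines of Python. This is an illustration only, not Scout's implementation: the term list contains just the three examples the sidebar names, and the helper names `mentions_employment` and `employment_only` are hypothetical.

```python
import re

# Assumed term list: only the three examples named in the sidebar.
EMPLOYMENT_TERMS = ("fire", "quit", "root access")

# Whole-word, case-insensitive match, so "fireplace" does not count as "fire".
_EMPLOYMENT_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(term) for term in EMPLOYMENT_TERMS) + r")\b",
    re.IGNORECASE,
)

def mentions_employment(text):
    """True if the message contains at least one employment-related term."""
    return _EMPLOYMENT_PATTERN.search(text) is not None

def employment_only(messages):
    """Keep only the messages that mention employment."""
    return [m for m in messages if mentions_employment(m)]
```

Exact matching like this would miss inflected forms such as "fired" or "quitting"; a fuller version would presumably stem words or use a longer, client-tuned vocabulary.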

Since 2011, government agencies that handle classified information have been required to have formal insider-threat programs in place. And in May that rule was extended to private contractors who handle such data—some 6,000 to 8,000 companies, according to Randall Trzeciak, who heads CERT’s Insider Threat Center. With increasing awareness of the problem, Trzeciak notes, the tools marketed to combat insider risk have proliferated. At the annual RSA conference on security two years ago, he says, only about 20 vendors displayed such wares. At this year’s, in February, he counted more than 125.

The vast majority of these tools, known as technical indicators, provide ways to monitor computer networks, prevent data loss, alert security to suspicious conduct, or even record keystrokes and take video of individual computer screens. Such solutions let an organization see, for instance, who’s logging onto her computer at odd hours, messing around with electronic tags that demark confidential information, or simply departing from routine in some sudden, marked fashion. (See below, “Tools for Stopping the Enemy Within.”)

Still other tools are available to comb through employees’ emails, looking for keywords. But Scout appears to be the email-scanning tool most specifically and ingeniously tailored to try to sniff out insider threats before they occur.

Scout was soft launched as a client service by Stroz Friedberg in late 2014, though the firm has long used earlier versions for internal investigations. The firm was founded in 2000 by Ed Stroz, a 16-year FBI veteran in Manhattan, and Eric Friedberg, an 11-year Brooklyn federal prosecutor. Each had led his office’s computer crime unit. Today, with more than 500 employees in 14 offices, the firm is one of the leading outfits of its kind, with specialties in digital forensics, incident response, and e‑discovery. Though most of its assignments are confidential, it claims to have worked for 30 of the Fortune 50, and publicly identified clients have included Target (TGT) and Neiman Marcus (after their massive data breaches), Facebook (FB), Google (GOOGL), and the Justice Department.

As impressive as Stroz Friedberg’s credentials are, discussion of its Scout product must come with caveats. The firm declined to introduce Fortune to a single client using it, notwithstanding our promise to protect the organization’s identity. (Companies don’t like to discuss their insider-threat programs, in part because doing so makes workers feel mistrusted.) While the firm described instances in which Scout had been used as a forensic tool—say, identifying the sources of anonymous threats—it furnished no specific case in which Scout proactively warded off an insider attack. Stroz Friedberg did cite an instance in which it said that the system had flagged an employee’s extreme stress; upon follow-up, officials learned that the person was planning a suicide. They intervened, and Scout may have saved the worker’s life.

Ed Stroz acknowledges that Scout does not supplant the many technical tools already available to fight insider threat. But those solutions help only after someone is already “touching, reading, copying, and moving files” he’s not supposed to, he says. He likens Scout’s aspirations to those of the FBI after the attacks on the World Trade Center. “After 9/11 it became ‘disrupt and prevent,’ not just ‘react and investigate,’ ” he says. “How do you get in front of something and protect somebody from themselves?” The answer is through language. “Language is being used by everybody,” he observes. “Google is using it to sell you jeans.” Why not use it to “get to the left” of the actual event—getting ahead of it on a metaphorical timeline, in other words—“so that disasters don’t happen?”

Eric Shaw, 63, practices a rare specialty called political psychology. After earning his Ph.D. from Duke, he did a stint with the Central Intelligence Agency, from 1990 to 1992, and then worked as a consultant to other intelligence offices while building a private practice and teaching at George Washington University. (Shaw says he still spends two days a week consulting for an intelligence agency, which he won’t identify but which, he says, has installed Scout to monitor its own personnel.)

Political psychologists draw up mental-health profiles of foreign leaders—Kim Jong-Un, say—to assist policymakers at the State and Defense departments, intelligence agencies, and the White House. Is a hostile chief of state a madman, or can he be reasoned with? If the latter, what is the best way to approach him? These psychologists can’t examine their patients on the couch. One tool they use instead is language. They look for clues to a leader’s personality in his unconscious speech patterns as captured at public appearances.

In the late 1990s, Shaw recounts, the Defense Department asked him to study insider cyberattacks after a couple of alarming incidents, including one in which an administrator at a Navy hospital encrypted patient records and held them for ransom. The FBI computer crime squads had the most experience with such crimes, so Shaw was put in touch with Ed Stroz, who then headed the flagship unit in Manhattan.

The first case file that Stroz showed Shaw involved a systems administrator at a bank who had butted heads with his supervisor. The supervisor eventually terminated him, prompting him to leave behind a “logic bomb” embedded in the network, which exploded and shut down the bank’s servers. Shaw examined the emails the two had exchanged before the termination and then marked them up by hand to show Stroz the linguistic red flags.

“It was fascinating,” recalls Stroz. At the FBI, he focused on white-collar crime, a realm in which the perpetrator’s state of mind is often the only contested issue. Shaw’s analysis provided entrée into that realm. “At some point,” Shaw continues, “[Stroz] is watching me code the emails, and he said, ‘You know, we have computers that will do this now.’ That was the beginning of the idea of creating this psycholinguistic software.”

Stroz left the bureau in 2000 and co-founded Stroz Friedberg. A few months later he contacted Shaw, after receiving client calls that required forensic linguistic expertise. These were often “anonymous author” cases, in which a client was receiving threats or demands. Shaw would try to identify the perpetrator by comparing distinctive aspects of his writing style to those of a series of suspects. He relied in part on traditional forensic techniques—distinctive formatting conventions, odd diction, telltale misspellings—but also on the linguistic principles political psychologists used. In a case written up in the New York Times in 2005, for instance, Shaw’s work helped identify a cyberextortionist who had been demanding $17 million from MicroPatent, a patent and trademark company he had hacked. (The perpetrator pleaded guilty and was sentenced to prison.)

To assist in analyzing writings, Stroz and Shaw developed an internal software tool, which they named WarmTouch. “Terrible name,” Stroz admits, “but the idea was, the keyboard exists only because human beings need a way to interface with the computer. The human being begins where he touches the keys.” Meanwhile, Shaw continued studying insider-risk cases, poring over case files at CERT’s Insider Threat Center. He looked for missed warning flags that preceded these crimes and then tried to design features that would enable WarmTouch to pick up the linguistic precursors of bad behavior.

To test and hone his hypotheses, he hid actual emails written by insiders prior to crimes in portions of a large, publicly available database of emails known as the Enron corpus. (The corpus consists of about 600,000 emails written by 175 Enron employees, the vast majority of them innocent of any wrongdoing, whose emails were collected by the Federal Energy Regulatory Commission during an investigation of market manipulation.) Shaw then had both human coders and WarmTouch use principles of language psychology to try to filter out red-flag emails without also catching an unmanageable number of false positives. The results, some of which were published in two articles in the peer-reviewed Journal of Digital Forensics in 2013, suggested that WarmTouch could be a useful, if imperfect, filtering tool. By late 2014, Stroz Friedberg was ready to offer the latest version, renamed Scout, to customers.
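
A test of this kind is usually scored on two numbers: how many of the planted emails the filter catches (recall) and how much of what it flags is actually a planted email rather than a false positive (precision). The Python sketch below shows that bookkeeping. It is not taken from Shaw's published studies; the function name and the message IDs are hypothetical.

```python
def precision_recall(flagged, planted):
    """Score a filter against a corpus seeded with known red-flag emails.

    flagged: IDs of messages the filter marked as worrisome.
    planted: IDs of the real insider emails hidden in the corpus.
    """
    flagged, planted = set(flagged), set(planted)
    true_positives = len(flagged & planted)
    # Precision: share of flagged messages that were really planted.
    precision = true_positives / len(flagged) if flagged else 0.0
    # Recall: share of planted messages the filter managed to flag.
    recall = true_positives / len(planted) if planted else 0.0
    return precision, recall

# Made-up IDs: four planted emails, five messages flagged.
planted_ids = {"p1", "p2", "p3", "p4"}
flagged_ids = {"p1", "p2", "p3", "e9", "e17"}
precision, recall = precision_recall(flagged_ids, planted_ids)
```

With these made-up inputs the filter catches three of the four planted emails and raises two false alarms. Tightening a filter's thresholds generally trades recall for precision, which is the balance the passage above describes.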

Scout uses about 60 algorithms and tracks a vocabulary list of about 10,000 words, though that list is fine-tuned for each client. About 50 of the algorithms focus on insider threat. The rest can be used for a variety of purposes, Stroz Friedberg maintains, including some nonforensic ones—like detecting intra-office strife, evaluating managers, and identifying emerging leaders. Scout is typically provided to clients with a service contract, calling for “licensed clinicians”—outside contractors overseen by Shaw—to interpret the results.

Tools for Stopping the Enemy Within

Cybersecurity pros have devised a range of technical tools to combat insider threats: data theft, fraud, and sabotage. Here are four categories of protection. —Robert Hackett

- SIEMs: Security information and event management is the art of monitoring all the data generated by a company’s security software and appliances. Information managers store info to be studied later; event managers create data feeds that staff can track in real time. SIEM players include Hewlett Packard Enterprise, IBM, and Splunk.

- Data Loss Prevention (DLPs): This technology spots—and blocks—unauthorized attempts to move around sensitive information. RSA, a cybersecurity unit owned by EMC (and soon Dell), has been winding down its DLP services, but other widely used products include Intel Security’s McAfee DLP, Comodo’s MyDLP, and the free and open source OpenDLP.

- Behavior Analytics: This nascent field combines data crunching and machine learning to pinpoint insider threats and compromised accounts. Analytics tools raise a flag whenever people’s actions deviate from a given norm. Companies that offer such analytics products include Rapid7, RedOwl, and Securonix.

- Activity Monitoring: Let’s say an employee triggers an alert—by, for example, removing a data tag on a document marked “company’s most valuable.” A monitoring tool would kick in and start recording his keystrokes, capturing screenshots, or disabling outgoing email traffic. Raytheon and Digital Guardian sell activity-monitoring tools.

To oversee the new product, Stroz Friedberg hired Scott Weber, who had previously been a partner at law firm Patton Boggs and headed the government business at big-data company Opera Solutions. “Scout is not dispositive,” Weber admits. “It’s not going to say that Carolyn’s going to come in tomorrow and steal, or that Scott’s going to commit an act of workplace violence.” What it does do, he continues, is “take a massive amount of information in an organization and filter it down to an operationally friendly pool.”

As an example, Weber displays a PowerPoint slide of Scout’s user interface tackling a data set of nearly 51 million emails and text messages from more than 69,000 senders. Weber says this represented, at the time, a full data set from one governmental client. When directed to search for aberrantly high scores across four insider-risk variables, Scout winnowed out just 383 messages from 137 senders, representing 0.0008% of the total data set.
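
The percentage can be checked with quick arithmetic. The Python snippet below assumes round totals of 51 million messages and 69,000 senders, since the article gives only "nearly" and "more than" for those figures; the helper name `share_percent` is hypothetical.

```python
def share_percent(part, whole):
    """Return `part` as a percentage of `whole`."""
    return part / whole * 100

# Assumed round totals; the article says "nearly 51 million" and "more than 69,000".
message_share = share_percent(383, 51_000_000)
sender_share = share_percent(137, 69_000)

print(f"{message_share:.4f}% of messages, {sender_share:.2f}% of senders")
# prints "0.0008% of messages, 0.20% of senders"
```

On those assumptions, the flagged senders make up a far larger share of their group, roughly one in 500, than the flagged messages do of theirs.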

In a real-life case, a human clinician would then pull up the actual emails, via Scout’s interface, and examine them individually. He would present any messages judged truly worrisome to the client. The client would then decide what action to take, says Weber, after drawing input from managers and its human resources, legal, and security departments. Scout is currently being used in government and in the financial sector, Weber asserts, and is now being tested by clients in manufacturing, health care, and pharmaceuticals. He declines to give numbers.

Shaw jokes that he originally wanted to call Scout “Big Brother.” Doesn’t it, in fact, invade employees’ privacy?

“It’s really very respectful of privacy,” Weber insists. He stresses that only a tiny fraction of emails are ever read, and most of those are reviewed only by the outside clinician—never coming to the attention of co-workers or supervisors. From a legal standpoint, Weber explains, in the U.S. a company needs “informed consent” to look at employees’ emails. “If you have a policy that informs your employees that it’s not their computer, it’s not their data, it’s subject to search, there’s no expectation of privacy—you’re covered,” he says. (Most large U.S. companies already have such policies in place.)

Weber even argues that privacy concerns cut in favor of Scout. “In many cyberattack cases we’re brought into,” he says, “privacy is exactly how people were wronged. Intruders went through their network, read stuff, copied things, photographed them, turned on the microphone or the camera inside the computer—those are huge privacy violations.”

Against that backdrop, the Stroz Friedberg crew claims that Scout is an enlightened approach to a grave, intractable problem. Clients are saying, “ ‘I want it to be something I’m not going to be ashamed to be doing, to have it be part of a caring working environment,’ ” says Stroz. “You have to get to the left of the line so that disasters don’t happen. But you have to do it responsibly.”

A version of this article appears in the July 1, 2016 issue of Fortune.