Facebook (FB) this morning introduced changes to its News Feed to help its users better control what appears there.

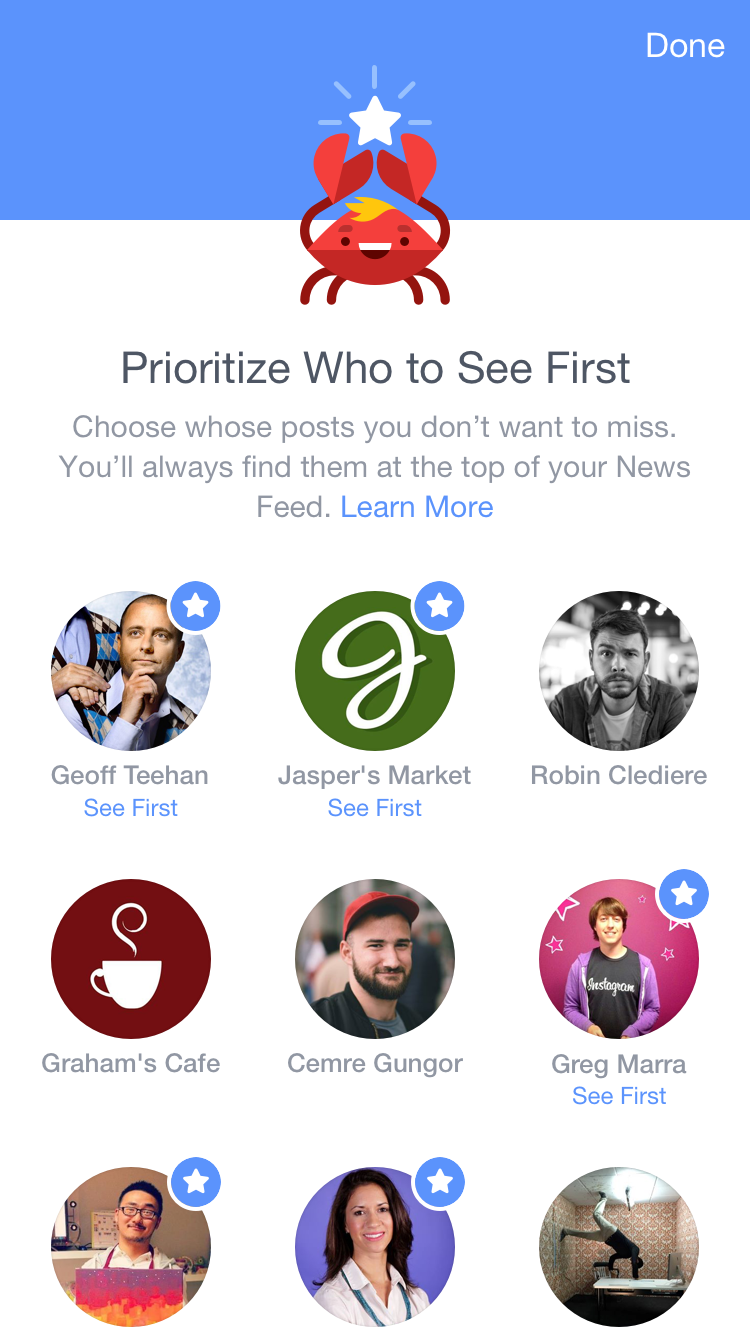

The changes affect a product that is a core component of the site’s appeal: a stream of friend- and advertiser-originated content. Facebook’s 1.4 billion users can now prioritize updates from select friends and Pages with a tool called “See First.” They can also de-prioritize content from friends and Pages that they’d rather not see with unfollow and refollow tools, as well as use an option to “see less” and put someone “on pause” for a brief period of time. The idea behind the additions, says Facebook product director Adam Mosseri, is to give users controls that they can more easily understand.

The company has for years allowed people to hide, filter, block and prioritize various friends and Pages. The new controls are more powerful, simpler, and more noticeable, Mosseri says. There is also a new way to discover Pages to follow.

The move comes amid increasing wariness around Facebook’s News Feed and the algorithm that controls it. As one of the world’s largest Web services, Facebook now wields an incredible amount of power. It is responsible for one of every five minutes Americans spend on their smartphones. More than half of millennials say they get news from Facebook each day. Facebook drives one quarter of all Web traffic—enough to make or break some publishers. Every change to its News Feed can have a massive ripple effect.

Facebook insists that every change it makes to the News Feed algorithm is based on what users want, not its own business incentives. The company even employs 1,000 professional “raters” who surf Facebook for four hours at a time and explain to Facebook engineers why they Liked, clicked, or shared certain things. (While absurd-sounding, this is better than the alternative: in 2012, Facebook experimented on nearly 700,000 users to see whether it could affect their feelings by showing them positive and negative content. Users responded, justifiably, with one emotion: anger.)

But the fact that Facebook pays “professional raters” to use its site in a lab shows exactly how difficult it is for Web companies to solve the Internet’s most persistent problem: figuring out what people truly want to see, read, and respond to. It’s a goal fraught with complications. A person’s intentions and preferences can be difficult to discern, and they do not always follow the same logic. It is hard to A/B test a person’s relationship with their mother, for example (keep the family updates, lose the politicking), or to reconcile an interest in stories about Taylor Swift’s fight with Apple Music with annoyance at stories about her personal life. Put another way, there’s no accounting for taste.

Pandora (P) was early to figure this out with digital music. Pandora’s chief scientist and second employee Eric Bieschke once told me that the recommendation problem is so difficult that he didn’t even believe it was a “solvable problem.” That’s why the company’s recommendations team employs at least 55 data scientists, recommendation system specialists, statisticians, and software engineers; an astrophysicist; 25 music analysts; and 10 curators to tackle the issue.

One way Pandora combats the possibility of bad recommendations is by explaining the “why.” It’s impossible to get every recommendation right, but if you tell a user why you think they’d like a certain song, they’re likely to be more forgiving.

When you play a song on Pandora, you get a message like this one:

From here on out we’ll be exploring other songs and artists that have musical qualities similar to Led Zeppelin. This track, “Come Together” by The Beatles, has similar blues influences, great lyrics, repetitive melodic phrasing, extensive vamping and minor key tonality.

Making your algorithm this transparent builds trust with users. If a jarring song comes up, a person can understand why Pandora thinks he or she might like it. Then that user can adjust his or her settings to make sure it doesn’t happen again.

With its latest update, Facebook takes a step in the same direction. The company has long explained how its News Feed algorithm works through blog posts and various settings, but never on an item-by-item basis in the actual feed. “See First” and the other new settings aim to help people control more of what shows up in their feeds.

“Historically, News Feed has always looked at what do you do, what do you look at, what do you like, what do you comment on,” Mosseri says. “But that wasn’t enough.”