In 1950, at a time when there were fewer than 10 digital computers worldwide, Bill Pfann, a 33-year-old scientist at Bell Laboratories in New Jersey, discovered a method that could be used to purify elements, such as germanium and silicon. He could not possibly have imagined then that this discovery would enable the silicon micro-chip and the rise of the computer industry, the Internet, and the emergence of the information age. Today, there are about 10 billion Internet-connected devices in the world, such as laptops and mobile phones, and at the heart of each of these devices, there is at least one such micro-chip that acts as its “engine”.

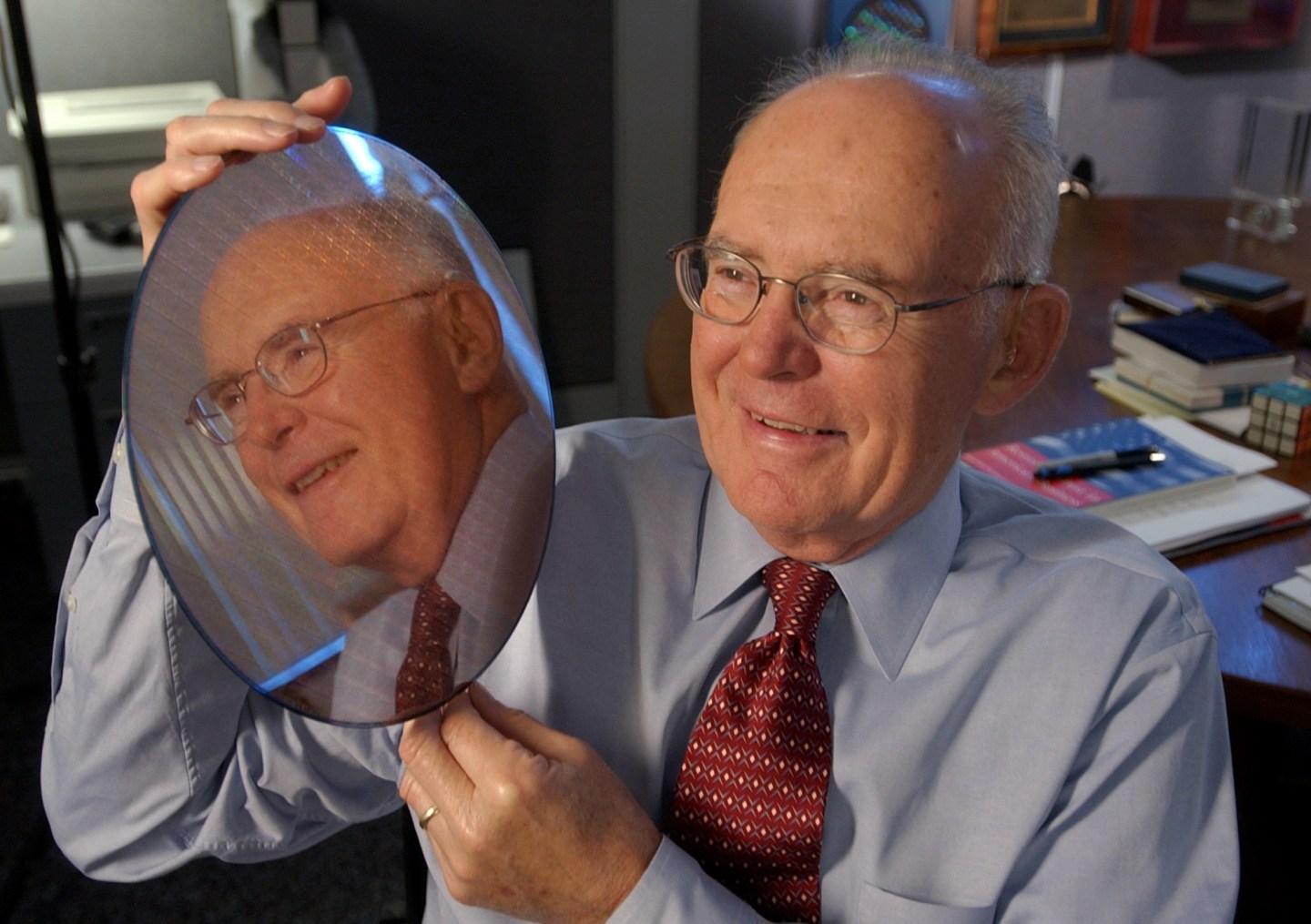

The reason behind this relentless progress is neatly contained in a prophetic law called Moore’s Law, which was announced 50 years ago this Sunday. The micro-chip is built with tiny electrical switches called transistors, which are made of purified silicon, and the law stated that the number of transistors on a chip would double every year. In 1975, Gordon Moore revised his forecast to state that the count would double every two years. The law has held true since.
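To make the difference between the two forecasts concrete, here is a minimal sketch of the doubling arithmetic. The function name `projected_count` and the starting count of 1,000 transistors are hypothetical choices made for illustration; they do not come from Moore's forecast or from any real chip.

```python
def projected_count(initial_count: float, years: float, doubling_period_years: float) -> float:
    """Project a count that doubles once every `doubling_period_years` years."""
    return initial_count * 2 ** (years / doubling_period_years)


# Hypothetical starting point: a chip with 1,000 transistors.
start = 1_000

# Compare the original forecast (doubling every year) with the
# 1975 revision (doubling every two years) over one decade.
for period in (1.0, 2.0):
    after_decade = projected_count(start, 10, period)
    print(f"Doubling every {period:g} year(s): {after_decade:,.0f} transistors after 10 years")
```

Over a single decade the one-year forecast implies ten doublings and the two-year forecast only five, which is why the revision matters so much over longer spans.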

Why is Moore’s Law relevant? Because this doubling of the number of transistors led to computer chips that could be packed with increasingly sophisticated circuitry that was both energy efficient and cheap. This led to the widespread adoption of computers, mobile phones, and the information technology revolution.

Computation is about 10 million times cheaper than it was 40 years ago, and the computing power held in a smart phone outstrips that of the workstations that computer scientists used in their offices in the 1990s. That we have so far been able to hold true to Moore’s Law is the reason that the electronic circulation of information has been commoditized, changing the way many of us learn, bank, travel, communicate and socialize.

Take the example of social networking using a mobile phone. It works because the cost of a transistor has dropped a millionfold, and computing has become about 10,000 times more energy efficient, since 1980, when this writer first went to engineering school. Consequently, a $200 smart phone powered by a biscuit-sized battery contains a micro-chip with a few billion transistors in it and enough computing power to digitally process an image, and then upload and share it wirelessly using powerful mathematics to encode the data. This is a consequence of Moore’s Law in action.
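As a rough check on how these figures relate to a steady doubling, one can work backward from an overall improvement factor to the doubling period it implies. The sketch below assumes an end year of 2015, inferred from the 50th anniversary of a law first stated in 1965, and assumes smooth exponential change over the whole interval; the function name `implied_doubling_period` is hypothetical.

```python
import math


def implied_doubling_period(improvement_factor: float, elapsed_years: float) -> float:
    """Years per doubling (or halving) implied by an overall improvement factor."""
    return elapsed_years / math.log2(improvement_factor)


# Assumption: the comparison runs from 1980 to 2015.
elapsed = 2015 - 1980

cost_period = implied_doubling_period(1e6, elapsed)        # millionfold drop in transistor cost
efficiency_period = implied_doubling_period(1e4, elapsed)  # 10,000-fold gain in energy efficiency

print(f"Transistor cost halves about every {cost_period:.1f} years")
print(f"Energy efficiency doubles about every {efficiency_period:.1f} years")
```

Under these assumptions, the millionfold cost reduction corresponds to a halving period somewhat under two years, broadly in line with the revised form of the law.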

Yet, on its 50th anniversary, there are tell-tale signs that Moore’s Law is slowing, and we are almost certain that the law will cease to hold within a decade. With further miniaturization, silicon transistors will attain dimensions of the order of only a handful of atoms, and the laws of physics dictate that the transistors and electronic circuits will cease to work efficiently at that point. As Moore’s Law slows down, innovations in other areas, such as developments in software, will pick up the slack in the short term.

But in the longer term, there will be fundamental changes in the essential design of the classical computer, which, remarkably, has remained unchanged since the 1950s. Designed for precise calculations, today’s computing machines do not make inferences or qualitative decisions, or recognize patterns in large amounts of data, efficiently. The next substantive leap forward will be in computers with human-like cognitive capabilities that are also energy efficient. IBM’s Watson, the computing system that won the television game show Jeopardy! in 2011, consumed about 4,000 times more energy than its human competitors. This experience reinforced the need for new energy-efficient computing machines that are designed differently from the sequential, calculative methodology of classical computers and are inspired, perhaps, by the way biological brains work.

A journalist recently asked me whether the continuation of Moore’s Law was indispensable. The beauty of the collective enterprise of human innovation is that it ensures nothing remains indispensable indefinitely for technology to progress. Decades from now, one might look back at the era of Moore’s Law as a golden period in which computers came of age through a masterful display of an industry’s ability to miniaturize and to create billions of flawless, identical copies of tiny circuits at factories throughout the world. But, much as migratory birds flying in V-formation take turns at the lead position, there will, by that future time, be many other technologies that have carried us forward in the information age.

Supratik Guha is director of physical sciences at IBM.