YouTube announced on Thursday that it will switch off the comments section on videos showing minors, in response to reports that highlighted the problem of pedophiles leaving sexual comments on videos of children.

“We recognize that comments are a core part of the YouTube experience and how you connect with and grow your audience. At the same time, the important steps we’re sharing today are critical for keeping young people safe,” the company said in a blog post.

YouTube has disabled comments on tens of millions of videos the company believes “could be subject to predatory behavior.” While the new policy is designed to create a safer community, YouTube said it will allow some creators to keep their comments sections active.

“These channels will be required to actively moderate their comments, beyond just using our moderation tools, and demonstrate a low risk of predatory behavior,” the blog post said.

YouTube is also launching a new "classifier" that the company said is twice as effective at weeding out comments that violate YouTube's policies. The response comes after several advertisers, including Disney, Nestle, and Fortnite maker Epic Games, pulled their advertising dollars from YouTube after it was discovered that some of their ads were running alongside the predatory comments.

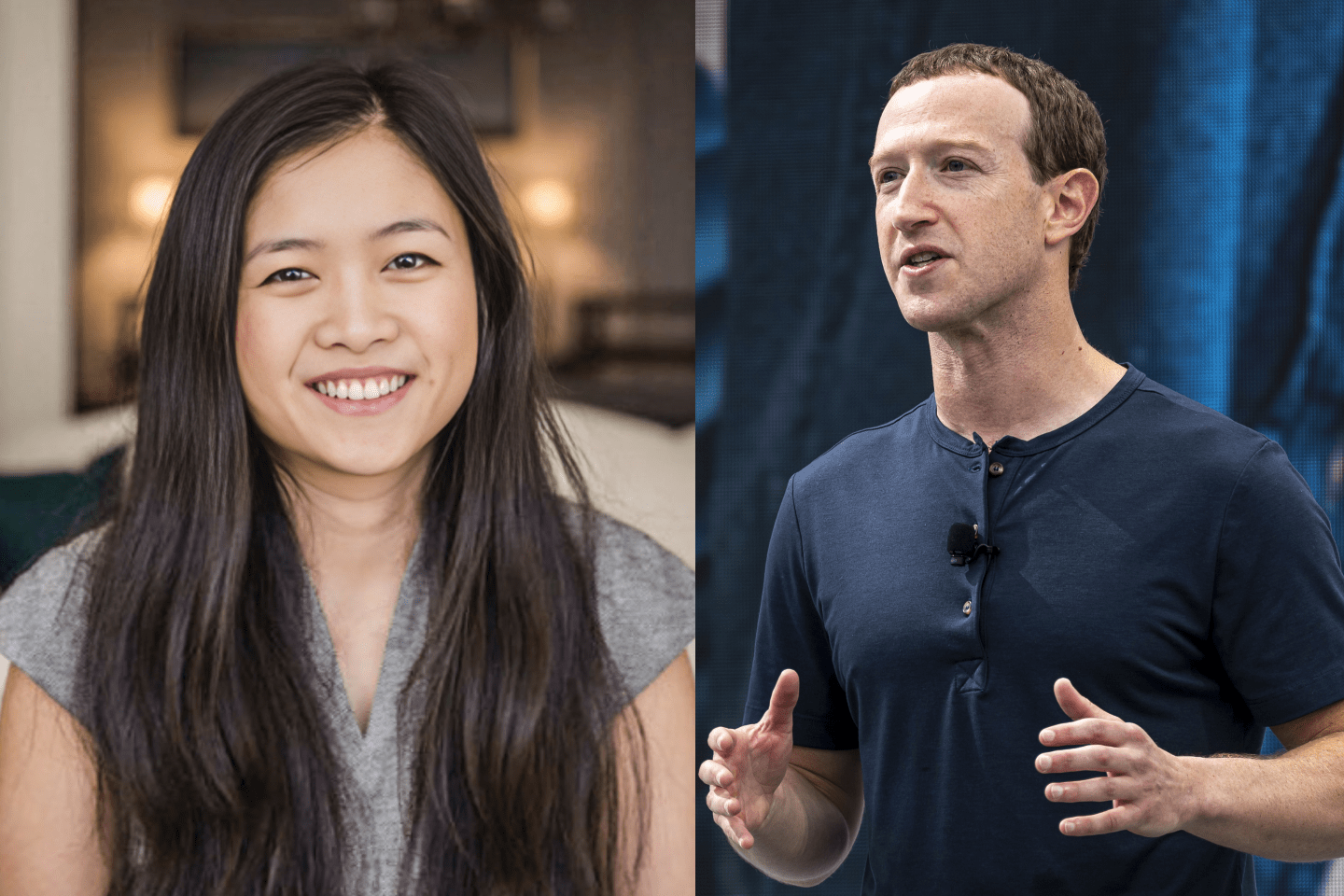

Dylan Collins, CEO and co-founder of SuperAwesome, a London-based company whose products help other companies create kid-friendly online experiences, said the new policy is a positive step forward for YouTube.