For years, Twitter has been criticized for focusing more on freedom of speech and anonymity than on curbing abuse and harassment of its users. Recently, however, the company has been trying to show that it’s listening to those complaints, and on Wednesday it rolled out new features designed to help curb such attacks.

The most far-reaching step, and probably also the most difficult, is to identify abusive behavior before users report it and take immediate action to reduce its visibility.

Twitter has gradually improved how it handles abuse and harassment, by removing hurdles to reporting it and speeding up its response time (although some users argue the process is still too difficult). But now, the company says it hopes to identify abuse while it is actually occurring, instead of waiting until a user flags it.

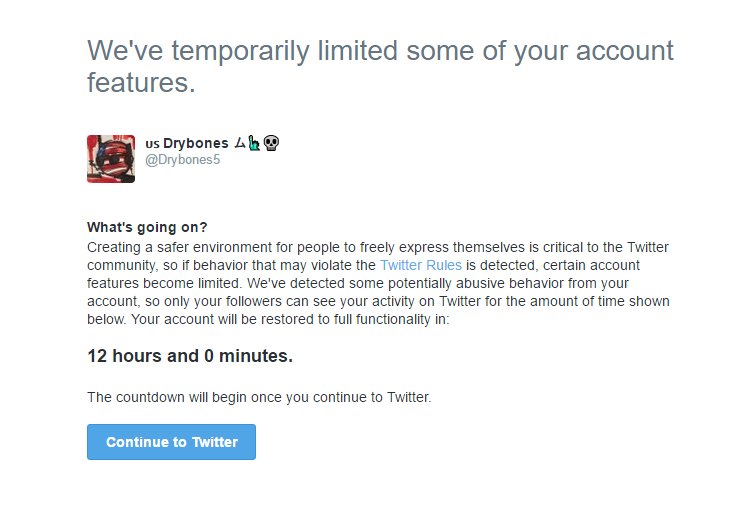

According to a blog post by Ed Ho, Twitter’s VP of engineering, if the service’s algorithm detects abuse, it will take one of a number of steps to reduce the potential reach and harm of those tweets, including limiting the harasser’s account for a set time period.

This includes limiting the reach of a user’s tweets so that only people who already follow them can see their posts. This appears to be the “time out” feature that a number of users reported seeing recently after they posted certain tweets considered by the company to be in violation of its rules. They received a message saying their account would be restricted for up to 12 hours, and that it would then return to normal.

A source with knowledge of Twitter’s plans said that future steps could include requiring an account that has been flagged as abusive by the algorithm to provide a verified phone number or email address before the restriction is lifted.

The Twitter blog post notes that the service “supports the freedom to share any viewpoint,” but that the company will take action if an account holder violates the rules. The difficult part for Twitter will be determining—using only an algorithm—what is a joke, or a sarcastic remark between friends, or some other innocuous statement, and what is actual harassment or abuse.

“We aim to only act on accounts when we’re confident, based on our algorithms, that their behavior is abusive,” says Ho. “Since these tools are new we will sometimes make mistakes, but know that we are actively working to improve and iterate on them everyday.”

A number of the users whose accounts were limited while this feature was being tested complained that their free-speech rights were being restricted for no reason, just because they used a specific word. One tweet said a user had been “put on the naughty step” by a “digital daddy.”

In the past, Twitter has trumpeted its status as the “free-speech wing of the free-speech party,” the service responsible for helping topple dictators in Egypt and Libya. Cracking down on abuse inevitably means deciding what kind of speech is tolerated and what isn’t, something Facebook has also run into difficulty with.

In addition to the curbs that use technology, Twitter said that it is adding more fine-grained filtering options for notifications, so that users can control what they see and from whom. For example, users will be able to filter out tweets from accounts that have no profile picture, and those that have unverified email addresses or phone numbers.

Twitter also said it is enhancing the “mute” function it introduced in November, which allows users to block tweets based on specific keywords or phrases. Now, users will be able to set limits for how long they want such blocks to be in effect.

The company added that it will try to be more “open and transparent” about the process it uses to handle abuse reports and other safety problems. It is an apparent response to complaints that users seldom knew whether anyone got their reports of harassment, and were never informed about what action had been taken.

Ho added that Twitter is trying to learn quickly from its mistakes as well, like the recent case in which the company announced a change to how it notifies users when they have been added to a list (a collection of accounts that are followed by a specific user). It said that users would no longer be notified, but after a torrent of negative feedback, that change was rolled back within a matter of hours.