Google wants to make your phone capable of faster, battery-sipping artificial intelligence. By taking AI out of the cloud and putting it on the device, Google plans to make waiting for a response from your phone a thing of the past.

Your phone is one of the most challenging environments in which to implement true artificial intelligence, yet it’s also the most important if the goal of letting computers aid people in everyday decision-making is ever to come to pass. The processing power required to enable a computer to learn is enormous, and it sucks up huge amounts of energy. In fact, when Facebook engineers were designing a new form of server to train computers to learn, one of their biggest battles was with the folks providing power to the data center. The engineers wanted too much.

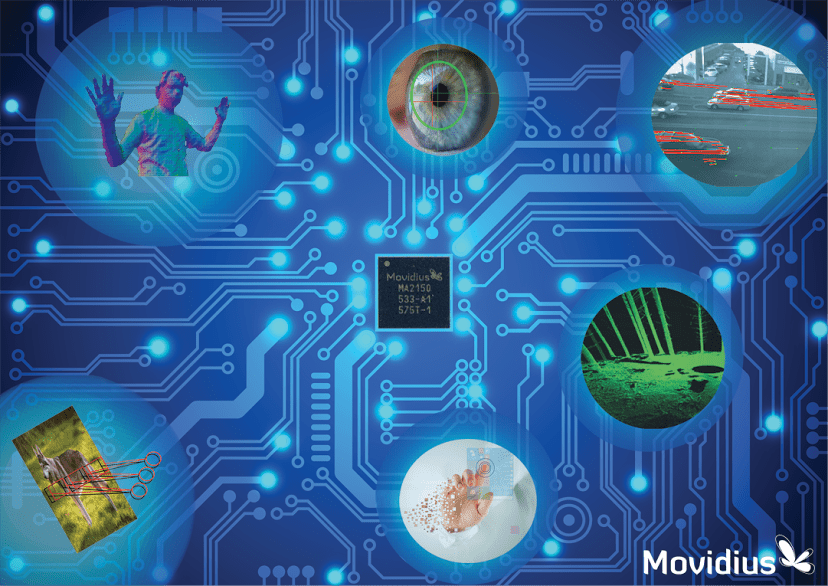

So combining battery-powered phones and AI is a challenge, but it’s one that Google is taking on with an order for new chips from Irish firm Movidius. Movidius makes a line of processors that allow for computer vision on mobile devices in a power-efficient manner. The search giant has been testing the first-generation Movidius Myriad chips in Project Tango, its computer vision and 3D mapping project, and has now gone public with an order for the second-generation product.

Now Google (GOOG) wants to take Movidius’s expertise in computer vision and combine that with machine learning to create mobile phones that can presumably “see” and learn to recognize the world around them without having to rely on pattern matching. This is a tall order. Most machine learning starts with training the machines, which happens in a lab where researchers use clusters of servers equipped with graphics processors. It is time-consuming, power-consuming and hugely iterative.

Then there is the second aspect of machine learning, which is the execution of that training on data sets in the real world. That still benefits from specialized silicon, and Google plans to bring the models it builds over to the Movidius chips so it can run them on mobile devices. This reduces latency, the amount of time between a request and the delivery of an answer.

Already it’s frustrating to wait for translation, or for a service like Google Now to work out what you are trying to say, as Google shuttles your spoken words back to the cloud to be converted into a form a computer can understand before it carries out the task at hand. Doing any of this on the device will speed up the service and will help when there is no Internet access.

Since Movidius’s chips are designed first and foremost for vision and audio, their initial tasks might involve the above examples or something like instantly identifying your friends in photos for Google’s Picasa service. Or perhaps Google might create a service that offers a visual search engine for places and things. For example, if you snap a picture of a dog, you could get its breed and other information immediately.

I’d love to see a visual search engine for food that corresponds with calorie data. I imagine Google would too.