Cloudera, which helped popularize Hadoop as a platform for analyzing huge amounts of data after its founding in 2008, is overhauling its core technology. The One Platform Initiative the company announced Wednesday lays out Cloudera’s plan to officially replace MapReduce with Apache Spark as the default processing engine for Hadoop.

Cloudera chief technologist Eli Collins said the company is “at best” halfway through the process from a technology standpoint and should be done in about a year. When the work is complete, Spark should offer security, manageability, and scalability comparable to MapReduce’s, and should be as tightly integrated with the rest of the technologies that make up the ever-expanding Hadoop platform.

Collins said Spark’s existing weaknesses are “OK for early adopters, but really not acceptable to our customer base” as a whole. Cloudera says it has more than 100 customers running Spark in production—including Equifax, Experian, and CSC—but realizes that broader adoption and an improved Spark experience are a chicken-or-egg type of problem.

“If Spark is everywhere, then it’s a safe technology choice,” Collins explained. “And if it’s a safe technology choice, we can move the ecosystem.”

The history of the move to Spark is in some ways as old as Hadoop itself. Google (GOOG) created MapReduce in the early 2000s as a faster, easier implementation of existing parallel processing approaches, and the creators of Hadoop developed an open source version of Google’s work. However, while MapReduce proved revolutionary for early big data workloads (nearly every major web company is a heavy Hadoop user), its limitations became clearer as Hadoop and big data became mainstream technology movements.

Large enterprises, technology startups, and other potential Hadoop users saw the value in storing lots of data using the Hadoop file system and in analyzing that data, but they wanted something faster and more flexible than MapReduce. MapReduce was designed for indexing the web at places like Google and Yahoo (YHOO), a batch-processing workload where latency was measured in hours rather than milliseconds. MapReduce is also notoriously difficult to program, a problem that helped exacerbate the “big data skills gap” to which analyst firms and consultants have been pointing for years.

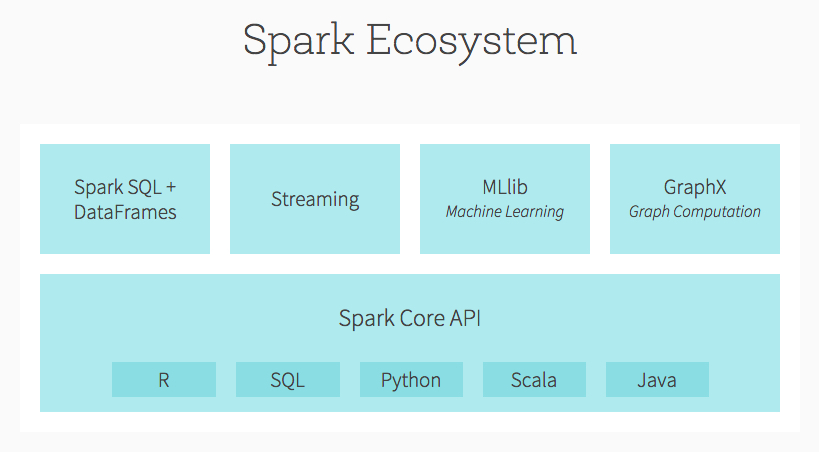

When Spark was created a few years ago at the University of California, Berkeley, it was the solution Hadoop vendors, Hadoop users, and venture capitalists alike needed to resolve their MapReduce woes. Spark is significantly faster and easier to program than MapReduce, meaning it can handle a much broader array of jobs. In fact, the project includes libraries for real-time data analysis, interactive SQL analysis, and machine learning, in addition to its core MapReduce-style engine.

And better yet, Spark is designed to integrate with Hadoop’s native file system. This means Hadoop users don’t have to move their terabytes or even petabytes of data elsewhere in order to take advantage of Spark. By 2013, major VC firms had begun putting millions of dollars into Databricks, a startup founded by the creators of Spark, and major Hadoop vendors Cloudera, MapR, and Hortonworks (HDP) were beginning to integrate Spark into their Hadoop distributions.

“Spark is one of the few components where you’ve seen 100% adoption and 100% investment [from the Hadoop community],” Cloudera’s Collins said. Even Yahoo, which sponsored and drove Hadoop’s development early on and from which Hortonworks spun out, should be off MapReduce within a year, he added. And in June, IBM (IBM) announced a $300 million commitment to help develop Spark as the future of seemingly all analytic workloads.

That’s saying something in a technology market that has been characterized by corporate one-upmanship and flat-out insults over the years.

So now, Cloudera and the greater Hadoop community are trying to take the Spark transition over the finish line by making sure it works where MapReduce works and can handle as much (or nearly as much) data as MapReduce can. The latter is challenging—large Spark users might run it on hundreds of nodes, whereas large MapReduce users might run it on tens of thousands of nodes—but heavy Spark users and developers are working to close the gap.

“If we really want to replace MapReduce, we have to dot all those Is and cross all those Ts,” Collins said.

If the Hadoop community does it right, it could reap serious rewards as the technology, and “big data” in general, really begins to catch on among mainstream businesses. “The vast majority of people who’ll use Hadoop in the next 10 years haven’t seen it yet,” Collins said. “…and Spark will be there [when they do].”


Sign up for Data Sheet, Fortune’s daily newsletter about the business of technology.